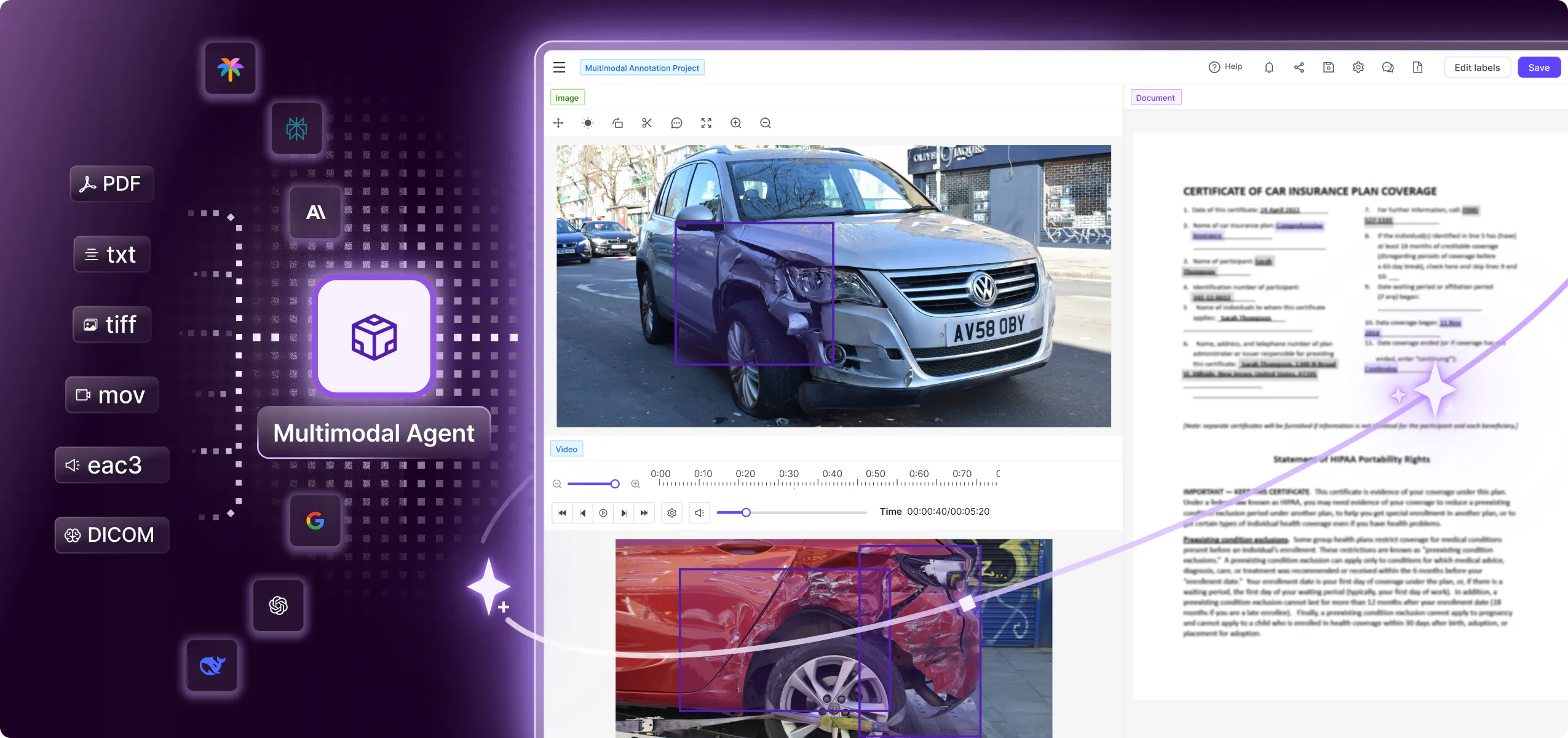

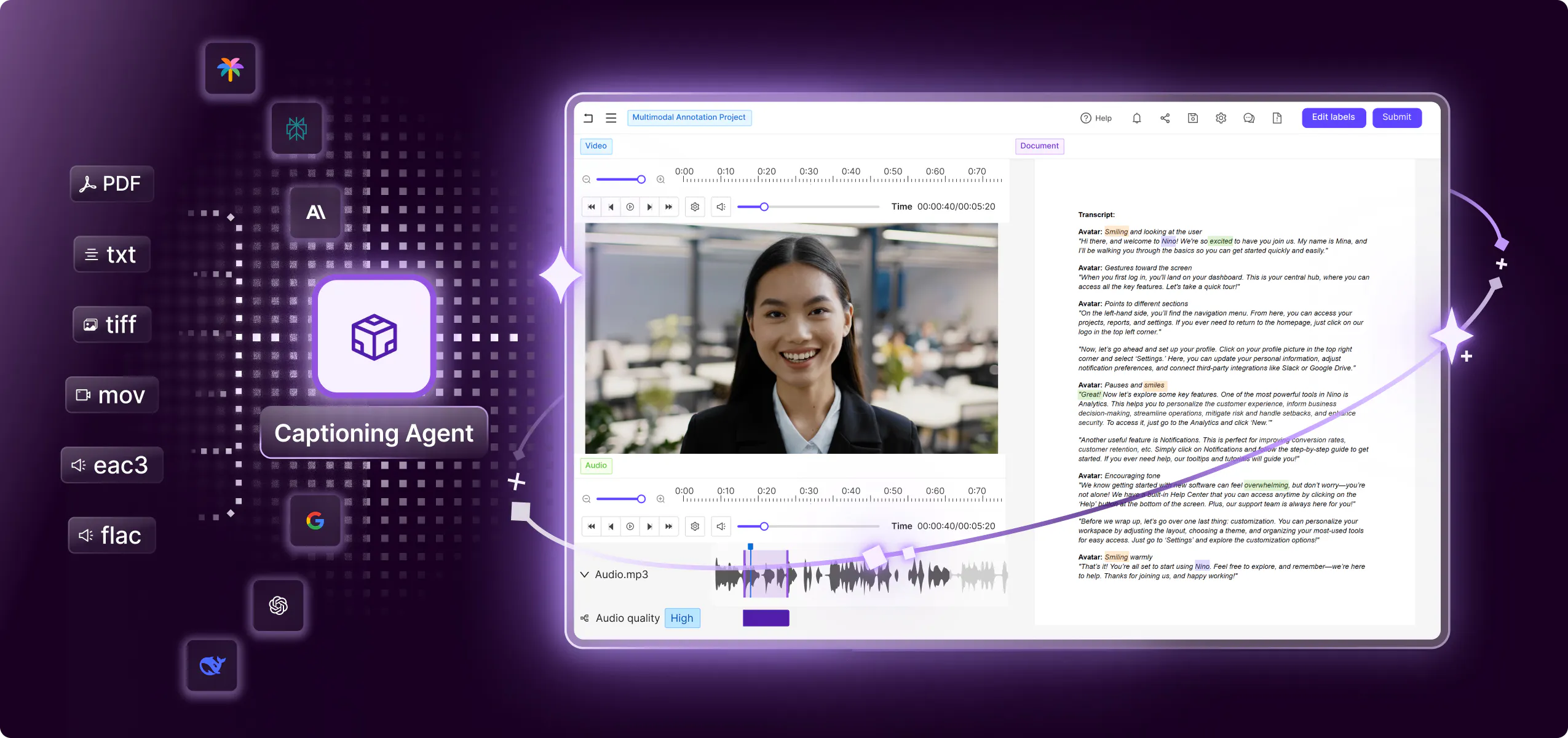

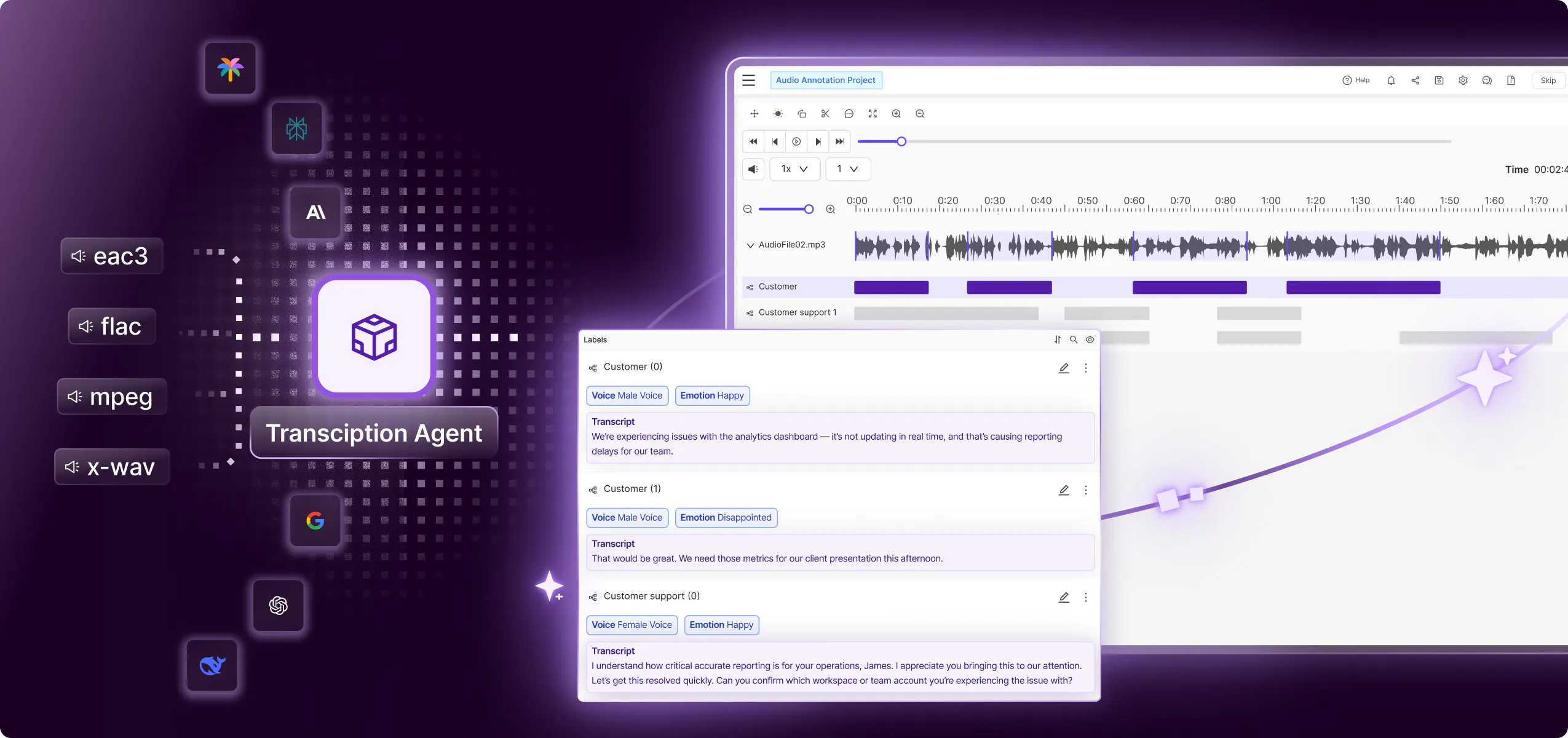

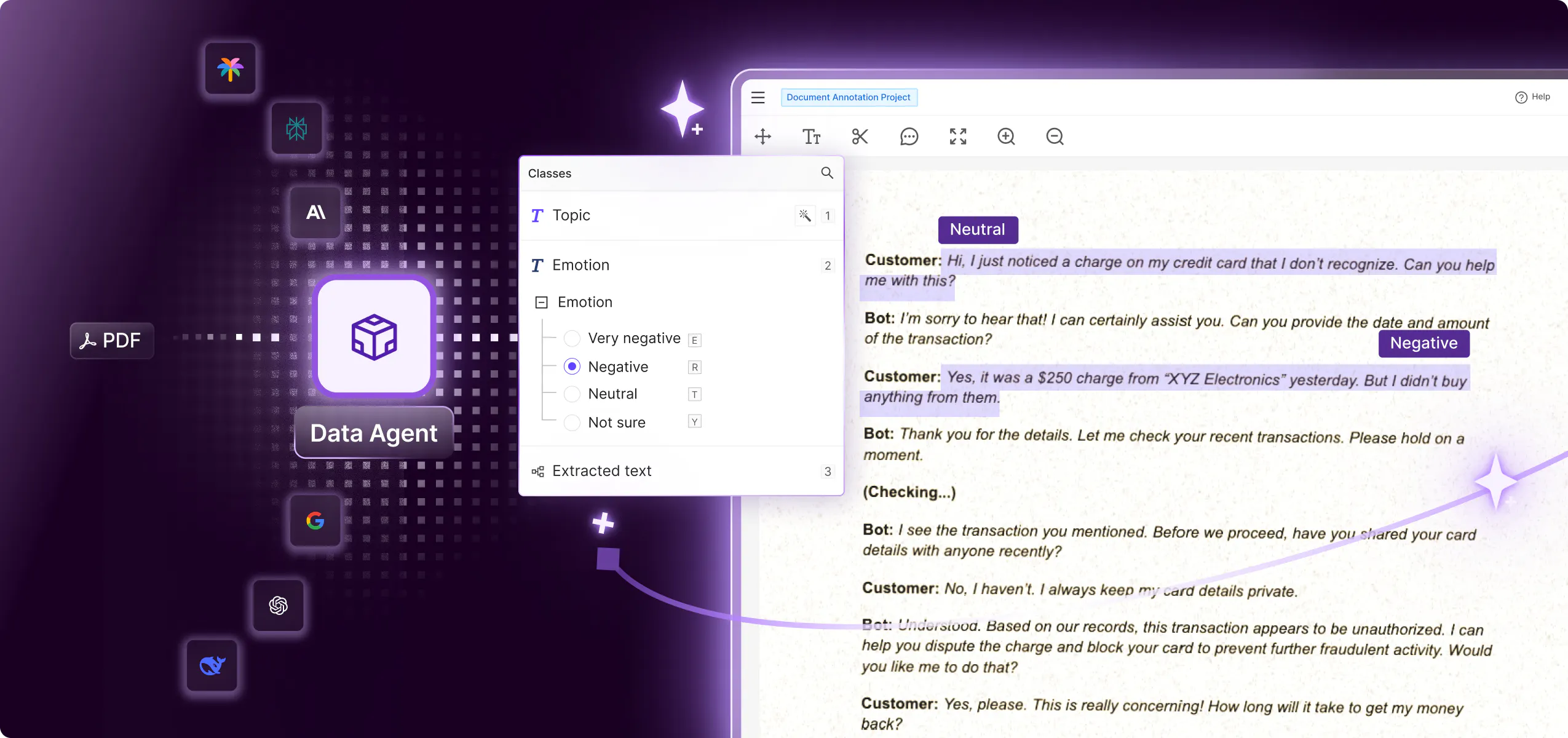

Automate your AI data pipelines with Agents

Integrate humans, SOTA models, and your own models into data workflows to cut the time it takes to build high-quality multimodal datasets at scale.

Fast-track AI development with better data

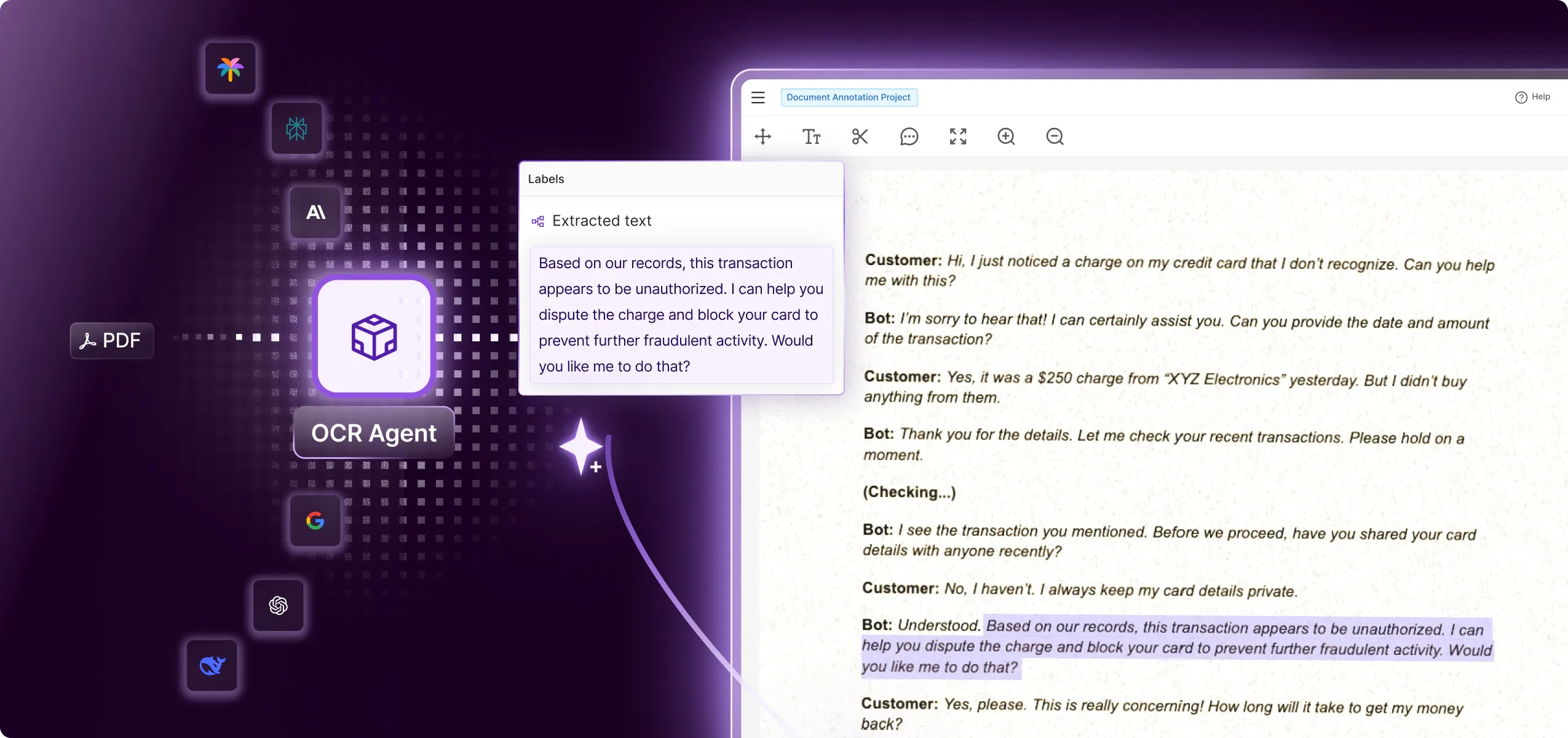

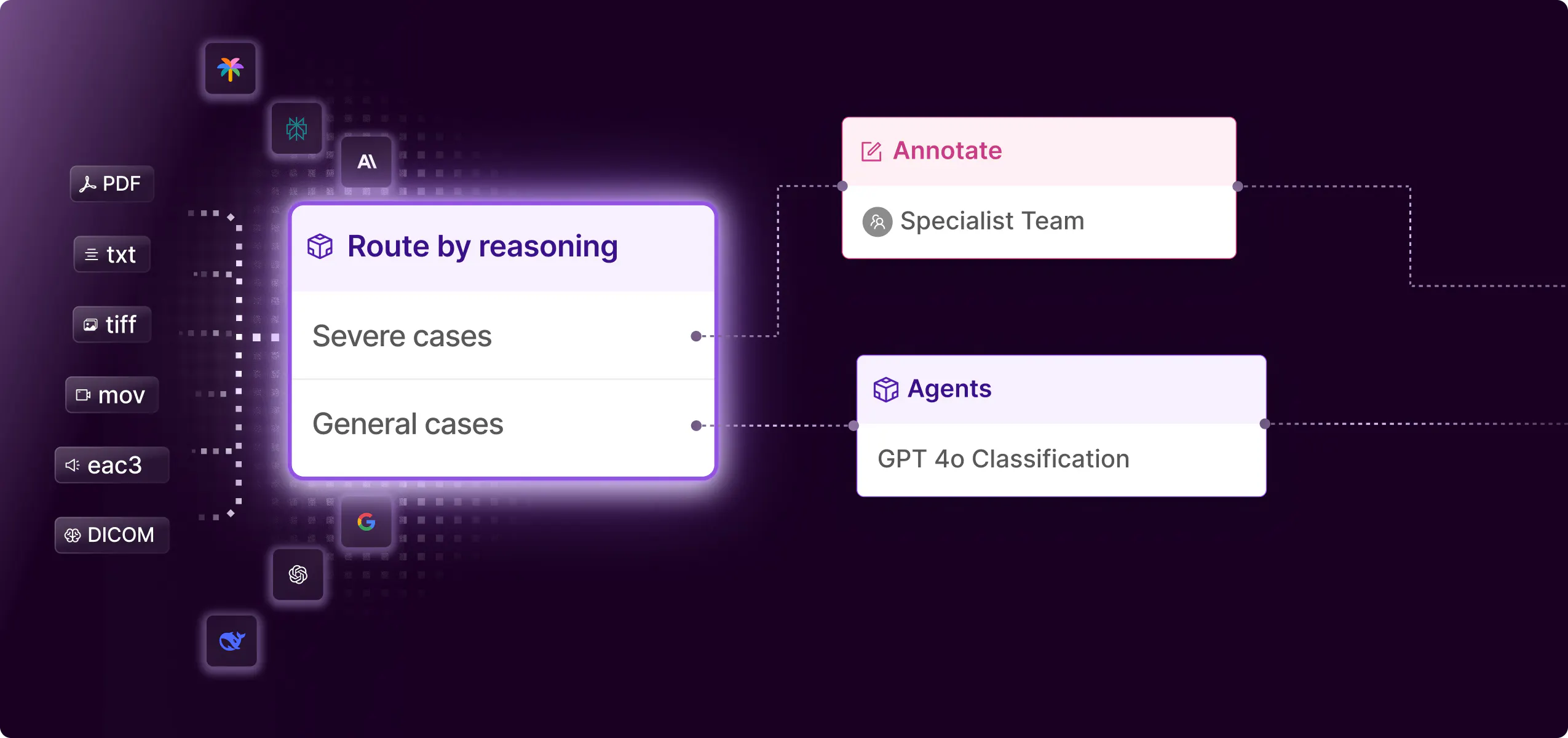

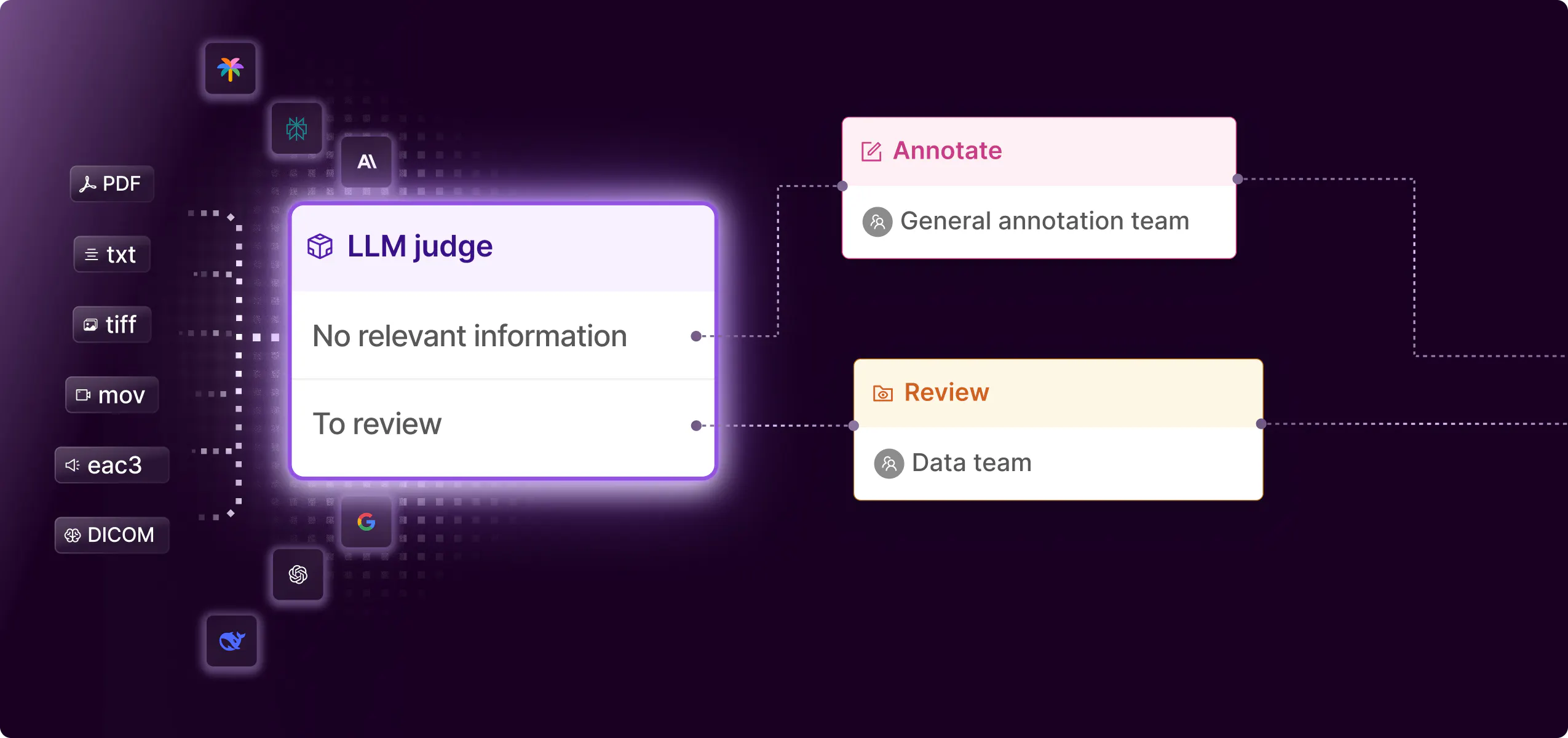

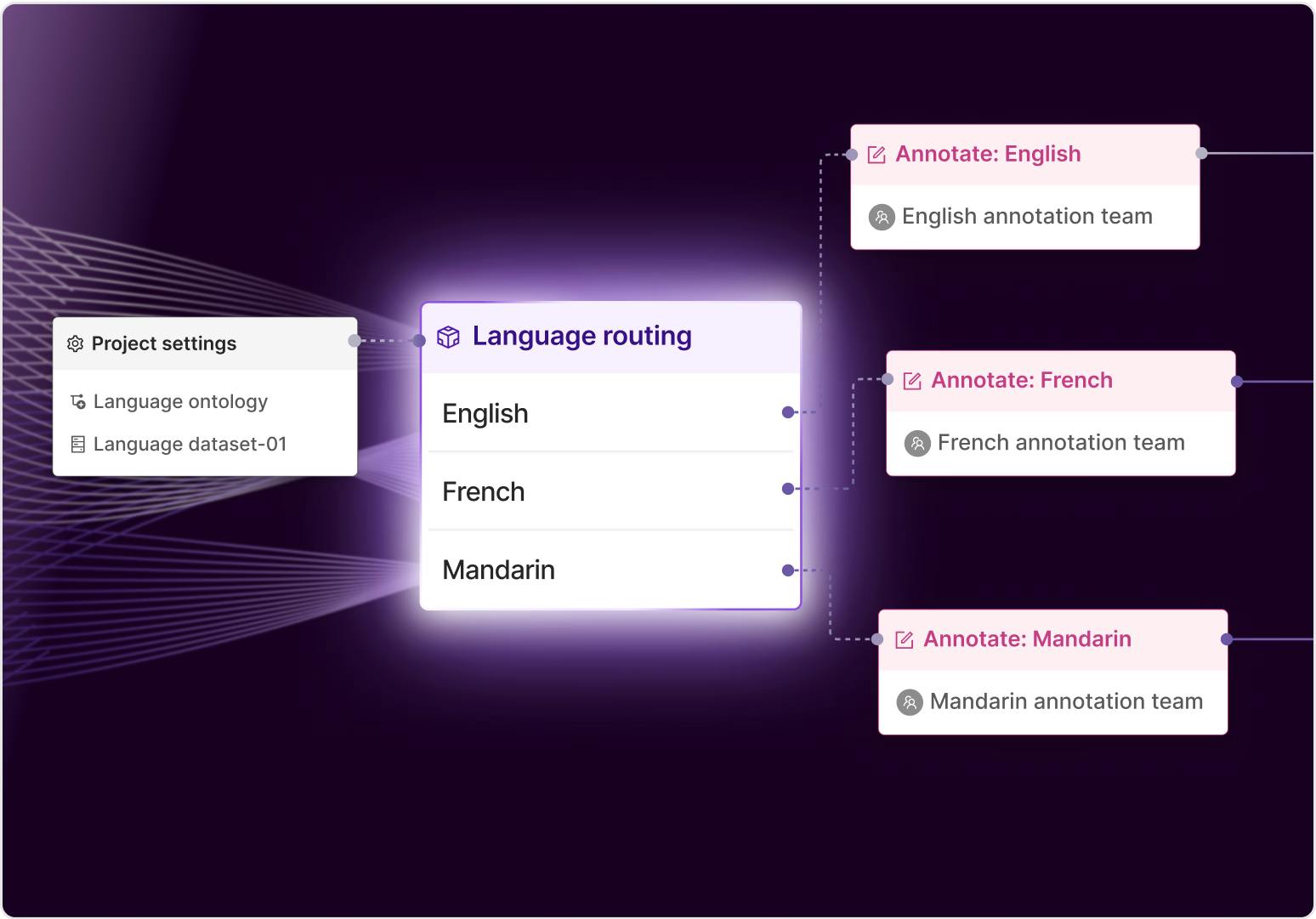

Integrate the right AI model to automate data workflows

Integrate your own model or SOTA foundation models to prepare accurate data, fast. Automate data tasks such as pre-labeling, reasoning-based routing, and evaluation.

Scale data pipelines without scaling headcount

Orchestrate bulk data labeling with AI so your data workflows can handle large data volumes. Integrate human-in-the-loop (HITL) QA to maintain data quality and label accuracy.
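
As a rough illustration of the idea, AI pre-labels can be split by model confidence so that only uncertain items reach human reviewers. The sketch below is not Encord's SDK: the `PreLabel` type, the `route` function, and the `review_threshold` value are hypothetical names and settings chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    """A model-generated label for one data item (hypothetical type)."""
    item_id: str
    label: str
    confidence: float  # model confidence in [0, 1]


def route(prelabels, review_threshold=0.8):
    """Split pre-labels into auto-accepted items and items for human review.

    The threshold is an assumed value; in practice it would be tuned
    against the label accuracy measured during QA.
    """
    auto_accepted, needs_review = [], []
    for p in prelabels:
        if p.confidence >= review_threshold:
            auto_accepted.append(p)
        else:
            needs_review.append(p)
    return auto_accepted, needs_review


batch = [
    PreLabel("img-001", "cat", 0.97),
    PreLabel("img-002", "dog", 0.55),
    PreLabel("img-003", "cat", 0.80),
]
accepted, review = route(batch)
print("auto-accepted:", [p.item_id for p in accepted])
print("human review:", [p.item_id for p in review])
```

Raising the threshold sends more items to reviewers and lowers the risk of accepting a wrong label; lowering it does the opposite.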

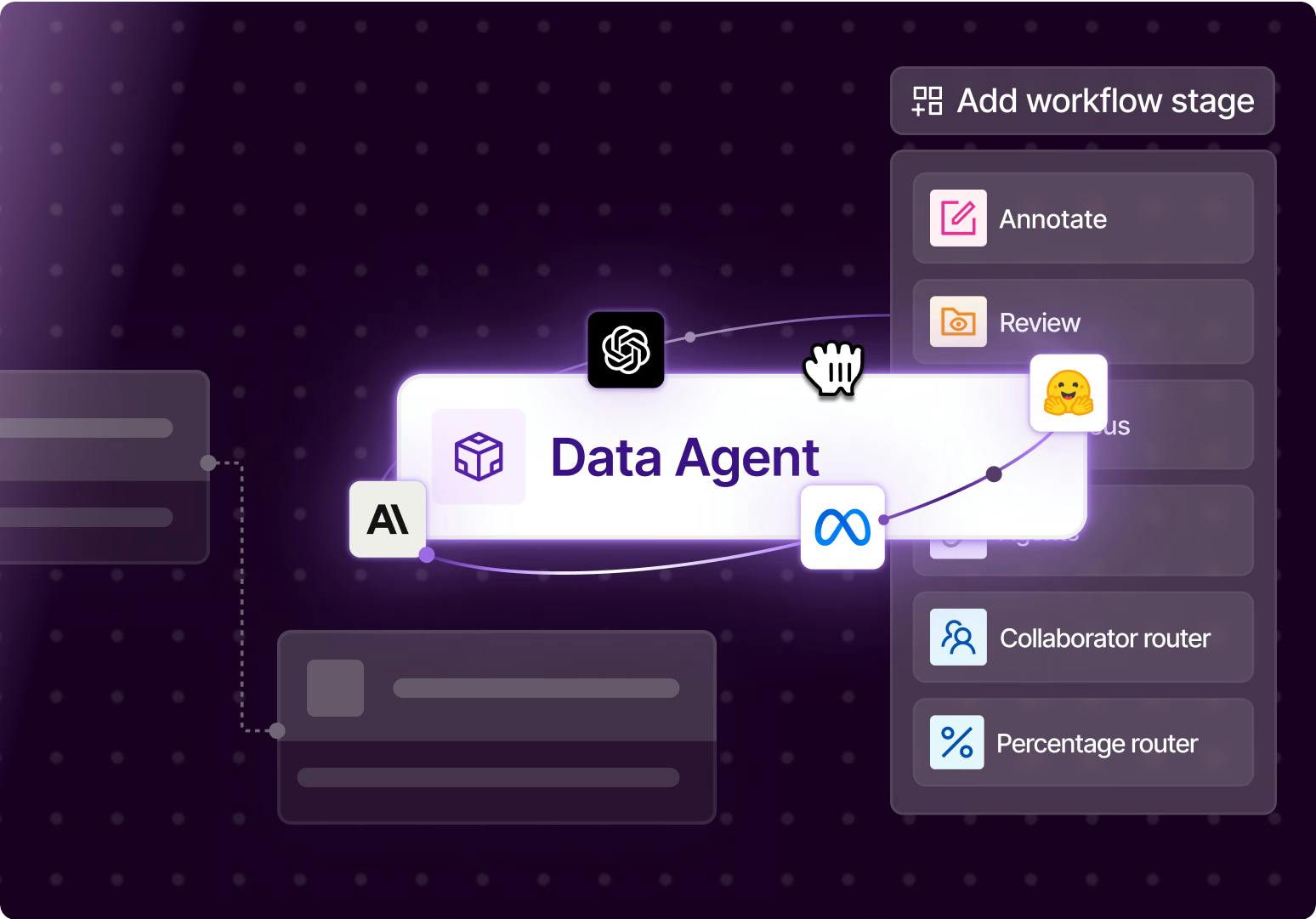

Save thousands of hours, automate any data task

Build multi-step workflows that unite AI models, labeling teams, and reviewers to produce high-quality labeled data. Maintain visibility of annotation progress across multiple teams.

Models

Integrate any SOTA foundation model into your data workflow

Enterprise-grade.

Built for scale.

Designed for reliable AI.

API/SDK-first. Zero data migration. Your data stays in your cloud.

Visit trust center

Get the data right

300+ of the best AI teams in the world use Encord. Join them.