Few Shot Learning

Encord Computer Vision Glossary

What is Few Shot Learning?

Few-shot learning is a subfield of machine learning that builds on supervised learning. It addresses the challenge of training models to make accurate predictions or classifications from very limited labeled data. Whereas traditional supervised learning typically requires large labeled datasets, few-shot learning studies methods that let a model learn effectively from only a handful of examples per class.
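As a rough illustration of learning from a handful of labeled examples, the sketch below classifies a query by comparing it with the mean feature vector of each class. The nearest-class-mean approach, the two-dimensional feature vectors, and the names `class_prototypes`, `classify`, and `support_set` are assumptions made for this example; they are not a method prescribed by this glossary entry.

```python
import math


def class_prototypes(support):
    """Average the labeled feature vectors of each class into one prototype."""
    prototypes = {}
    for label, vectors in support.items():
        dim = len(vectors[0])
        prototypes[label] = [
            sum(v[i] for v in vectors) / len(vectors) for i in range(dim)
        ]
    return prototypes


def classify(query, prototypes):
    """Return the label whose prototype is closest in Euclidean distance."""
    return min(prototypes, key=lambda label: math.dist(query, prototypes[label]))


# Hypothetical task: two classes with three labeled examples each.
# In practice the vectors would come from a pretrained feature extractor.
support_set = {
    "cat": [[0.9, 0.1], [0.8, 0.2], [1.0, 0.0]],
    "dog": [[0.1, 0.9], [0.2, 0.8], [0.0, 1.0]],
}

prototypes = class_prototypes(support_set)
prediction = classify([0.7, 0.3], prototypes)
print(prediction)
```

Because the only "training" here is averaging a few vectors, a new class can be added by supplying a few more labeled examples, which is the kind of data efficiency few-shot learning aims for.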

Applications

Few-shot learning has diverse applications across various domains, including:

- Medical Imaging: Assisting in the diagnosis of rare diseases or medical conditions by learning from limited patient data.

- Recommendation Systems: Personalizing content recommendations for users with sparse historical interaction data.

- Anomaly Detection: Identifying anomalies or outliers in data with only a small set of labeled anomalies.

- Image Recognition: Recognizing new species of animals or identifying rare plants in wildlife monitoring with limited training images.

- Text Summarization: Automatically generating concise summaries of articles or documents with only a few reference summaries for training.

- Language Understanding: Training chatbots or virtual assistants to understand and respond to user queries in new languages or domains.

Significance

Few-shot learning is significant for several reasons:

- Reduced Data Requirements: It reduces the data annotation burden, making it feasible to tackle tasks with limited labeled data, which is common in many real-world scenarios.

- Adaptability: Few-shot learning enables models to adapt quickly to new concepts, objects, or languages without extensive retraining.

- Generalization: Few-shot methods are designed to generalize from a handful of examples, so a well-trained model can perform well on data points it has not seen before.

- Cost Savings: Requiring fewer labeled examples can lead to significant cost savings in data collection and annotation.

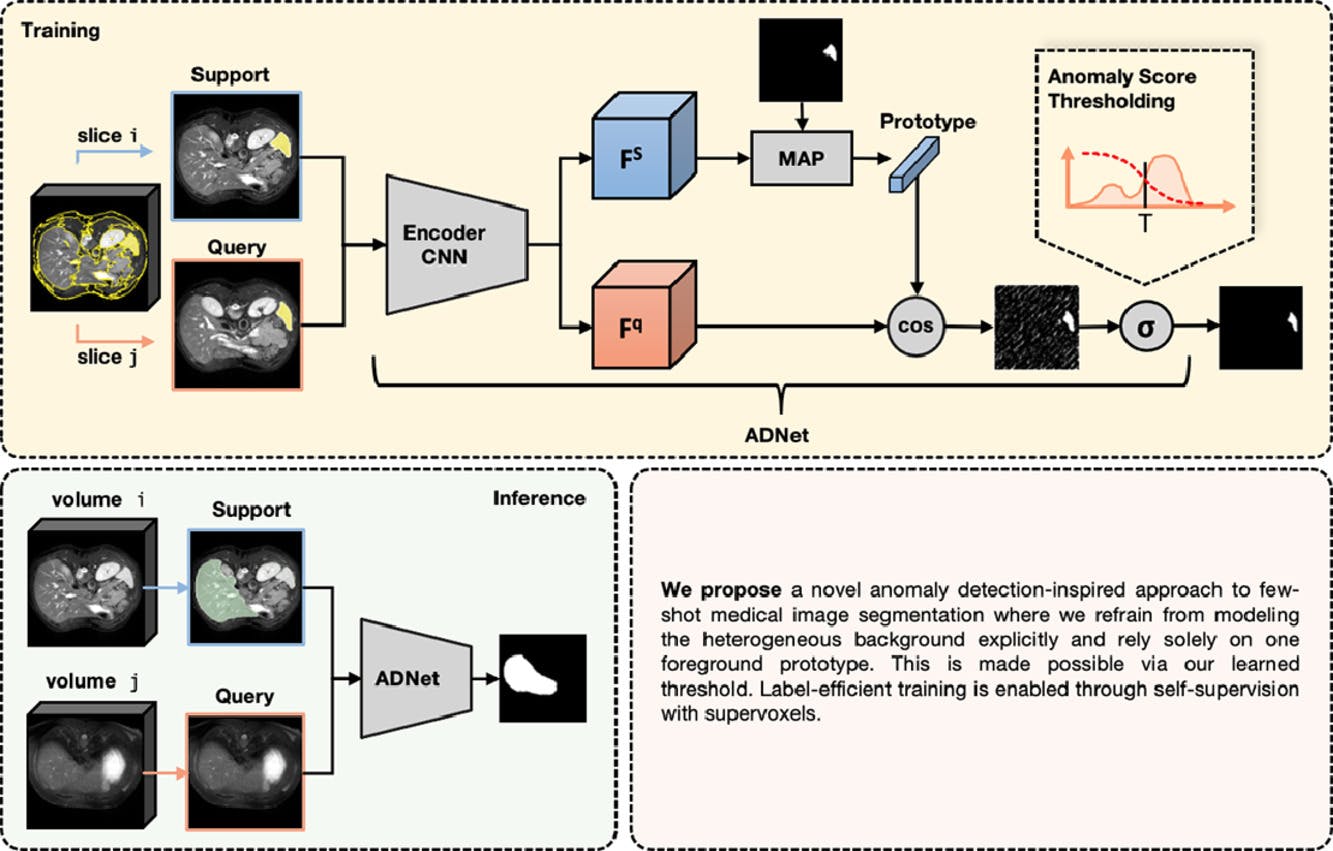

Anomaly detection-inspired Few-Shot Medical Image Segmentation

Limitations of Few Shot Learning

Few-shot learning is a powerful approach but has its limitations:

- Overfitting: With only a few examples per class, models can easily overfit the training data, memorizing specific examples rather than learning generalizable patterns.

- Difficulty with Variability: Few-shot learning may struggle with high intra-class variability, because a handful of examples may not capture the full range of variation within a class.

- Complexity: Some few-shot learning techniques, especially those based on meta-learning, can be computationally intensive and challenging to implement effectively.

- Performance Gap: While few-shot learning can achieve impressive results in certain scenarios, there may still be a performance gap compared to traditional supervised learning when sufficient data is available.

- Transferability: The transferability of few-shot learned knowledge to entirely new tasks or domains can be limited, requiring significant adaptation or retraining for different contexts.

Despite these limitations, few-shot learning remains a valuable approach for tasks with limited data and continues to be an active area of research to address these challenges.
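The variability limitation listed above can be made concrete with a small simulation. The sketch below is a toy model, not a benchmark: it assumes a one-dimensional class whose examples are drawn from a Gaussian centered at 0.0, and it measures how far the mean of a few examples lands from that true center. The function name `prototype_error` and the parameters `shots`, `spread`, `trials`, and `seed` are hypothetical.

```python
import random
import statistics


def prototype_error(shots, spread, trials=2000, seed=0):
    """Average distance between a K-example class mean and the true center (0.0)."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same numbers
    errors = []
    for _ in range(trials):
        samples = [rng.gauss(0.0, spread) for _ in range(shots)]
        errors.append(abs(statistics.fmean(samples)))
    return statistics.fmean(errors)


# Compare a low-variability class with a high-variability one
# across different numbers of labeled examples.
for spread in (0.5, 2.0):
    for shots in (1, 5, 25):
        error = prototype_error(shots, spread)
        print(f"spread={spread} shots={shots} error={error:.3f}")
```

In this toy setting the estimate of the class center gets worse as the within-class spread grows and better as more examples are added, which matches the intuition that highly variable classes need more than a few examples.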

Few Shot Learning: Key Takeaways

Few-shot learning addresses the challenge of learning from very limited labeled data. Its applications span domains from computer vision to natural language processing, offering solutions for tasks where data availability is a bottleneck. Its significance lies in its potential to make machine learning more adaptable, more cost-effective, and applicable to a broader range of real-world problems.