Insights for AI Builders

Encord Blog

All articles

Encord Integrates NVIDIA Cosmos Reason and Embed

Agents

Jun 03 2026

The Complete Guide to Data Labeling for Robotics

Data Annotation

May 26 2026

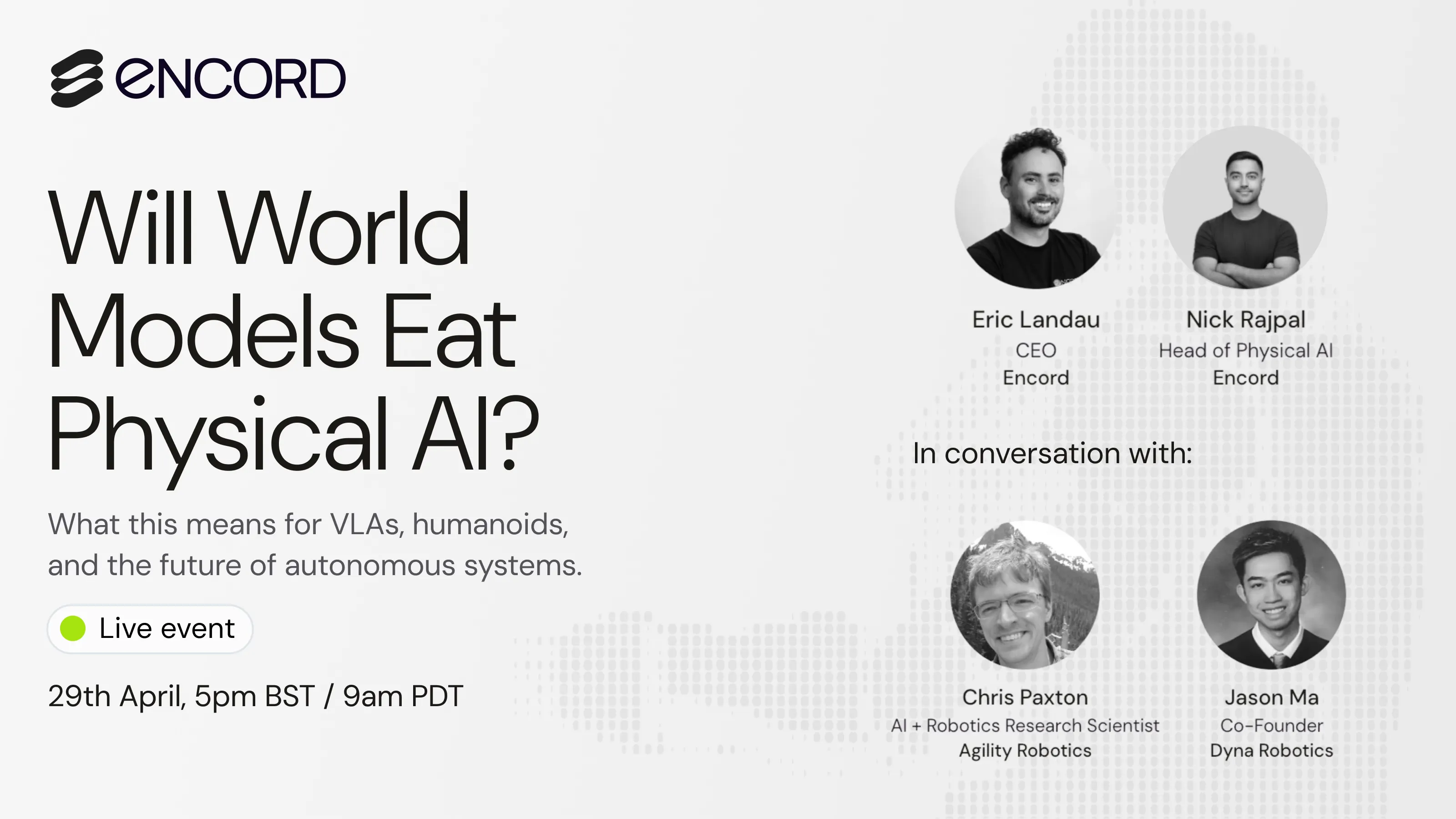

Will World Models Eat Physical AI?: What We Learned from Our Physical AI Panel

Physical AI

Apr 30 2026

What Does It Actually Take to Trust a Robot in Production? Warehouse Robotics Roundtable Recap

Physical AI

Apr 24 2026

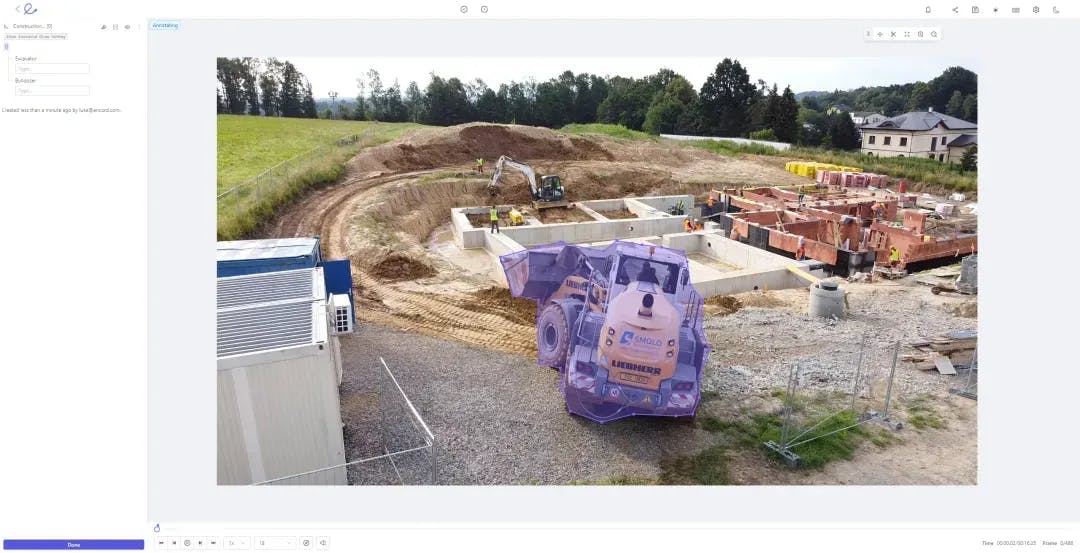

How to Automate Video Annotation for Machine Learning

Computer Vision

Apr 15 2026

The Complete Guide to Data Annotation [2026 Review]

Data Operations

Apr 08 2026

Why High-Stakes AI Lives or Dies on Data Quality: Encord x Mantyx Webinar Recap

Computer Vision

Mar 30 2026

The Full Guide to Video Annotation for Computer Vision

Data Annotation

Mar 19 2026

![[Live Event Recap] Introducing the Encord Agents Catalog: Deploy AI Agents in Minutes](https://images.prismic.io/encord/abqfybbci2UF6L4f_MarchmasterclassResizedforLI.png?auto=format%2Ccompress&fit=max)

[Live Event Recap] Introducing the Encord Agents Catalog: Deploy AI Agents in Minutes

AI Agents

Mar 18 2026

The Complete Guide to Data Curation for Computer Vision

Computer Vision

Mar 10 2026

The Complete Guide to Image Annotation for Computer Vision

Computer Vision

Mar 10 2026

Part 1: Evaluating Foundation Models (CLIP) using Encord Active

Machine Learning

Mar 06 2026