Top 10 Open Source Computer Vision Repositories

Product Manager at Encord

In this article, you will learn about the top 10 open-source Computer Vision repositories on GitHub. We discuss repository formats, their content, key learnings, and proficiency levels the repo caters to.

The goal is to guide researchers, practitioners, and enthusiasts interested in exploring the latest advancements in Computer Vision. You will gain insights into the most influential open-source CV repositories to stay up-to-date with cutting-edge technology and potentially incorporate these resources into your projects.

Readers can expect a comprehensive overview of the top Computer Vision repositories, including detailed descriptions of their features and functionalities.

The article will also highlight key trends and developments in the field, offering valuable insights for those looking to enhance their knowledge and skills in Computer Vision.

Here’s a list of the repositories we’re going to discuss:

- Awesome Computer Vision

- Segment Anything Model (SAM)

- Visual Instruction Tuning (LLaVA)

- LearnOpenCV

- Papers With Code

- Microsoft ComputerVision recipes

- Awesome-Deep-Vision

- Awesome transformer with ComputerVision

- CVPR 2023 Papers with Code

- Face Recognition

Factors to Evaluate a Github Repository’s Health

Before we list the top repositories for Computer Vision (CV), it is essential to understand how to determine a GitHub repository's health. The list below highlights a few factors you should consider to assess a repository’s reliability and sustainability:

- Level of Activity: Assess the frequency of updates by checking the number of commits, issues resolved, and pull requests.

- Contribution: Check the number of developers contributing to the repository. A large number of contributors signifies diverse community support.

- Documentation: Determine documentation quality by checking the availability of detailed readme files, support documents, tutorials, and links to relevant external research papers.

- New Releases: Examine the frequency of new releases. A higher frequency indicates continuous development.

- Responsiveness: Review how often the repository authors respond to issues raised by users. High responsiveness implies that the authors actively monitor the repository to identify and fix problems.

- Stars Received: Stars on GitHub indicate a repository's popularity and credibility within the developer community. Active contributors often attract more stars, showcasing their value and impact.

Top 10 GitHub Repositories for Computer Vision (CV)

Open source repositories play a crucial role in CV by providing a platform for researchers and developers to collaborate, share, and improve upon existing algorithms and models.

These repositories host codebases, datasets, and documentation, making them valuable resources for enthusiasts, developers, engineers, and researchers. Let us delve into the top 10 repositories available on GitHub for use in Computer Vision.

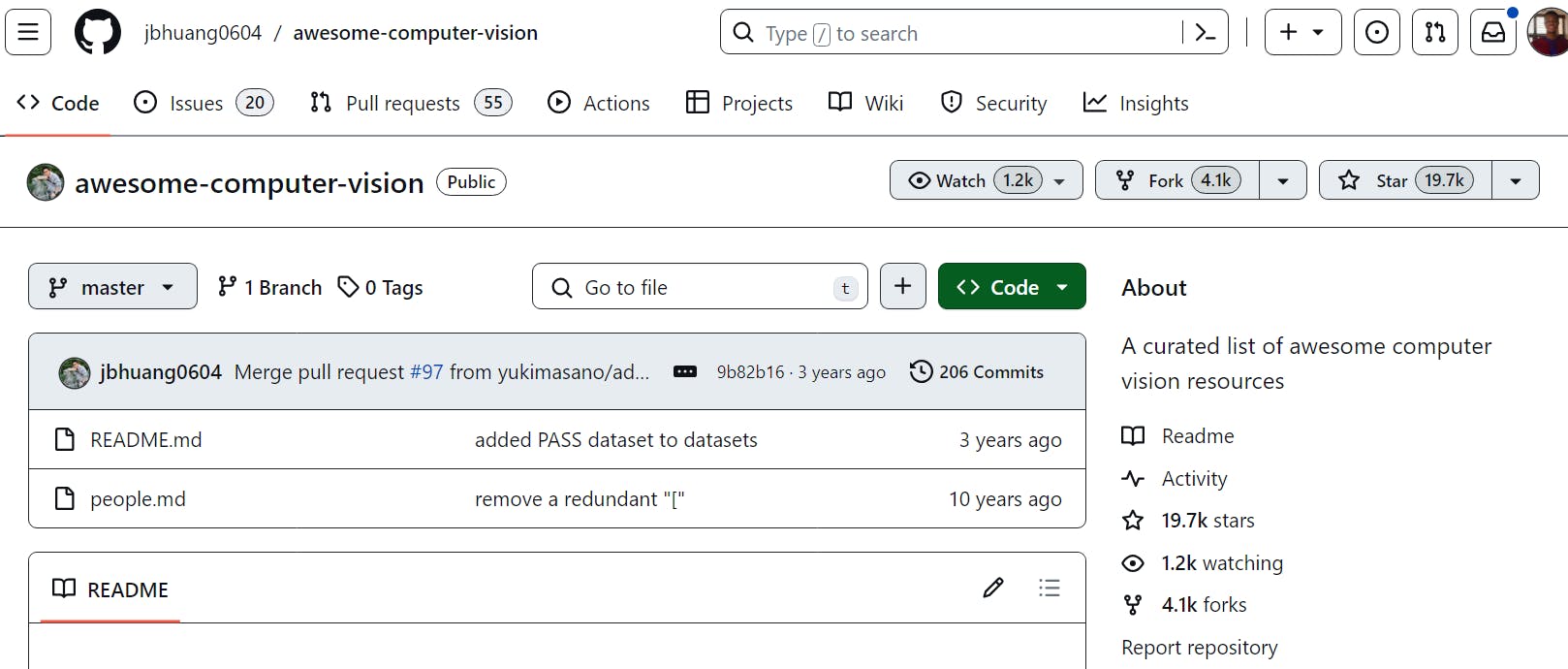

#1 Awesome Computer Vision

The awesome-php project inspired the Awesome Computer Vision repository, which aims to provide a carefully curated list of significant content related to open-source Computer Vision tools.

Awesome Computer Vision Repository

Repository Format

You can expect to find resources on image recognition, object detection, semantic segmentation, and feature extraction. It also includes materials related to specific Computer Vision applications like facial recognition, autonomous vehicles, and medical image analysis.

Repository Contents

The repository is organized into various sections, each focusing on a specific aspect of Computer Vision.

- Books and Courses: Classic Computer Vision textbooks and courses covering foundational principles on object recognition, computational photography, convex optimization, statistical learning, and visual recognition.

- Research Papers and Conferences: This section covers research from conferences published by CVPapers, SIGGRAPH Papers, NIPS papers, and survey papers from Visionbib.

- Tools: It includes annotation tools such as LabelME and specialized libraries for feature detection, semantic segmentation, contour detection, nearest-neighbor search, image captioning, and visual tracking.

- Datasets: PASCAL VOC dataset, Ground Truth Stixel dataset, MPI-Sintel Optical Flow dataset, HOLLYWOOD2 Dataset, UCF Sports Action Data Set, Image Deblurring, etc.

- Pre-trained Models: CV models used to build applications involving license plate detection, fire, face, and mask detectors, among others.

- Blogs: OpenCV, Learn OpenCV, Tombone's Computer Vision Blog, Computer Vision for Dummies, Andrej Karpathy’s blog, Computer Vision Basics with Python Keras, and OpenCV.

Key Learnings

- Visual Computing: Use the repo to understand the core techniques and applications of visual computing across various industries.

- Convex Optimization: Grasp this critical mathematical framework to enhance your algorithmic efficiency and accuracy in CV tasks.

- Simultaneous Localization and Mapping (SLAM): Explore the integration of SLAM in robotics and AR/VR to map and interact with dynamic environments.

- Single-view Spatial Understanding: Learn about deriving 3D insights from 2D imagery to advance AR and spatial analysis applications.

- Efficient Data Searching: Leverage nearest neighbor search for enhanced image categorization and pattern recognition performance.

- Aerial Image Analysis: Apply segmentation techniques to aerial imagery for detailed environmental and urban assessment.

Proficiency Level

- Aimed at individuals with an intermediate to advanced understanding of Computer Vision.

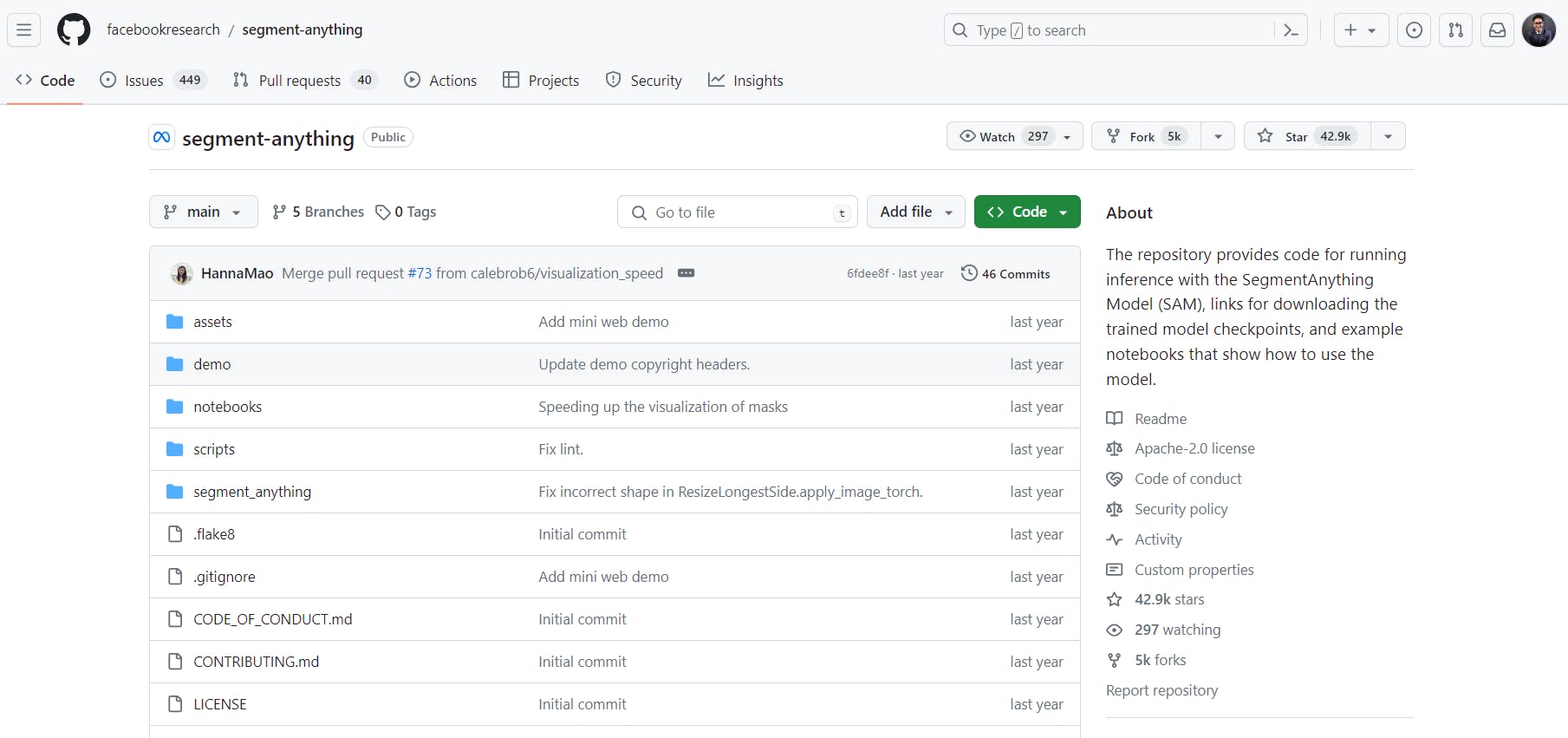

#2 SegmentAnything Model (SAM)

segment-anything is maintained by Meta AI. The Segment Anything Model (SAM) is designed to produce high-quality object masks from input prompts such as points or boxes. Trained on an extensive dataset of 11 million images and 1.1 billion masks, SAM exhibits strong zero-shot performance on various segmentation tasks.

Repository Format

The ReadMe.md file clearly mentions guides for installing these and running the model from prompts. Running SAM from this repo requires Python 3.8 or higher, PyTorch 1.7 or higher, and TorchVision 0.8 or higher.

Repository Content

The segment-anything repository provides code, links, datasets, etc. for running inference with the SegmentAnything Model (SAM). Here’s a concise summary of the content in the segment-anything repository:

This repository provides:

- Code for running inference with SAM.

- Links to download trained model checkpoints.

- Downloadable dataset of images and masks used to train the model.

- Example notebooks demonstrating SAM usage.

- Lightweight mask decoder is exportable to the ONNX format for specialized environments.

Key Learnings

Some of the key learnings one can gain from the segment-anything repository are:

- Understanding Object Segmentation:

Learn about object segmentation techniques and how to generate high-quality masks for objects in images. Explore using input prompts (such as points or boxes) to guide mask generation. - Practical Usage of SAM:

Install and use Segment Anything Model (SAM) for zero-shot segmentation tasks. Explore provided example notebooks to apply SAM to real-world images. - Advanced Techniques:

For more experienced users, explore exporting SAM’s lightweight mask decoder to ONNX format for specialized environments.

Proficiency Level

The Segment Anything Model (SAM) is accessible to users with intermediate to advanced Python, PyTorch, and TorchVision proficiency. Here’s a concise breakdown for users of different proficiency levels:

- Beginner | Install and Run: If you’re new to SAM, follow installation instructions, download a model checkpoint, and use the provided code snippets to generate masks from input prompts or entire images.

- Intermediate | Explore Notebooks: Dive into example notebooks to understand advanced usage, experiment with prompts, and explore SAM’s capabilities.

- Advanced | ONNX Export: For advanced users, consider exporting SAM’s lightweight mask decoder to ONNX format for specialized environments supporting ONNX runtime.

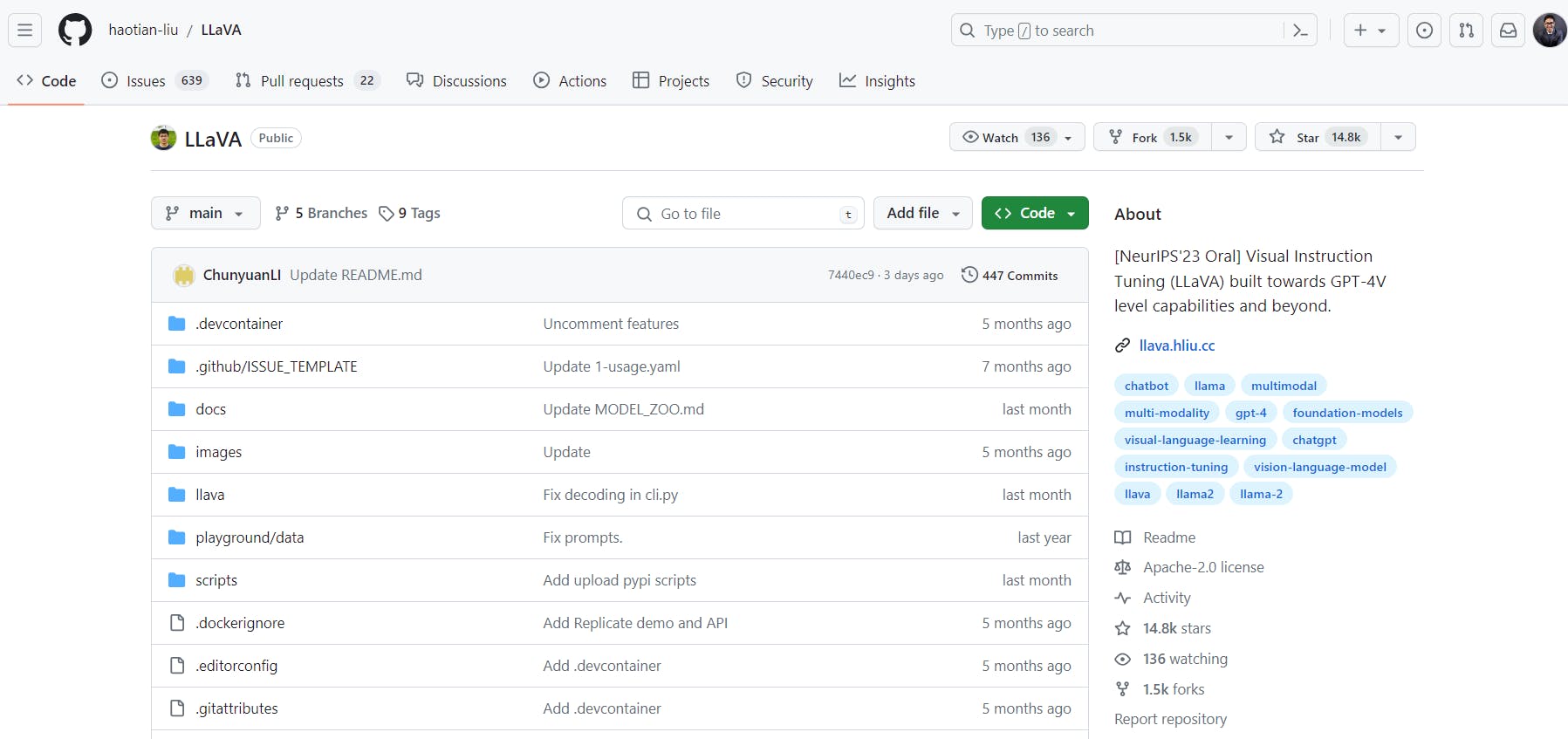

#3 Visual Instruction Tuning (LLaVA) Repository

The LLaVA (Large Language and Vision Assistant) repository, developed by Haotian Liu, focuses on Visual Instruction Tuning. It aims to enhance large language and vision models, reaching capabilities comparable to GPT-4V and beyond.

LLaVA demonstrates impressive multimodal chat abilities, sometimes even exhibiting behaviors similar to multimodal GPT-4 on unseen images and instructions. The project has seen several releases with unique features and applications, including LLaVA-NeXT, LLaVA-Plus, and LLaVA-Interactive.

Visual Instruction Tuning (LLaVA)

Repository Format

The content in the LLaVA repository is primarily Python-based. The repository contains code, models, and other resources related to Visual Instruction Tuning. The Python files (*.py) are used to implement, train, and evaluate the models. Additionally, there may be other formats, such as Markdown for documentation, JSON for configuration files, and text files for logs or instructions.

Repository Content

LLaVA is a project focusing on visual instruction tuning for large language and vision models with GPT-4 level capabilities. The repository contains the following:

- LLaVA-NeXT: The latest release, LLaVA-NeXT (LLaVA-1.6), has additional scaling to LLaVA-1.5 and outperforms Gemini Pro on some benchmarks. It can now process 4x more pixels and perform more tasks/applications.

- LLaVA-Plus: This version of LLaVA can plug and learn to use skills.

- LLaVA-Interactive: This release allows for an all-in-one demo for Image Chat, Segmentation, and Generation.

- LLaVA-1.5: This version of LLaVA achieved state-of-the-art results on 11 benchmarks, with simple modifications to the original LLaVA.

- Reinforcement Learning from Human Feedback (RLHF): LLaVA has been improved with RLHF to improve fact grounding and reduce hallucination.

Key Learnings

The LLaVA repository offers valuable insights in the domain of Visual Instruction Tuning. Some key takeaways include:

- Enhancing Multimodal Models: LLaVA focuses on improving large language and vision models to achieve capabilities comparable to GPT-4V and beyond.

- Impressive Multimodal Chat Abilities: LLaVA demonstrates remarkable performance, even on unseen images and instructions, showcasing its potential for multimodal tasks.

- Release Variants: The project has seen several releases, including LLaVA-NeXT, LLaVA-Plus, and LLaVA-Interactive, each introducing unique features and applications.

Proficiency Level

- Catered towards intermediate and advanced levels Computer Vision engineers building vision-language applications.

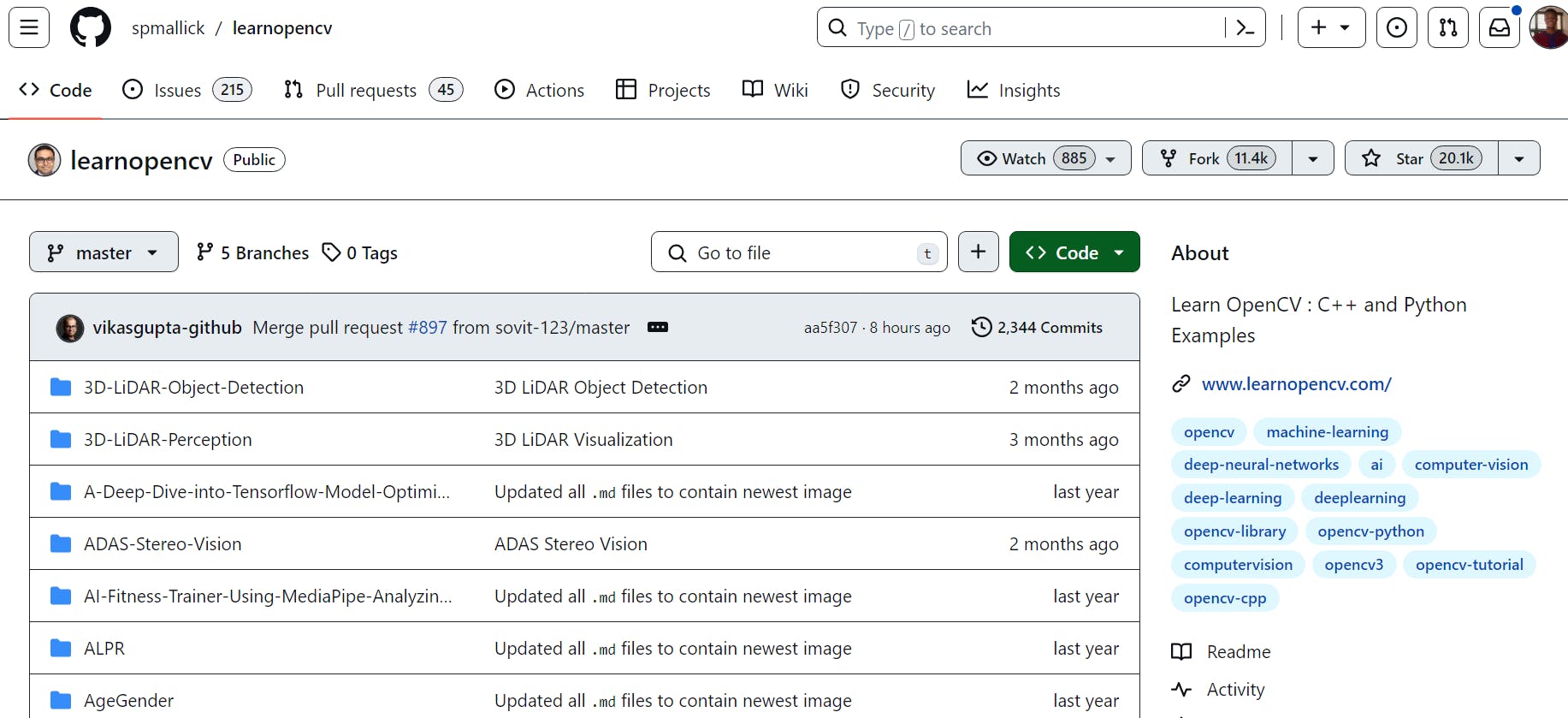

#4 LearnOpenCV

Satya Mallick maintains a repository on GitHub called LearnOpenCV. It contains a collection of C++ and Python codes related to Computer Vision, Deep Learning, and Artificial Intelligence. These codes are examples for articles shared on the LearnOpenCV.com blog.

Resource Format

The resource format of the repository includes code for the articles and blogs. Whether you prefer hands-on coding or reading in-depth explanations, this repository has diverse resources to cater to your learning style.

Repository Contents

This repo contains code for Computer Vision, deep learning, and AI articles shared in OpenCV’s blogs, LearnOpenCV.com. You can choose the format that best suits your learning style and interests.

Here are some popular topics from the LearnOpenCV repository:

- Face Detection and Recognition: Learn how to detect and recognize faces in images and videos using OpenCV and deep learning techniques.

- Object Tracking: Explore methods for tracking objects across video frames, such as using the Mean-Shift algorithm or correlation-based tracking.

- Image Stitching: Discover how to combine multiple images to create panoramic views or mosaics.

- Camera Calibration: Understand camera calibration techniques to correct lens distortion and obtain accurate measurements from images with OpenCV.

- Deep Learning Models: Use pre-trained deep learning models for tasks like image classification, object detection, and semantic segmentation.

- Augmented Reality (AR): Learn to overlay virtual objects onto real-world scenes using techniques such as marker-based AR.

These examples provide practical insights into Computer Vision and AI, making them valuable resources for anyone interested in these fields!

Key Learnings

- Apply OpenCV techniques confidently across varied industry contexts.

- Undertake hands-on projects using OpenCV that solidify your skills and theoretical understanding, preparing you for real-world Computer Vision challenges.

Proficiency Level

This repo caters to a wide audience:

- Beginner: Gain your footing in Computer Vision and AI with introductory blogs and simple projects.

- Intermediate: Elevate your understanding with more complex algorithms and applications.

- Advanced: Challenge yourself with cutting-edge research implementations and in-depth blog posts.

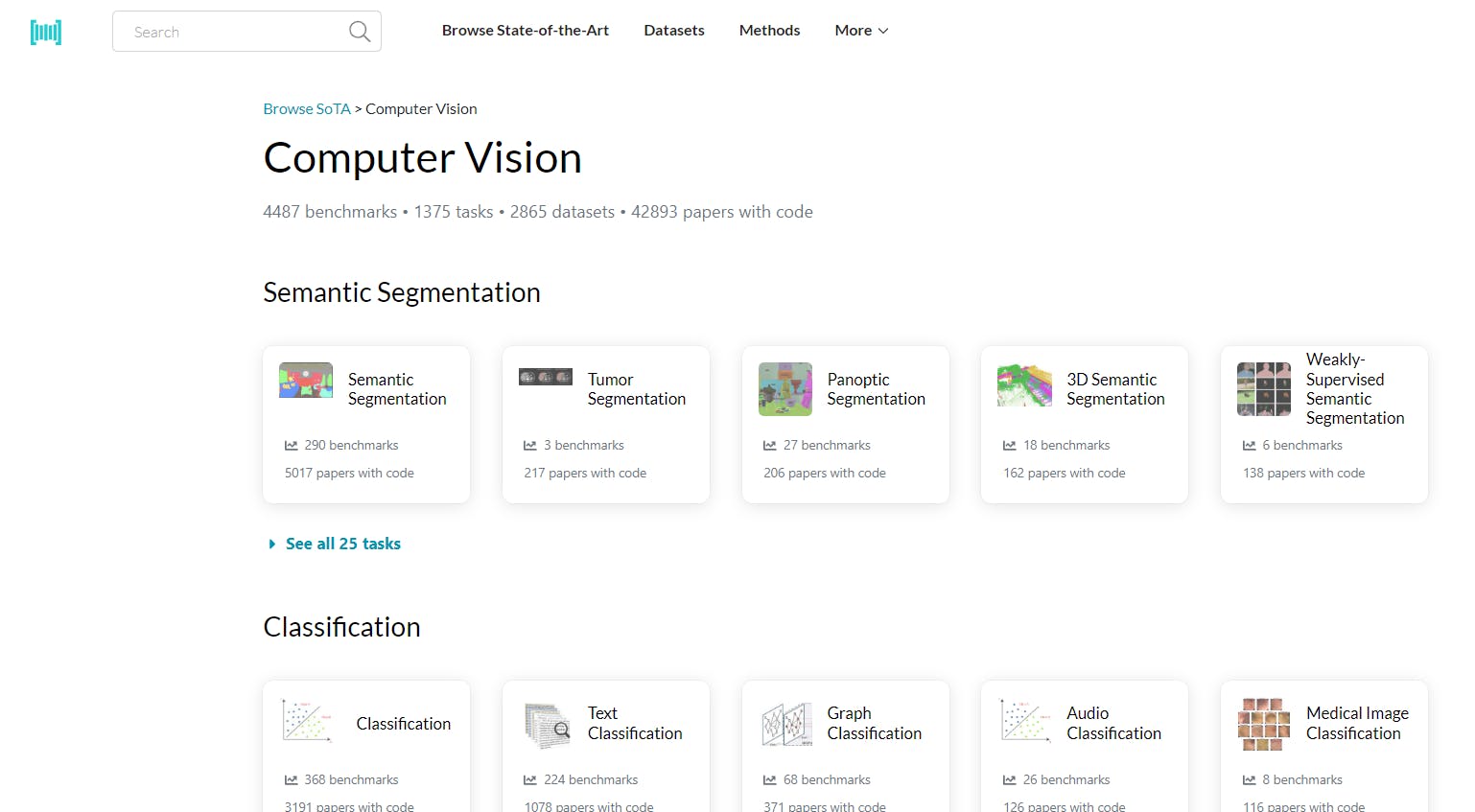

#5 Papers with Code

Researchers from Meta AI are responsible for maintaining Papers with Code as a community project. No data is shared with any Meta Platforms product.

Repository Format

The repository provides a wide range of Computer Vision research papers in various formats, such as:

- ResNet: A powerful convolutional neural network architecture with 2052 papers with code.

- Vision Transformer: Leveraging self-attention mechanisms, this model has 1229 papers with code.

- VGG: The classic VGG architecture boasts 478 papers with code.

- DenseNet: Known for its dense connectivity, it has 385 papers with code.

- VGG-16: A variant of VGG, it appears in 352 papers with code.

Repository Contents

This repository contains Datasets, Research Papers with Codes, Tasks, and all the Computer Vision-related research material on almost every segment and aspect of CV like The contents are segregated in the form of classified lists as follows:

- State-of-the-Art Benchmarks: The repository provides access to a whopping 4,443 benchmarks related to Computer Vision. These benchmarks serve as performance standards for various tasks and models.

- Diverse Tasks: With 1,364 tasks, Papers With Code covers a wide spectrum of Computer Vision challenges. Whether you’re looking for image classification, object tracking, or depth estimation, you'll find it here.

- Rich Dataset Collection: Explore 2,842 datasets curated for Computer Vision research. These datasets fuel advancements in ML and allow researchers to evaluate their models effectively.

- Massive Paper Repository: The platform hosts an impressive collection of 42,212 papers with codes. These papers contribute to cutting-edge research in Computer Vision.

Key Learnings

Here are some key learnings from the Computer Vision on Papers With Code:

- Semantic Segmentation: This task involves segmenting an image into regions corresponding to different object classes. There are 287 benchmarks and 4,977 papers with codes related to semantic segmentation.

- Object Detection: Object detection aims to locate and classify objects within an image. The section covers 333 benchmarks and 3,561 papers with code related to this task.

- Image Classification: Image classification involves assigning a label to an entire image. It features 464 benchmarks and 3,642 papers with code.

- Representation Learning: This area focuses on learning useful representations from data. There are 15 benchmarks and 3,542 papers with code related to representation learning.

- Reinforcement Learning (RL): While not specific to Computer Vision, there is 1 benchmark and 3,826 papers with code related to RL.

- Image Generation: This task involves creating new images. It includes 221 benchmarks and 1,824 papers with code.

These insights provide a glimpse into the diverse research landscape within Computer Vision. Researchers can explore the repository to stay updated on the latest advancements and contribute to the field.

Proficiency Levels

A solid understanding of Computer Vision concepts and familiarity with machine learning and deep learning techniques are essential to make the best use of the Computer Vision section on Papers With Code. Here are the recommended proficiency levels:

- Intermediate: Proficient in Python, understanding of neural networks, can read research papers, and explore datasets.

- Advanced: Strong programming skills, deep knowledge, ability to contribute to research, and ability to stay updated.

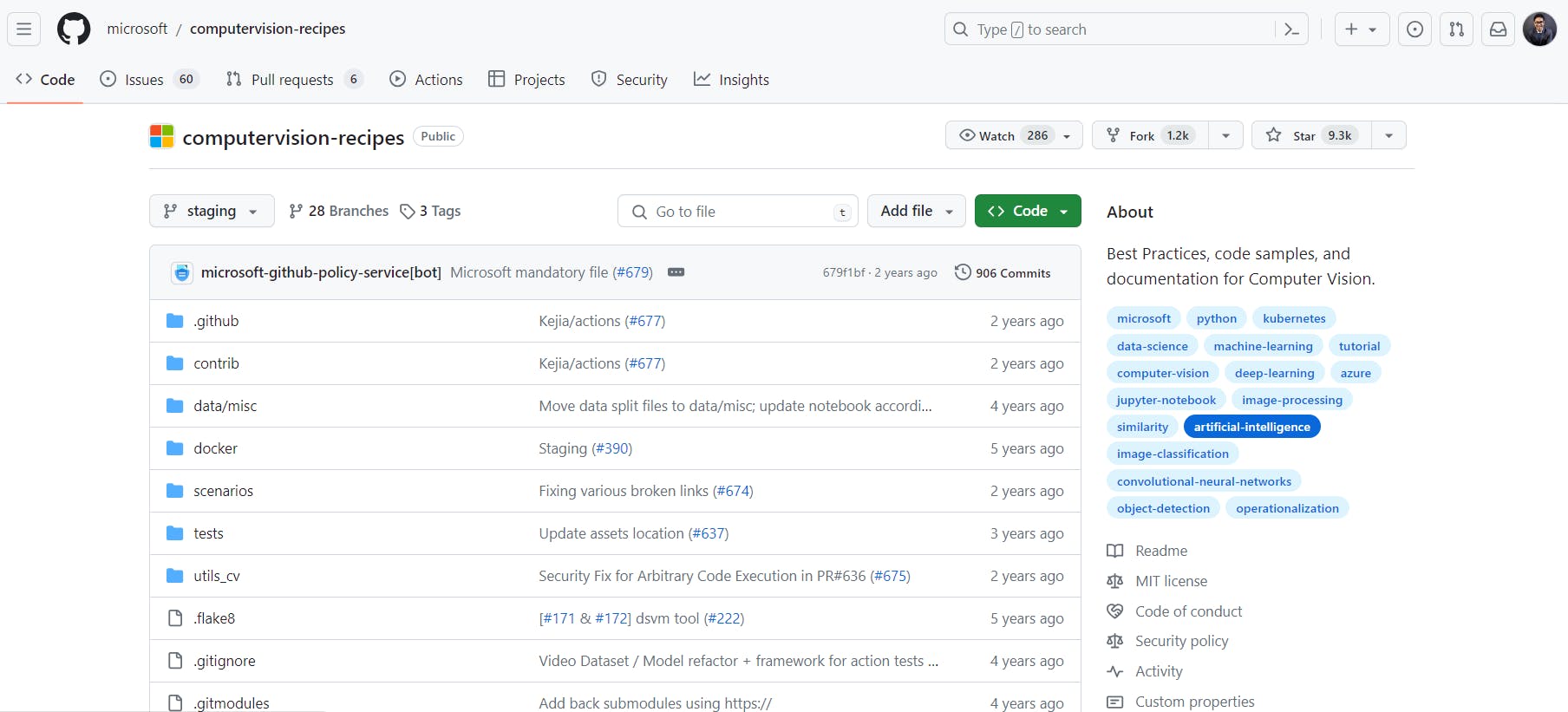

#6 Microsoft / ComputerVision-Recipes

The Microsoft GitHub organization hosts various open-source projects and samples across various domains. Among the many repositories hosted by Microsoft, the Computer Vision Recipes repository is a valuable resource for developers and enthusiasts interested in using Computer Vision technologies.

Repository Format

One key strength of Microsoft’s Computer Vision Recipes repository is its focus on simplicity and usability. The recipes are well-documented and include detailed explanations, code snippets, and sample outputs.

- Languages: The recipes are a range of programming languages, primarily Python (with some Jupyter Notebook examples), C#, C++, TypeScript, and JavaScript so that developers can use the language of their choice.

- Operating Systems: Additionally, the recipes are compatible with various operating systems, including Windows, Linux, and macOS.

Repository Content

- Guidelines: The repository includes guidelines and recommendations for implementing Computer Vision solutions effectively.

- Code Samples: You’ll find practical code snippets and examples covering a wide range of Computer Vision tasks.

- Documentation: Detailed explanations, tutorials, and documentation accompany the code samples.

- Supported Scenarios:

- Image Tagging: Assigning relevant tags to images.

- Face Recognition: Identifying and verifying faces in images.

- OCR (Optical Character Recognition): Extracting text from images.

- Video Analytics: Analyzing videos for objects, motion, and events.

- Highlights| Multi-Object Tracking:

Added state-of-the-art support for multi-object tracking based on the FairMOT approach described in the 2020 paper “A Simple Baseline for Multi-Object Tracking." .

Key Learnings

The Computer Vision Recipes repository from Microsoft offers valuable insights and practical knowledge in computer vision. Here are some key learnings you can expect:

- Best Practices: The repository provides examples and guidelines for building computer vision systems using best practices. You’ll learn about efficient data preprocessing, model selection, and evaluation techniques.

- Task-Specific Implementations: This section covers a variety of computer vision tasks, such as image classification, object detection, and image similarity. By studying these implementations, you’ll better understand how to approach real-world vision problems.

- Deep Learning with PyTorch: The recipes leverage PyTorch, a popular deep learning library. You’ll learn how to create and train neural networks for vision tasks and explore architectures and techniques specific to computer vision.

Proficiency Level

The Computer Vision Recipes repository caters to a wide range of proficiency levels, from beginners to experienced practitioners. Whether you’re just starting in computer vision or looking to enhance your existing knowledge, this repository provides practical examples and insights that can benefit anyone interested in building robust computer vision systems.

#7 Awesome-Deep-Vision

The Awesome Deep Vision repository, curated by Jiwon Kim, Heesoo Myeong, Myungsub Choi, Jung Kwon Lee, and Taeksoo Kim, is a comprehensive collection of deep learning resources designed specifically for Computer Vision.

This repository offers a well-organized collection of research papers, frameworks, tutorials, and other useful materials relating to Computer Vision and deep learning.

Awesome-Deep-Vision Repository

Repository Format

The Awesome Deep Vision repository organizes its resources in a curated list format. The list includes various categories related to Computer Vision and deep learning, such as research papers, courses, books, videos, software, frameworks, applications, tutorials, and blogs. The repository is a valuable resource for anyone interested in advancing their knowledge in this field.

Repository Content

Here’s a closer look at the content and their sub-sections of the Awesome Deep Vision repository:

- Papers: This section includes seminal research papers related to Computer Vision. Notable topics covered include:

- ImageNet Classification: Papers like Alex Krizhevsky, Ilya Sutskever, and Geoffrey E. Hinton’s work on image classification using deep convolutional neural networks.

- Object Detection: Research on real-time object detection, including Faster R-CNN and PVANET.

- Low-Level Vision: Papers on edge detection, semantic segmentation, and visual attention.

- Other resources are Computer Vision course lists, books, video lectures, frameworks, applications, tutorials, and insightful blog posts.

Key Learnings

The Awesome Deep Vision repository offers several valuable learnings for those interested in Computer Vision and deep learning:

- Stay Updated: The repository provides a curated list of research papers, frameworks, and tutorials. By exploring these resources, you can stay informed about the latest advancements in Computer Vision.

- Explore Frameworks: Discover various deep learning frameworks and libraries. Understanding their features and capabilities can enhance your ability to work with Computer Vision models.

- Learn from Research Papers: Dive into research papers related to Computer Vision. These papers often introduce novel techniques, architectures, and approaches. Studying them can broaden your knowledge and inspire your work.

- Community Collaboration: The repository is a collaborative effort by multiple contributors. Engaging with the community and sharing insights can lead to valuable discussions and learning opportunities.

While the repository doesn’t directly provide model implementations, it is a valuable reference point for anyone passionate about advancing their Computer Vision and deep learning skills.

Proficiency Level

The proficiency levels that this repository caters to are:

- Intermediate: Proficiency in Python programming and awareness of deep learning frameworks.

- Advanced: In-depth knowledge of CV principles, mastery of frameworks, and ability to contribute to the community.

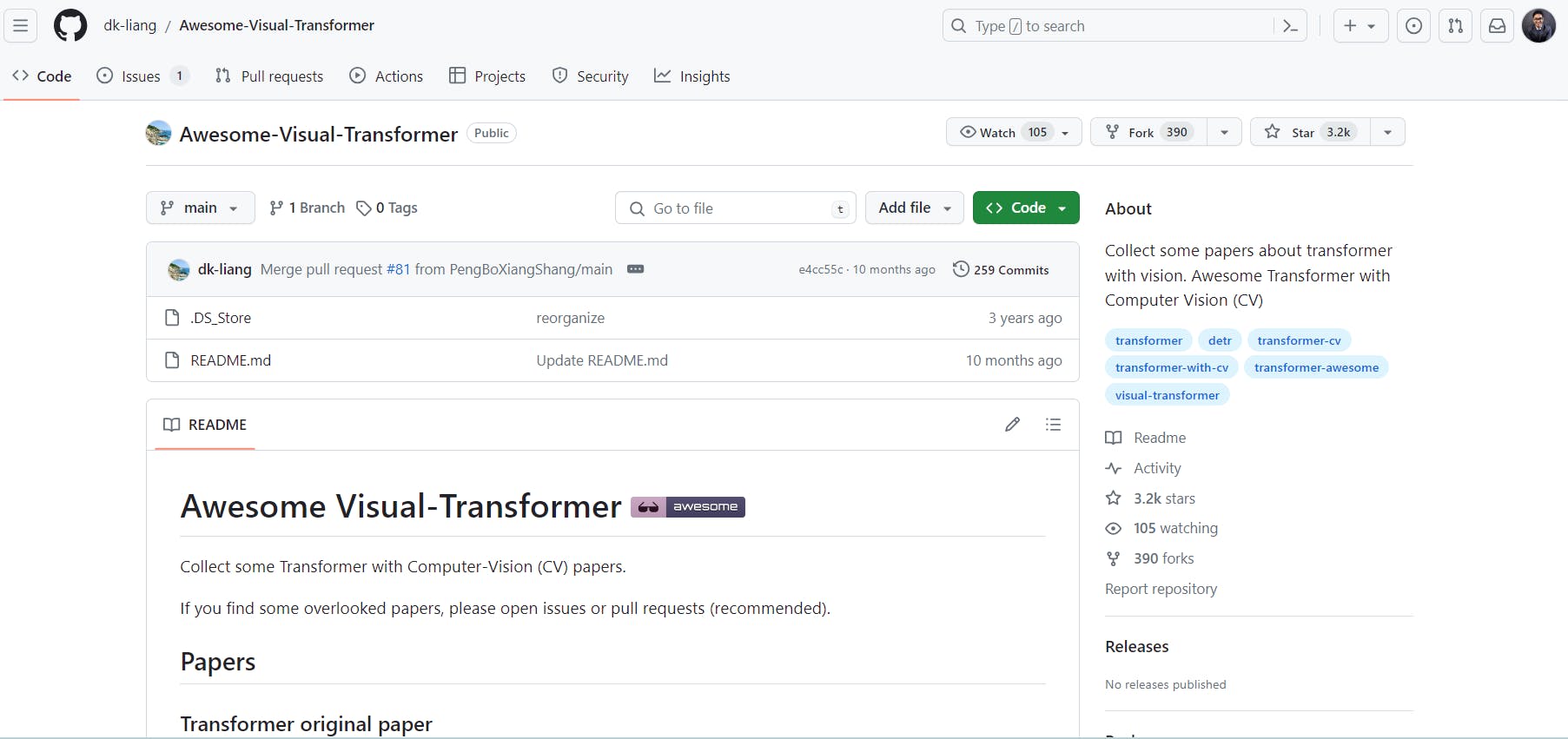

#8 Awesome Transformer with Computer Vision (CV)

The Awesome Visual Transformer repository is a curated collection of articles and resources on transformer models in Computer Vision (CV), maintained by dk-liang.

The repository is a valuable resource for anyone interested in the intersection of visual transformers and Computer Vision (CV).

Awesome-visual-transformer Repository

Repository Format

This repository (Awesome Transformer with Computer Vision (CV)) is a collection of research papers about transformers with vision. It contains surveys, arXiv papers, papers with codes on CVPR, and papers on many other subjects related to Computer Vision. It does not contain any coding.

Repository Content

This is a valuable resource for anyone interested in transformer models within the context of Computer Vision (CV). Here’s a brief overview of its content:

- Papers: The repository collects research papers related to visual transformers. Notable papers include:

- “Transformers in Vision”: A technical blog discussing vision transformers.

- “Multimodal learning with transformers: A survey”: An IEEE TPAMI paper.

- ArXiv Papers: The repository includes various arXiv papers, such as:

- “Understanding Gaussian Attention Bias of Vision Transformers”

- “TAG: Boosting Text-VQA via Text-aware Visual Question-answer Generation”

- Transformer for Classification:

- Visual Transformer Stand-Alone Self-Attention in Vision Models: Designed for image recognition, by Ramachandran et al. in 2019.

- Transformers for Image Recognition at Scale: Dosovitskiy et al. explore transformers for large-scale image recognition in 2021. - Other Topics: The repository covers task-aware active learning, robustness against adversarial attacks, and person re-identification using locally aware transformers.

Key Learnings

Here are some key learnings from the Awesome Visual Transformer repository:

- Understanding Visual Transformers: The repository provides a comprehensive overview of visual transformers, including their architecture, attention mechanisms, and applications in Computer Vision. You’ll learn how transformers differ from traditional convolutional neural networks (CNNs) and their advantages.

- Research Papers and Surveys: Explore curated research papers and surveys on visual transformers. These cover topics like self-attention, positional encodings, and transformer-based models for image classification, object detection, and segmentation.

- Practical Implementations: The repository includes practical implementations of visual transformers. Studying these code examples will give you insights into how to build and fine-tune transformer-based models for specific vision tasks.

Proficiency Level

- Aimed at Computer Vision researchers and engineers with a practical understanding of the foundational concepts of transformers.

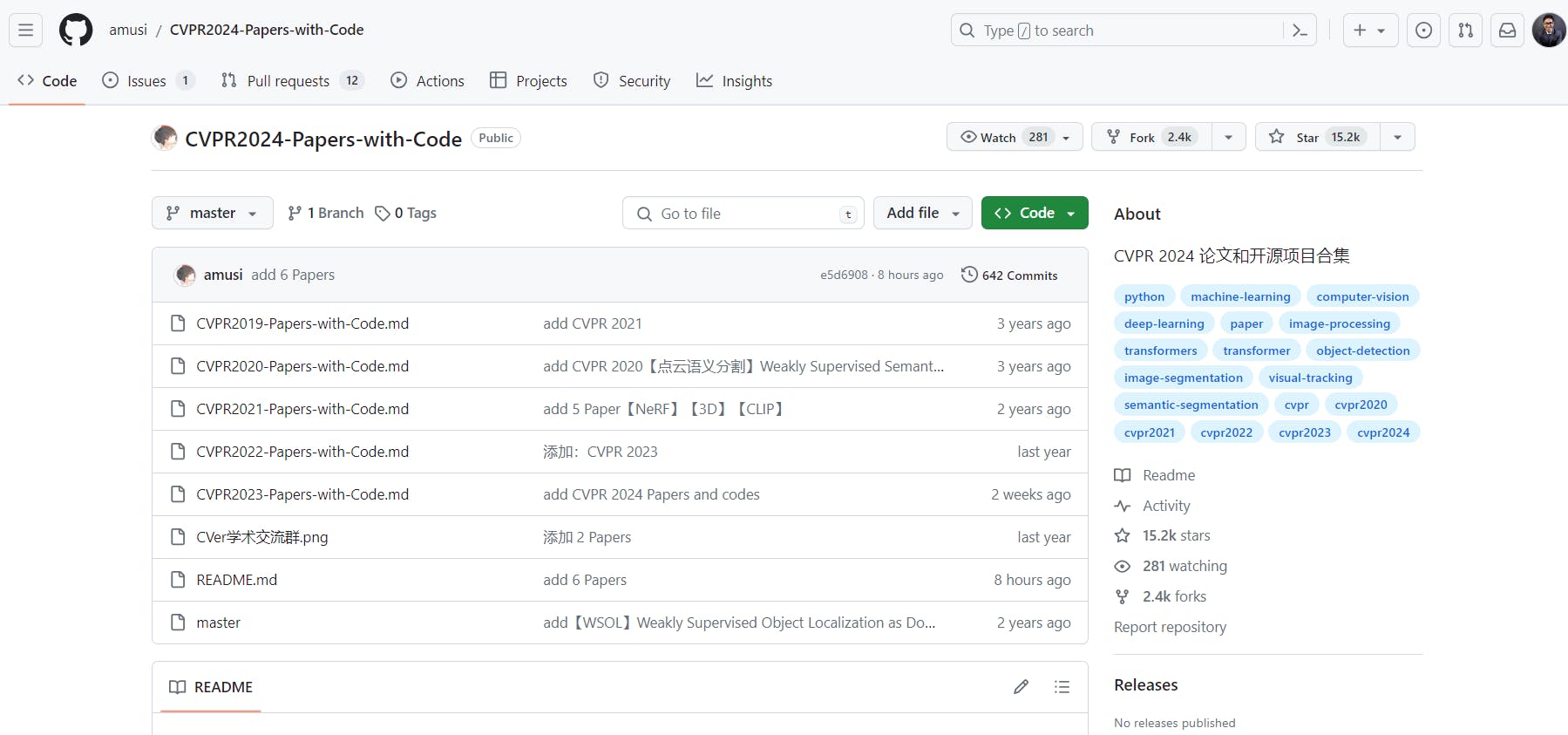

#9 Papers-with-Code: CVPR 2023 Repository

The CVPR2024-Papers-with-Code repository, maintained by Amusi, is a comprehensive collection of research papers and associated open-source projects related to Computer Vision. It covers many topics, including machine learning, deep learning, image processing, and specific areas like object detection, image segmentation, and visual tracking.

CVPR2024 Papers with Code Repository

Repository Format

The repository is an extensive collection of research papers and relevant codes organized according to different topics, including machine learning, deep learning, image processing, and specific areas like object detection, image segmentation, and visual tracking.

Repository Content

- CVPR 2023 Papers: The repository contains a collection of papers presented at the CVPR 2023 conference. This year (2023), the conference received a record 9,155 submissions, a 12% increase over CVPR 2022, and accepted 2,360 papers for a 25.78% acceptance rate.

- Open-Source Projects: Along with the papers, the repository also includes links to the corresponding open-source projects.

- Organized by Topics: The papers and projects in the repository are organized by various topics such as Backbone, CLIP, MAE, GAN, OCR, Diffusion Models, Vision Transformer, Vision-Language, Self-supervised Learning, Data Augmentation, Object Detection, Visual Tracking, and numerous other related topics.

- Past Conferences: The repository also contains links to papers and projects from past CVPR conferences.

Key Learnings

Here are some key takeaways from the repository:

- Cutting-Edge Research: The repository provides access to the latest research papers presented at CVPR 2024. Researchers can explore novel techniques, algorithms, and approaches in Computer Vision.

- Practical Implementations: The associated open-source code allows practitioners to experiment with and implement state-of-the-art methods alongside research papers. This practical aspect bridges the gap between theory and application.

- Diverse Topics: The repository covers many topics, including machine learning, deep learning, image processing, and specific areas like object detection, image segmentation, and visual tracking. This diversity enables users to delve into various aspects of Computer Vision.

In short, the repository is a valuable resource for staying informed about advancements in Computer Vision and gaining theoretical knowledge and practical skills.

Proficiency Level

- While beginners may find the content challenging, readers with a solid foundation in Computer Vision can benefit significantly from this repository's theoretical insights and practical implementations.

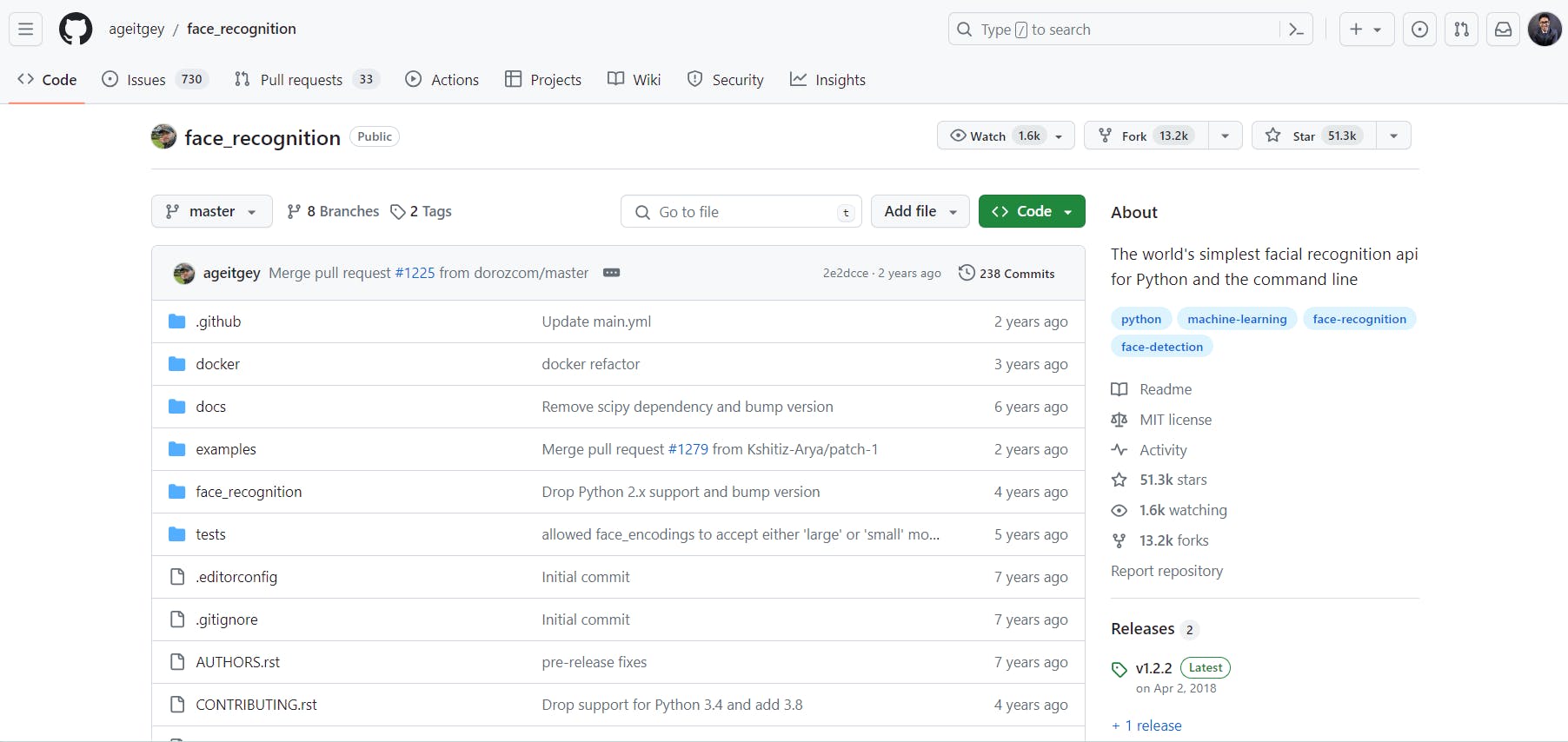

#10 Face Recognition

This repository on GitHub provides a simple and powerful facial recognition API for Python. It lets you recognize and manipulate faces from Python code or the command line.

Built using dlib’s state-of-the-art face recognition, this library achieves an impressive 99.38% accuracy on the Labeled Faces in the Wild benchmark.

Repository Format

The content of the face_recognition repository on GitHub is primarily in Python. It provides a simple and powerful facial recognition API that allows you to recognize and manipulate faces from Python code or the command line. You can use this library to find faces in pictures, identify facial features, and even perform real-time face recognition with other Python libraries.

Repository Content

Here’s a concise list of the content within the face_recognition repository:

- Python Code Files:

The repository contains Python code files that implement various facial recognition functionalities. These files include functions for finding faces in pictures, manipulating facial features, and performing face identification. - Example Snippets:

The repository provides example code snippets demonstrating how to use the library. These snippets cover tasks such as locating faces in images and comparing face encodings. - Dependencies:

The library relies on the dlib library for its deep learning-based face recognition. To use this library, you need to have Python 3.3+ (or Python 2.7), macOS or Linux, and dlib with Python bindings installed.

Key Learnings

Some of the key learnings from the face_recognition repository are:

- Facial Recognition in Python:

It provides functions for locating faces in images, manipulating facial features, and identifying individuals. - Deep Learning with dlib:

You can benefit from the state-of-the-art face recognition model within dlib. - Real-World Applications:

By exploring the code and examples, you can understand how facial recognition can be applied in real-world scenarios. Applications include security, user authentication, and personalized experiences. - Practical Usage:

The repository offers practical code snippets that you can integrate into your projects. It’s a valuable resource for anyone interested in using facial data in Python.

Proficiency Level

- Caters to users with a moderate-to-advanced proficiency level in Python. It provides practical tools and examples for facial recognition, making it suitable for those who are comfortable with Python programming and want to explore face-related tasks.

Key Takeaways

Open-source Computer Vision tools and resources greatly benefit researchers and developers in the CV field. The contributions from these repositories advance Computer Vision knowledge and capabilities.

Here are the highlights of this article:

- Benefits of Code, Research Papers, and Applications: Code, research papers, and applications are important sources of knowledge and understanding. Code provides instructions for computers and devices, research papers offer insights and analysis, and applications are practical tools that users interact with.

- Wide Range of Topics: Computer Vision encompasses various tasks related to understanding and interpreting visual information, including image classification, object detection, facial recognition, and semantic segmentation. It finds applications in image search, self-driving cars, medical diagnosis, and other fields.

Frequently asked questions

Some good open source libraries for Computer Vision include OpenCV, Awesome Computer Vision, LearnOpenCV, Papers with Code, Microsoft CV Recipes on GitHub, Visual Transformer, Segment Anything by Facebook on GitHub, and many more. These libraries offer a wide range of tools and resources for developing Computer Vision applications and conducting research in the field. Additionally, exploring GitHub repositories dedicated to Computer Vision projects can also provide valuable insights and resources for developers looking to contribute to the community.

Yes, several free data repositories are available for Computer Vision and image understanding projects, such as ImageNet, COCO, and CIFAR-10. These repositories provide large datasets that can be used for training and testing Computer Vision algorithms and models. Platforms like Kaggle also offer access to various datasets and competitions related to Computer Vision tasks.

One way to quickly search for classic papers in your area of interest is to use academic search engines like Google Scholar or IEEE Xplore. These platforms allow you to filter search results by relevance, publication date, and citations, making finding influential papers in the field easier. Additionally, joining online communities or forums related to Computer Vision and image understanding can also help you discover recommended readings and resources from experts in the field.

OpenCV is a popular project on GitHub for image processing and Computer Vision. It offers a wide range of tools and libraries for various applications. Another interesting project is TensorFlow's Object Detection API, which provides pre-trained models for object detection tasks in images and videos.

Encord provides a comprehensive annotation platform that can enhance home security technologies by enabling accurate object detection and alert systems. This integration helps improve functionalities like package notifications and property outlines, ensuring homeowners are informed about activities on their property.

Encord provides robust project management capabilities that facilitate data sharing, metadata handling, and collaborative workflows. With features designed to support annotation tasks, users can efficiently manage their projects and reduce duplication of efforts, ensuring a seamless integration of data pipelines.

Encord specializes in data operations, providing tools for machine learning and computer vision teams to manage their annotation and validation workflows effectively. The platform supports human-curated and annotated data processes, allowing teams to optimize their pipelines for better efficiency.

Encord offers a robust platform designed to streamline the management of computer vision pipelines. Key features include user management, collaboration tools, and the ability to curate and annotate datasets efficiently, making it easier for teams to work together and enhance their workflows.

Encord offers a robust suite of annotation tools specifically designed for computer vision tasks, such as image classification and object detection. These tools streamline the annotation process, allowing teams to efficiently manage their datasets and ensure high-quality annotations.

Encord offers robust tools specifically designed for computer vision tasks, enabling users to efficiently annotate and manage large datasets. With features tailored for various annotation types, Encord enhances the productivity and accuracy of machine learning workflows.

Encord allows users to leverage open-source models for object detection, making it easier to fine-tune models with minimal data. The platform offers the flexibility to adapt these models for specific applications, enhancing their performance in real-world scenarios.

Encord is designed to cater to computer vision teams by offering advanced features for image and video annotation. This includes automated tools for object detection, segmentation, and tracking, allowing teams to efficiently prepare data for training models.

Encord can help scale computer vision projects by integrating with enhanced compute resources, such as GPUs, which are essential for processing larger datasets and running more complex models efficiently.