Why High-Stakes AI Lives or Dies on Data Quality: Encord x Mantyx Webinar Recap

Technical Writer at Encord

If you work in surgical AI, autonomous driving, or physical AI, you know data is no longer an afterthought.

Encord's Diarmuid (Customer Engineering Lead) sat down with Thibault Sys, Surgical Data Science Engineer at Mantyx, a spin-off of Orsi Academy, one of the world's largest robotic surgery training and innovation centers, to dig into what it actually takes to deploy AI in environments where failure is not an option.

They also covered cross-industry lessons that apply not only to surgical robotics but also to autonomous vehicles and defence, and why the right training data is critical in each case.

The Context: What Mantyx Does and Why It Matters

Mantyx was born out of Orsi Academy's innovation department (Orsi Innotech), which has been building AI models for surgical contexts for years. The result is a surgical co-pilot, an AI system designed to assist during live surgical procedures. Thibault is responsible for all of the annotation processes that make those models possible. That means he lives and breathes data pipelines, and he knows the catastrophic consequences of getting them wrong. As Thibault put it: "In logistics settings, if a box is placed somewhere in an incorrect spot, the consequences are limited. But if you start annotating every vein as a urethra... then you want your data to be very well annotated."

This is exactly the kind of customer Encord exists for.

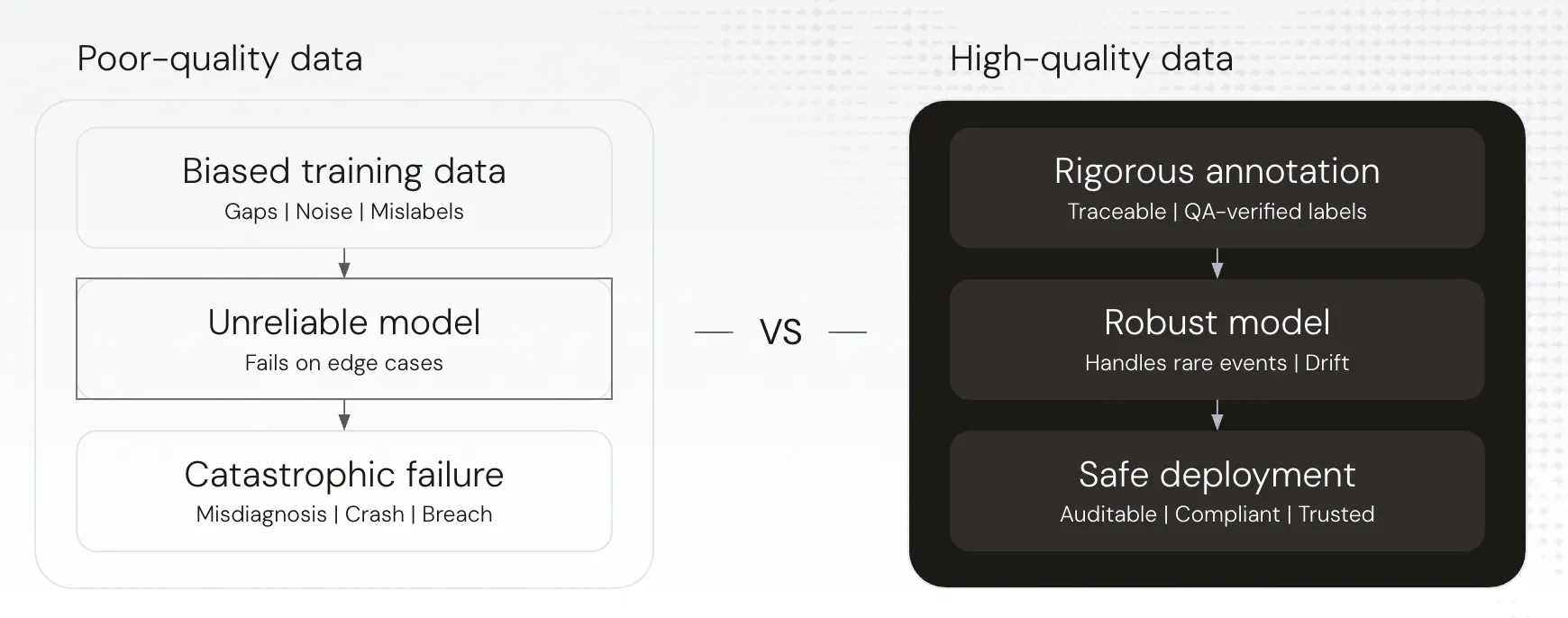

The Problem: Data Quality Is the Moat Nobody Talks About

To home in on the point that data is critical in high-stakes deployment, Diarmuid gave the following example: in the early days, autonomous driving companies tried to train their models on synthetic environments (GTA-style simulated worlds). The thinking was logical: synthetic data is cheaper, faster, and safer to collect. What happened? Failure in production. The synthetic data just wasn't representative of the real world, and when the models hit edge cases like blizzards, blackouts, and lane reversals, they fell apart.


If you're operating autonomous vehicles primarily in California or the Southwest US and you suddenly encounter Boston in February, your model has never seen two-foot snow banks. The vehicles don't know how to respond.

What does Encord let you do about this? Load all of your fleet data, run a similarity or natural language search query to surface every scenario involving snow or blizzard-like conditions, send that data directly to annotation, and re-train. The next time a blizzard hits? You're ready.
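
The curation step above (search the fleet data for snow-like scenes, then route the hits to annotation) can be sketched in a few lines. This is a minimal illustration of embedding-based similarity search in general, not Encord's API: the frame IDs, the toy three-dimensional embeddings, and the `top_k_similar` helper are all hypothetical, and a real system would use embeddings produced by a vision or vision-language model.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k_similar(query, embeddings, k):
    """Return the k frame IDs whose embeddings are closest to the query."""
    scored = [(cosine_similarity(query, vec), frame_id)
              for frame_id, vec in embeddings.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [frame_id for _, frame_id in scored[:k]]


# Hypothetical frames with toy embeddings (assumption: real ones come from a model).
frame_embeddings = {
    "sunny_highway": [0.9, 0.1, 0.0],
    "snow_bank_street": [0.1, 0.9, 0.2],
    "desert_road": [0.8, 0.0, 0.3],
    "blizzard_junction": [0.0, 1.0, 0.1],
}

# Stand-in for the embedding of a text query such as "snow or blizzard conditions".
snow_query = [0.0, 1.0, 0.0]

hits = top_k_similar(snow_query, frame_embeddings, k=2)
print(hits)  # frames to send to annotation
```

In this toy setup the two snow-related frames rank highest. The point is the shape of the workflow: embed, rank by similarity, and hand the top results to annotators.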

Thibault offered the surgical equivalent. A researcher he met at a conference had trained a surgical phase-recognition model with very high performance metrics. When Thibault asked him to run inference on Mantyx's videos, it failed completely.

The reason? The training data had been built entirely on right-handed surgeons. Mantyx had video of left-handed surgeons. The instruments were on the opposite sides. The model had never seen that, and it completely broke down. "Your data distribution has to be very, very broad," Thibault said. "That is the conclusion."

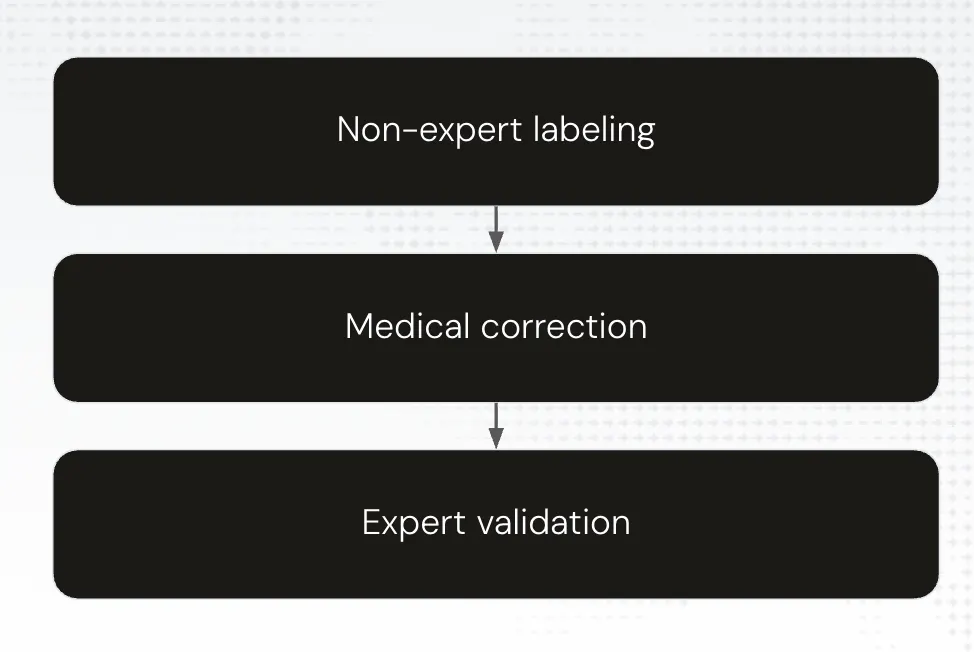

Mantyx's Multi-Stage Validation Pipeline

The real meat is in the way Mantyx has architected their annotation pipeline using Encord.

Stage 1: Initial Annotation (Third-Party Annotators)

The first pass is done by annotators who may have little to no medical background. They're tasked with drawing contours around structures in surgical video: outlining organs like the liver, identifying instruments, and creating the first-pass masks. Historically, this was the most time-intensive stage because every contour had to be drawn manually.

Encord has changed this significantly. The integration of SAM 2 (Meta's Segment Anything Model 2) into the platform, and of SAM 3 within a single day of its release, means annotators can now prompt the system rather than manually trace every structure. The model suggests contours; annotators guide and correct. What used to require hours of manual contouring is now dramatically accelerated.

And then there's the Encord Agent Catalog. The agent catalog is a marketplace of pre-built, pre-configured AI agents deployable directly within Encord. For Mantyx, this means being able to further automate first-stage annotation, where the system proactively suggests or automates annotations rather than waiting for a human to prompt it.

"As a result of this feature that was very quickly implemented," Thibault noted, "we were able to significantly reduce the time spent on the first stage, the labor-intensive stage of the validation pipeline."

Stage 2: Medical Correction

Annotations from stage one are reviewed by individuals with genuine medical knowledge, such as medical students, residents, and fellows. They correct and refine the first-pass annotations, and they provide feedback that gets sent back to the stage-one annotators. This is a live feedback loop, not a one-way street. Encord allows annotations, or specific images or videos, to be sent back with structured feedback. Stage one learns from stage two. Over time, this continuous learning improves both the speed and the quality of initial annotations.

Stage 3: Expert Validation

The final stage is the surgeons. They provide the definitive review before any annotation is marked complete. Every single annotation has at least three sets of eyes on it before it's ingested into a model. This is the non-negotiable part of any high-stakes deployment: the human expert has the final word, and that won't change for the foreseeable future.

The pipeline ensures that annotation quality compounds over time, not just because experts catch errors, but because the feedback loops actively improve earlier-stage annotators and because the data distribution gets broader with every edge case surfaced.
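
To make the three stages and the feedback loop concrete, here is a minimal sketch of the workflow as a small state machine. It is an illustration under stated assumptions, not Mantyx's implementation or Encord's workflow API: the stage names, the `AnnotationTask` class, and the reviewer IDs are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical stage names mirroring the three stages described above.
STAGES = ["initial_annotation", "medical_correction", "expert_validation"]


@dataclass
class AnnotationTask:
    task_id: str
    stage_index: int = 0
    reviewers: list = field(default_factory=list)
    feedback: list = field(default_factory=list)

    @property
    def stage(self):
        if self.stage_index < len(STAGES):
            return STAGES[self.stage_index]
        return "complete"


def approve(task, reviewer):
    """Record who signed off and advance the task to the next stage."""
    if task.stage == "complete":
        raise ValueError("task is already complete")
    task.reviewers.append(reviewer)
    task.stage_index += 1


def send_back(task, reviewer, note):
    """Return the task to stage one with structured feedback attached."""
    task.feedback.append({"from": reviewer, "stage": task.stage, "note": note})
    task.stage_index = 0


def ready_for_training(task):
    """Complete, and seen by at least three distinct people."""
    return task.stage == "complete" and len(set(task.reviewers)) >= 3


task = AnnotationTask("video_001_frame_120")
approve(task, "annotator_a")                       # stage 1 done
send_back(task, "resident_b", "Contour includes the instrument shaft")
approve(task, "annotator_a")                       # stage 1 redone with feedback
approve(task, "resident_b")                        # stage 2 done
approve(task, "surgeon_c")                         # stage 3 done
print(task.stage, ready_for_training(task), len(task.feedback))
```

The design choice worth noting is that `send_back` stores the reviewer's note on the task itself, so the stage-one annotator sees why the work was returned. That is what turns review into a learning loop rather than a simple gate.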

Cross-Industry Lessons: Why Surgical AI, Autonomous Driving, and Physical AI Have More in Common Than You Think

There is a great deal of overlap between these seemingly distinct domains. Diarmuid walked through the autonomous driving case, specifically the challenge of expanding from left-hand-drive markets (US, continental Europe) to right-hand-drive markets (UK). The inward-facing cabin cameras, trained to expect distracted driving behaviour in the centre console area, suddenly miss the same behaviour happening next to the window. A human immediately knows that's distracted driving. The model doesn't, because the spatial distribution of the behaviour has shifted.

The surgical equivalent? Right-handed vs. left-handed surgeons. Same problem, different anatomy.

The lesson isn't just interesting; it's actionable. Domain shift is the governing constraint on production AI in all of these industries. The fix is always the same: identify the gap, find or collect the data, annotate it properly, and retrain.
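
The "identify the gap" step can be as simple as comparing how often a metadata attribute appears in training versus production. The sketch below is one hedged way to do that, assuming each clip carries a label such as surgeon handedness. The `coverage_gaps` helper, the 5% threshold, and the counts are illustrative assumptions, not figures from the webinar.

```python
from collections import Counter


def coverage_gaps(train_values, production_values, min_train_share=0.05):
    """Return attribute values seen in production that are missing or rare in training."""
    train_counts = Counter(train_values)
    total = len(train_values)
    gaps = {}
    for value in sorted(set(production_values)):
        share = train_counts[value] / total if total else 0.0
        if share < min_train_share:
            gaps[value] = share
    return gaps


# Illustrative counts only: training data built entirely on right-handed surgeons.
train_handedness = ["right"] * 200
production_handedness = ["right"] * 45 + ["left"] * 5

gaps = coverage_gaps(train_handedness, production_handedness)
print(gaps)  # values to prioritise when collecting and annotating new data
```

A check like this only catches shifts in attributes you already track. Shifts you haven't thought to label still need the search and curation tooling described elsewhere in this recap.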

Diarmuid also touched on the governance piece, which is critically relevant, especially for medical teams. That covers GDPR and HIPAA compliance, SOC 2, data residency, on-prem deployment options, and restricting Encord from ever accessing the raw data so that only your own annotators and client teams can see it. The compliance stack is built in.

What's Coming: The Roadmap

The Encord Agent Catalog

The model-assisted labelling paradigm that started with SAM is now expanding to cover OCR, audio diarization and transcription, image description (with GPT-4o, Claude, and others), and your own fine-tuned or proprietary models. The goal is to embed AI assistance at every stage of the labelling workflow, so that every hour a human annotator spends is spent on the hardest decisions.

Similarity and Natural Language Search for Data Curation

The ability to query your entire data lake for edge cases, in natural language, or by similarity, is one of the most powerful things Encord does. As fleets grow, as surgical video libraries scale, as robot training datasets expand, the bottleneck stops being annotation and starts being curation. Finding the data that matters. Encord's search and curation tools sit at that inflection point.

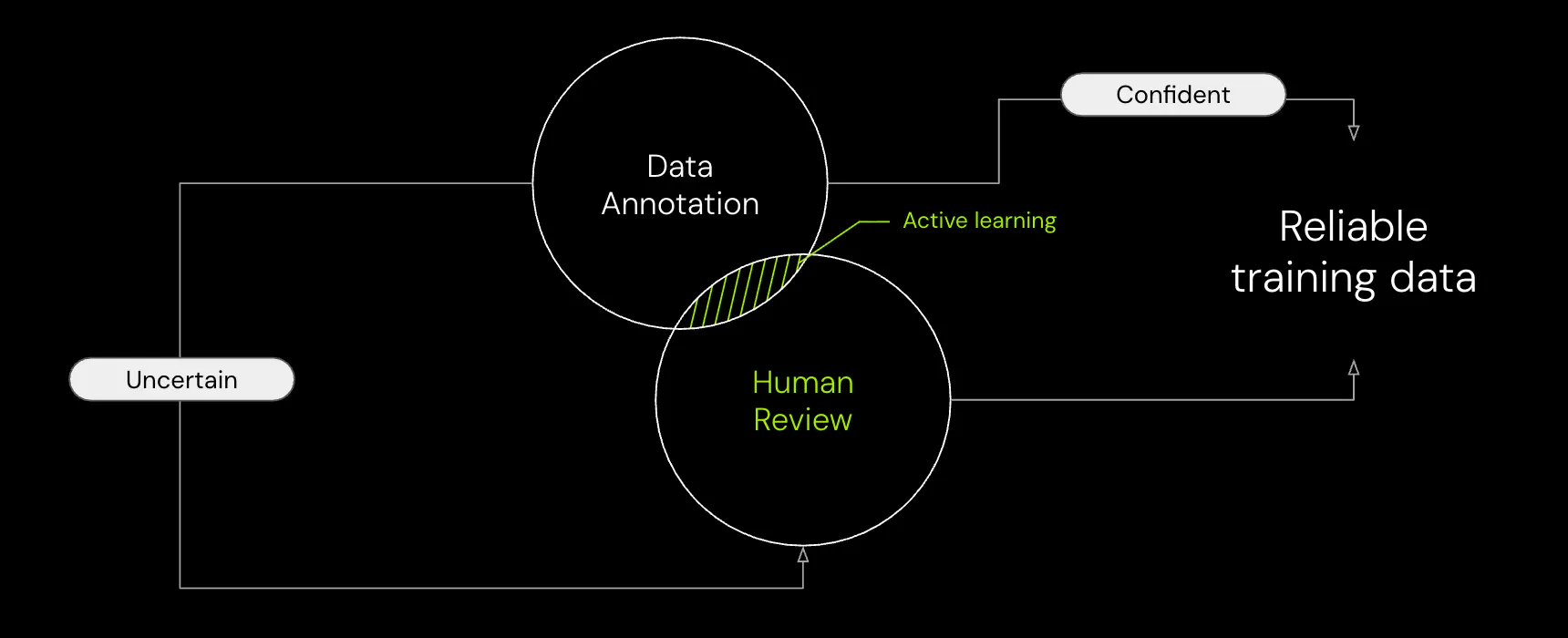

Human-in-the-Loop at Runtime

Thibault's vision for autonomous surgery is a useful north star: the robot handles the reproducible parts; when it reaches a genuinely difficult moment, it calls the surgeon. That vision requires both excellent training data and robust runtime human-in-the-loop infrastructure. Encord covers both sides of that equation: the data pipeline that trains the model and the active learning loop that keeps improving it after deployment.
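
The "handle the routine parts, call the human for the hard ones" pattern is often implemented as confidence-based deferral. The sketch below shows only that routing idea, and it is in no way a surgical control system. The step names, confidence scores, 0.9 threshold, and `route_step` helper are all hypothetical.

```python
def route_step(step_name, confidence, review_queue, threshold=0.9):
    """Proceed when the model is confident; otherwise defer to the human expert."""
    if confidence >= threshold:
        return "proceed"
    # Deferred cases are also queued so they can be annotated and used for retraining.
    review_queue.append(step_name)
    return "call_surgeon"


review_queue = []
# Hypothetical steps with made-up model confidence scores.
steps = [("routine_suturing", 0.97), ("unexpected_bleeding", 0.42), ("port_closure", 0.93)]

decisions = {name: route_step(name, conf, review_queue) for name, conf in steps}
print(decisions)
print(review_queue)
```

Queuing every deferred case is what connects runtime back to training: the moments the model found hard become the next batch of data to annotate.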


The Bottom Line

High-stakes AI is not a model problem. The model is only as safe, and as good, as the data behind it. You need to cover every single use case, and you need a way to identify and handle new edge cases as they appear. That's what Encord is built for.

If you're building AI for surgery, autonomous vehicles, physical robots, radiology, or any other domain where failure has real consequences, the data layer is where the work happens.