Refine AI training data with human-in-the-loop workflows

Improve the quality of your model outputs by generating high-quality preference data for reinforcement learning from human feedback (RLHF), direct preference optimization (DPO), and model evaluation, using Encord’s suite of alignment, labeling, and collaboration tools.

“State-of-the-art models require highly sophisticated infrastructure. Encord Index is a high-performance system for our AI data, enabling us to sort and search at any level of complexity.”

Victor Riparbelli

Co-Founder and CEO at Synthesia

Build human-verified data workflows for RLHF and model evaluation

Differentiate your model performance

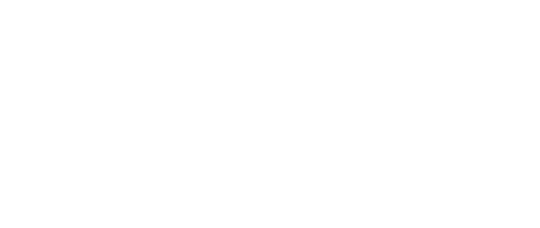

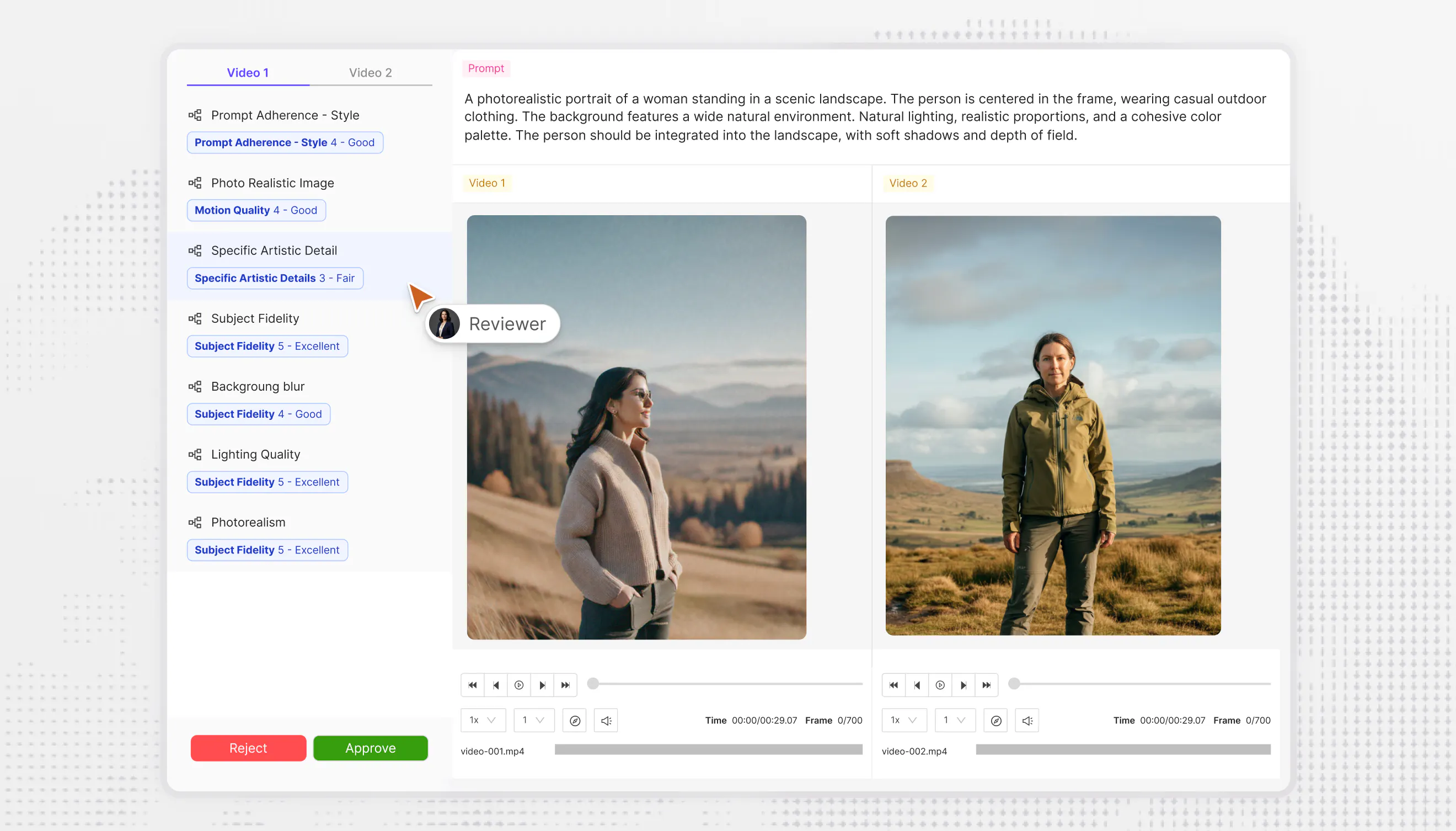

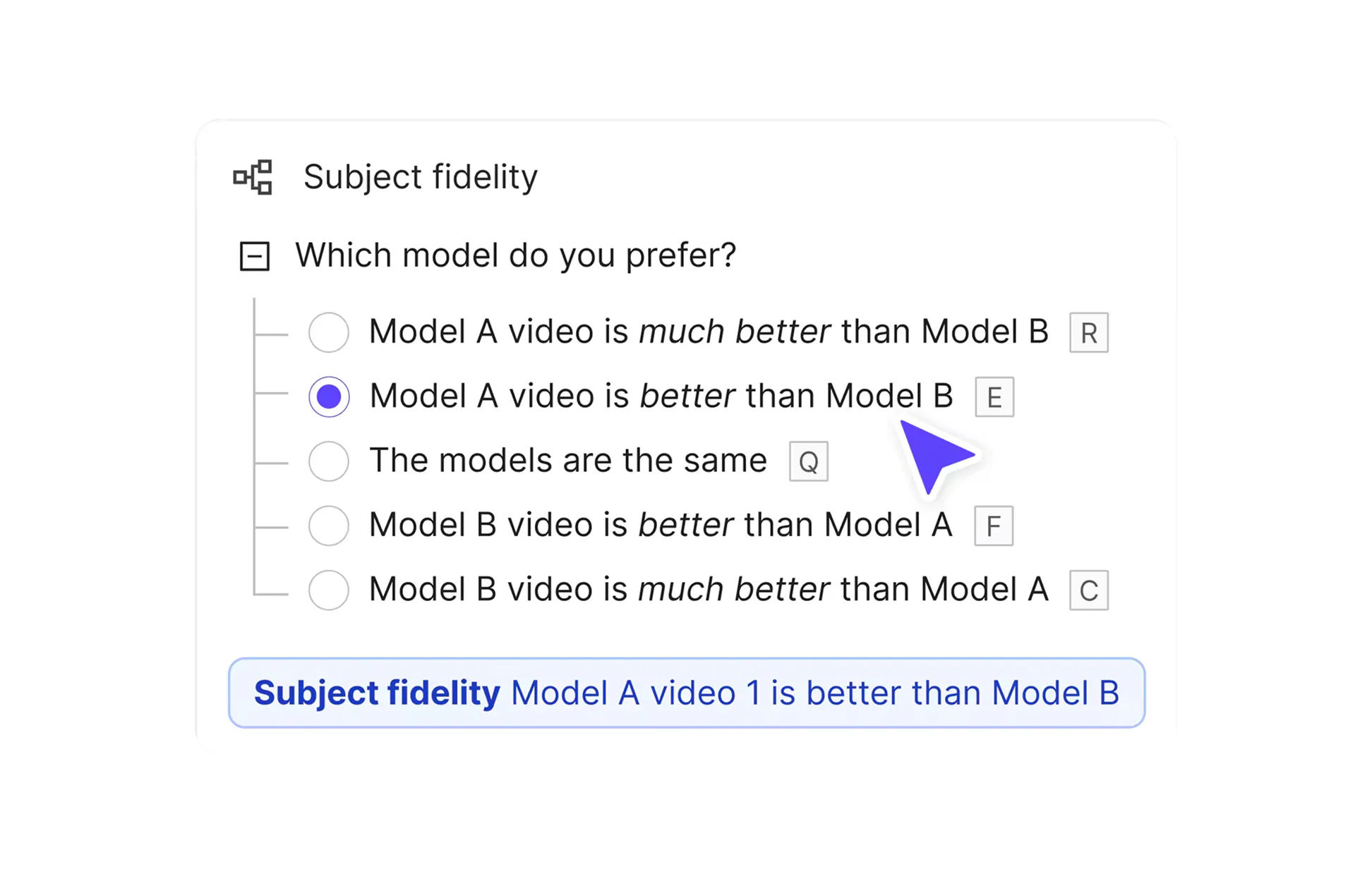

Generate high-quality preference data by configuring data evaluation and alignment workflows.

Design and implement evaluation workflows

Incorporate RLHF, DPO, and other post-training workflows into your training process with Encord’s suite of evaluation tools.

You're in good company

Encord is used by 300+ frontier AI teams to deploy production-ready AI models.

Enterprise-grade.

Built for scale.

Designed for reliable AI.

API/SDK-first. Zero data migration. Your data stays in your cloud.


Get the data right

300+ of the best AI teams in the world use Encord. Join them.