GET READY FOR CVPR

What do you need to know about CVPR?

With the rapid maturation of 3D Gaussian Splatting (3DGS) and Mamba-based neural rendering, the computer vision community is moving beyond simple image recognition toward building "world models" that understand the physics, geometry, and semantics of 3D space.

However, a technical crisis is brewing. While our models are becoming 3D-aware, datasets remain stubbornly 2D. This perspective gap is the single greatest hurdle for engineers trying to achieve state-of-the-art (SOTA) results in 2026.

If you’re heading to the Denver Convention Center in Denver, Colorado, for CVPR from June 3 to June 7, 2026, you’ll notice that this year’s Conference on Computer Vision and Pattern Recognition is defined by one word: grounding.

Researchers are no longer satisfied with a model that can draw a 2D bounding box around a car; they want a model that understands the car's volume, its distance from the sensor, and how its appearance will shift as a camera moves around it.

The "Perspective Gap": Why 2D Labels are Obsolete

For an AI Engineer, the math is simple: a 2D label is a projection that discards the Z-axis. When we train 3D-aware models like SAM 3 or Gaussian-based reconstruction pipelines on 2D images, we are forcing the model to guess the underlying geometry. This creates three primary engineering challenges:

Multi-view inconsistency

If you label a pedestrian in Frame A and Frame B independently, the 2D boxes will never perfectly align in 3D space. This misalignment prevents neural renderers from achieving high-fidelity reconstruction.

Occlusion blindness

2D labels only account for what is visible. In 3D scene reconstruction, the model needs to understand what is behind an object to maintain a consistent world state.

The depth ambiguity

Without Z-axis grounding, models struggle with scale ambiguity, where a small object close to the camera looks identical to a large object far away.
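The scale ambiguity falls straight out of the pinhole camera model. The minimal sketch below assumes an ideal pinhole camera with a hypothetical 1000-pixel focal length and an object facing the camera: projected height is f · H / Z, so doubling both an object's size and its distance leaves its 2D label unchanged.

```python
def projected_height_px(object_height_m: float, depth_m: float, focal_px: float = 1000.0) -> float:
    """Height in pixels of an object under an ideal pinhole camera.

    Assumes the object faces the camera at depth `depth_m` along the optical axis;
    `focal_px` is an illustrative value, not taken from any real sensor.
    """
    if depth_m <= 0:
        raise ValueError("object must be in front of the camera")
    # Perspective projection divides by depth (Z), exactly the quantity a 2D label discards.
    return focal_px * object_height_m / depth_m


# A 0.5 m object at 5 m and a 2 m object at 20 m yield identical 2D box heights.
near_small = projected_height_px(0.5, 5.0)
far_large = projected_height_px(2.0, 20.0)
print(near_small, far_large)  # 100.0 100.0
```

Breaking the tie requires extra information, such as a known object size, a second calibrated view, or a direct depth measurement from LiDAR.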

Solving the grounding crisis with 3D-consistent annotation

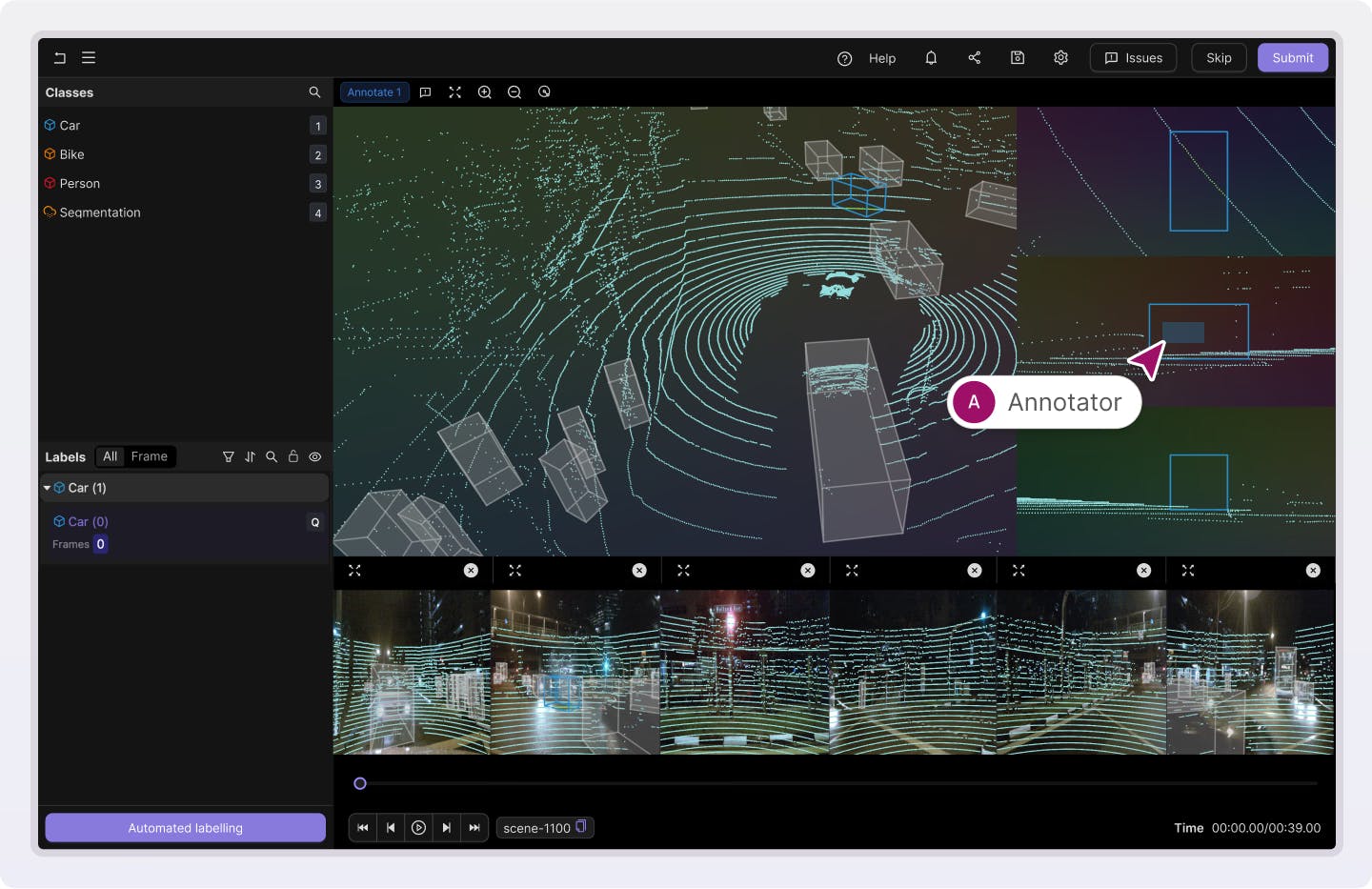

To win at the Conference on Computer Vision and Pattern Recognition 2026, engineers are moving away from frame-by-frame 2D labeling toward 3D-consistent annotation. This means annotating once in 3D space (using LiDAR or multi-view geometry) and projecting those labels back into every 2D camera view.
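The "annotate once, project everywhere" idea can be sketched in plain Python. Everything here is an illustrative assumption rather than any particular tool's implementation: cuboids live in a LiDAR-style world frame (x forward, y left, z up), each camera is an ideal pinhole with a known world-to-camera rotation `R` and translation `t`, and lens distortion is ignored. The function names and camera parameters are hypothetical.

```python
import math
from typing import List, Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Box2D = Tuple[float, float, float, float]  # (u_min, v_min, u_max, v_max) in pixels

# Rotation from a LiDAR-style world frame (x forward, y left, z up)
# to a camera frame (x right, y down, z forward).
R_WORLD_TO_CAM = ((0.0, -1.0, 0.0), (0.0, 0.0, -1.0), (1.0, 0.0, 0.0))


def cuboid_corners(center: Vec3, size: Vec3, yaw: float) -> List[Vec3]:
    """The eight corners of an upright cuboid; `size` is (length, width, height), yaw is about z."""
    c, s = math.cos(yaw), math.sin(yaw)
    corners = []
    for dx in (-size[0] / 2, size[0] / 2):
        for dy in (-size[1] / 2, size[1] / 2):
            for dz in (-size[2] / 2, size[2] / 2):
                # Rotate the local offset about z, then translate to the cuboid centre.
                corners.append((center[0] + c * dx - s * dy, center[1] + s * dx + c * dy, center[2] + dz))
    return corners


def project_to_box(corners: Sequence[Vec3], R, t: Vec3,
                   fx: float, fy: float, cx: float, cy: float) -> Optional[Box2D]:
    """Project corners with X_cam = R @ X_world + t and return the enclosing 2D box.

    Returns None if any corner is behind the camera; a real pipeline would clip
    against the near plane and image bounds instead of discarding the label.
    """
    us, vs = [], []
    for X in corners:
        xc, yc, zc = (sum(R[i][j] * X[j] for j in range(3)) + t[i] for i in range(3))
        if zc <= 0:
            return None
        us.append(fx * xc / zc + cx)
        vs.append(fy * yc / zc + cy)
    return (min(us), min(vs), max(us), max(vs))


# One cuboid, labelled once: a 2 m cube 10 m ahead of the sensor.
cube = cuboid_corners(center=(10.0, 0.0, 0.0), size=(2.0, 2.0, 2.0), yaw=0.0)
# Two hypothetical cameras: one at the origin, one mounted 1 m to its right (t = -R @ position).
cameras = {"front": (0.0, 0.0, 0.0), "front_right": (-1.0, 0.0, 0.0)}
labels_2d = {name: project_to_box(cube, R_WORLD_TO_CAM, t, 100.0, 100.0, 50.0, 50.0)
             for name, t in cameras.items()}
```

Because every 2D box is derived from the same cuboid, the two views cannot disagree about where the object is, which is the property that independent frame-by-frame 2D labels lack.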

The most successful papers this year are leveraging multimodal sensor fusion. By combining RGB camera data with high-precision LiDAR point clouds, engineers can create "ground truth" that is geometrically consistent across every camera view.

• Curation: It’s no longer about having 1 million images; it’s about having 10,000 time-synchronized sequences.

• Annotation: Instead of drawing boxes on pixels, engineers are fitting 3D cuboids to point clouds, ensuring that the label remains "locked" to the object's physical coordinates across every possible camera angle.
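Fitting a cuboid to a point cloud can be approximated, for illustration, with principal component analysis (PCA) on the bird's-eye-view footprint: the dominant direction of the points gives the yaw, and the extents along the rotated axes give the size. This is a swapped-in textbook heuristic, not how any particular annotation tool works; the function name and the synthetic test cloud are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def fit_cuboid_bev_pca(points: Sequence[Point]) -> Tuple[Point, Point, float]:
    """Return (center, (length, width, height), yaw) for an upright cuboid around `points`.

    Yaw comes from the principal axis of the x/y footprint, so it is only defined
    modulo pi (PCA cannot tell the front of an object from its back).
    """
    if len(points) < 3:
        raise ValueError("need at least three points")
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mx) ** 2 for p in points) / n
    syy = sum((p[1] - my) ** 2 for p in points) / n
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / n
    # Closed-form angle of the major eigenvector of the 2x2 covariance matrix.
    yaw = 0.5 * math.atan2(2.0 * sxy, sxx - syy)
    c, s = math.cos(yaw), math.sin(yaw)
    # Rotate points by -yaw into the box-aligned frame.
    us = [c * (p[0] - mx) + s * (p[1] - my) for p in points]
    vs = [-s * (p[0] - mx) + c * (p[1] - my) for p in points]
    zs = [p[2] for p in points]
    uc, vc = (max(us) + min(us)) / 2, (max(vs) + min(vs)) / 2
    # Rotate the box-frame centre back into the world frame.
    center = (mx + c * uc - s * vc, my + s * uc + c * vc, (max(zs) + min(zs)) / 2)
    size = (max(us) - min(us), max(vs) - min(vs), max(zs) - min(zs))
    return center, size, yaw


# Synthetic "LiDAR" returns: a 4 m x 2 m x 1.5 m box centred at (10, 5, 0.75), yawed 0.5 rad.
true_yaw = 0.5
cloud: List[Point] = []
for i in range(9):
    for j in range(5):
        for k in range(3):
            u, v, z = -2.0 + 0.5 * i, -1.0 + 0.5 * j, 0.75 * k
            cloud.append((10.0 + math.cos(true_yaw) * u - math.sin(true_yaw) * v,
                          5.0 + math.sin(true_yaw) * u + math.cos(true_yaw) * v, z))

center, size, yaw = fit_cuboid_bev_pca(cloud)
```

Real LiDAR usually sees only one or two sides of an object, which biases a PCA fit; that is one reason cuboids are typically refined by a human annotator or a more robust fitting method.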

In conversation with a computer vision data expert: James Clough, VP of Engineering at Encord

To get a better sense of how teams attending CVPR 2026 are navigating the shift from 2D to 3D on the ground, we sat down with James Clough, Encord’s VP of Engineering, to discuss the growing prominence of 3D data in CVPR research papers.

1. CVPR 2026 marks a shift toward '3D grounding' as a primary theme. Why is the transition from 2D object recognition to understanding physical 3D coordinates the most critical hurdle for researchers at the conference this year?

A lot of the computer vision applications people were interested in in the past involved being given an image or a video from a camera, for example, and asking questions like: “Can we tell what's going on in that image or video? Can we detect things?” But now, a lot of the most exciting applications involve physical objects like robots and self-driving cars. Because they're not static cameras but objects that move in the real, 3D physical world, they have a new kind of problem.

That means their perspective on things changes, right? If the car drives past something, then the car needs to understand that it is the car moving, and not all of these other objects around it moving, to be able to correctly predict what's going to happen in the world. So, if you need to not just detect what's in front of you, but you need to know what will happen if you drive forward, you need to have a 3D understanding of the world. You need to know which things are near and which things are far away and which things are moving relative to you and how quickly they're moving.

Similarly for robots, if the robot has arms and hands and is walking around your house picking things up, it needs to have a 3D understanding of the world. It needs to know that if something becomes temporarily obscured by another object, it hasn't disappeared forever. It's still there, just hiding behind that other object, and the robot can still go and pick it up. It needs to understand that if it falls over, the entire world hasn’t rotated 90 degrees; the robot has rotated 90 degrees.

Those are all things that you didn't need in lots of traditional computer vision applications, like trying to understand handwriting, for example. The object doing that wasn't moving around in the 3D world.

I think it's really application-driven. Better models have unlocked these new applications, these new applications require a more complicated understanding of the world, and, ultimately, the world is 3D even if cameras take 2D images.
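Clough's point about a car telling its own motion apart from the motion of everything around it comes down, in its simplest form, to a change of coordinate frames. This is a minimal bird's-eye-view sketch under stated assumptions: the ego pose (x, y, yaw) in a fixed world frame is known exactly (in practice it comes from odometry or localization), the ground is flat, and the function names are hypothetical.

```python
import math
from typing import List, Tuple

Pose2D = Tuple[float, float, float]  # ego (x, y, yaw) in a fixed world frame, bird's-eye view
XY = Tuple[float, float]


def world_to_ego(p: XY, pose: Pose2D) -> XY:
    """Where a world-frame point appears from the vehicle's own point of view."""
    x, y, yaw = pose
    c, s = math.cos(yaw), math.sin(yaw)
    dx, dy = p[0] - x, p[1] - y
    return (c * dx + s * dy, -s * dx + c * dy)


def ego_to_world(p: XY, pose: Pose2D) -> XY:
    """Undo the ego motion: map a sensor observation back into the fixed world frame."""
    x, y, yaw = pose
    c, s = math.cos(yaw), math.sin(yaw)
    return (x + c * p[0] - s * p[1], y + s * p[0] + c * p[1])


# A parked car 20 m ahead and 3 m to the left, observed while the ego vehicle drives 1 m per frame.
parked_car = (20.0, 3.0)
poses: List[Pose2D] = [(float(k), 0.0, 0.0) for k in range(5)]
observed = [world_to_ego(parked_car, pose) for pose in poses]
recovered = [ego_to_world(obs, pose) for obs, pose in zip(observed, poses)]
# In the ego frame the parked car seems to approach; in the world frame it never moves.
```

Without the pose, the sequence in `observed` is indistinguishable from a car reversing towards a stationary camera, which is exactly the confusion Clough describes.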

2. The AI community is talking about the collapse of the wall between computer vision and computer graphics at CVPR. How are technologies like Neural Rendering, NeRFs, and Gaussian Splatting changing the way we define 'ground truth' for 2026 models?

I think the gist here is that computer graphics typically works by having some sort of model of the world inside your computer. Normal computer graphics, like in a computer game, works by saying “I have this object here, I have this object there, this one's that color”. You calculate where the light is shining and how bright various objects would be from various places. That's how you then show what's going on on the user's screen.

But there's a new generative AI computer vision approach where, instead of calculating all of that stuff, you just basically have a model that predicts with a neural network things like: “if I was standing here, what kind of thing would I see?” And that used to work really badly because, in the same way that old GenAI models would show people with six fingers and three legs, it wouldn't necessarily be consistent over time.

There are lots of applications where this generative approach is much more efficient than calculating everything, especially in computer graphics areas where you don't really care too much about long-term consistency. Let's say you're trying to display what the clouds in the sky look like in a video game. Do you really need to have an actual model of all the clouds, or can you just generate some cloud-like things and do that in real time? I think there are lots of new GenAI-based computer graphics approaches that are becoming very good.

The other side of things is that people are generating synthetic data to train those models. One way of generating data to train your computer vision model is to go out in the world with a camera, take pictures, annotate things and say, "that's a cat, that's a dog, that's a tree." But the other thing you could do is take those pictures from a video game or another generated world. The advantage of doing this is that the video game already knows that's a cat. It knows that's a dog. It knows that's a tree, because it's using that information to generate the graphics in the first place. You don't need to annotate anything if you're generating it from synthetic computer graphics.

There's a merger from both directions. Computer vision GenAI approaches are becoming more of a tool in your computer graphics arsenal. And, at the same time, traditional computer graphics is a great way of generating synthetic data to help train your model. So, these used to be two different worlds and now they're both contributing to each other.
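The "video game already knows that's a cat" point can be shown with a deliberately tiny stand-in for a game engine. In this hypothetical sketch (the renderer, class names and colours are all made up for illustration), the scene generator places objects itself, so it emits class labels, full object extents and per-pixel instance masks at the same moment it draws the image, including for objects that end up partly hidden.

```python
import random
from typing import Dict, List, Tuple

# Illustrative classes and flat colours; a real engine would render meshes, textures and lighting.
CLASS_COLOURS: Dict[str, Tuple[int, int, int]] = {
    "cat": (200, 120, 40),
    "dog": (90, 60, 30),
    "tree": (30, 140, 50),
}


def render_synthetic_scene(width: int, height: int, n_objects: int, seed: int = 0):
    """Paint random rectangles and return (rgb, instance_mask, labels).

    Later objects are drawn over earlier ones, so some objects end up partly hidden,
    yet the generator still knows each object's class and full (amodal) extent.
    """
    if width < 4 or height < 4:
        raise ValueError("scene must be at least 4x4 pixels")
    rng = random.Random(seed)
    rgb = [[(0, 0, 0)] * width for _ in range(height)]
    instance = [[-1] * width for _ in range(height)]  # -1 marks background pixels
    labels: List[dict] = []
    for obj_id in range(n_objects):
        cls = rng.choice(sorted(CLASS_COLOURS))
        w, h = rng.randint(2, width // 2), rng.randint(2, height // 2)
        x0, y0 = rng.randint(0, width - w), rng.randint(0, height - h)
        for y in range(y0, y0 + h):
            for x in range(x0, x0 + w):
                rgb[y][x] = CLASS_COLOURS[cls]
                instance[y][x] = obj_id
        # No annotator needed: the generator placed the object, so the label is exact.
        labels.append({"id": obj_id, "class": cls, "amodal_box": (x0, y0, x0 + w, y0 + h)})
    # Visibility is free as well: count the pixels each object still owns after occlusion.
    for label in labels:
        label["visible_px"] = sum(row.count(label["id"]) for row in instance)
    return rgb, instance, labels


rgb, instance, labels = render_synthetic_scene(32, 24, n_objects=4, seed=7)
```

The limitation is the one raised elsewhere in the interview: such a generator only produces the variety someone has programmed into it.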

3. A major theme for CVPR 2026 is the 'perspective gap’, where models are 3D-aware but datasets remain 2D. What are the technical implications of 'multi-view inconsistency,' and why is it preventing neural renderers from achieving state of the art results?

Multi-view consistency refers to the idea that, if you have two different cameras looking at a scene in the real world, the views from those cameras will be consistent with each other. Multi-view inconsistency is what you get when that breaks down.

For example, if there are two cameras looking at a group of people, then you know that the color of their t-shirts will be the same in both cameras because they're the same people. If you're using traditional computer graphics, that will probably be true as well, because traditional computer graphics works by having a model of all of the people and the colors of their t-shirts, shining the light on them, and calculating where the light would go, so you end up with something that's consistent. But, if you're using GenAI to generate those views from your cameras, then there's no reason why they necessarily have to be consistent at all.

For the same reason that AI can generate a hand with six fingers, two AI-generated views of the same scene might have inconsistencies between them, because there are no real-world objects defining what's going on to enforce consistency between the images.

That's the challenge from a data generation perspective. Why does it happen? To be honest, I think it's because making sure that generated images and neural renders are consistent is just very, very difficult. It’s something that AI has traditionally been quite bad at. But there's probably also not a lot of data that can help you there, right?

There are loads and loads of images on the internet that you can train a model on, but how many data sets are there of 10 photographs taken of the same thing from different angles at exactly the same time?

So, training and enforcing consistency using data is quite hard because there aren't many good data sets out there compared to the amount of data there is of just images on the internet.
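The t-shirt example translates directly into a simple check. The sketch below is a toy photometric-consistency test, not a method described in the interview: it assumes known camera poses (identity rotation, translation only), hypothetical pinhole intrinsics, sparse dictionary "images", and no occlusion or lighting changes. It projects each 3D point into every view and reports how far the sampled colours disagree.

```python
from typing import Dict, List, Optional, Sequence, Tuple

Point = Tuple[float, float, float]
RGB = Tuple[int, int, int]
Image = Dict[Tuple[int, int], RGB]  # sparse image: pixel (u, v) -> colour
Translation = Tuple[float, float, float]

FOCAL_PX, CENTRE_PX = 100.0, 50.0  # hypothetical intrinsics shared by both cameras


def project(p: Point, t: Translation) -> Optional[Tuple[int, int]]:
    """Pinhole projection for a camera with identity rotation: X_cam = X_world + t."""
    x, y, z = p[0] + t[0], p[1] + t[1], p[2] + t[2]
    if z <= 0:
        return None
    return round(FOCAL_PX * x / z + CENTRE_PX), round(FOCAL_PX * y / z + CENTRE_PX)


def render(scene: Sequence[Tuple[Point, RGB]], t: Translation) -> Image:
    """A 'consistent' renderer: every view reads its colours from the same 3D scene."""
    image: Image = {}
    for p, colour in scene:
        px = project(p, t)
        if px is not None:
            image[px] = colour
    return image


def colour_disagreement(points: Sequence[Point],
                        views: Sequence[Tuple[Translation, Image]]) -> List[Optional[int]]:
    """Per 3D point, the largest per-channel colour spread across the views that see it."""
    scores: List[Optional[int]] = []
    for p in points:
        samples = []
        for t, image in views:
            px = project(p, t)
            if px is not None and px in image:
                samples.append(image[px])
        if len(samples) < 2:
            scores.append(None)  # seen by fewer than two views: nothing to compare
            continue
        scores.append(max(max(s[ch] for s in samples) - min(s[ch] for s in samples) for ch in range(3)))
    return scores


# Two people in different t-shirts, 10 m in front of camera A; camera B sits 0.5 m to its right.
scene = [((-0.5, 0.0, 10.0), (220, 30, 30)), ((0.5, 0.0, 10.0), (30, 30, 220))]
points = [p for p, _ in scene]
cam_a, cam_b = (0.0, 0.0, 0.0), (-0.5, 0.0, 0.0)

rendered = colour_disagreement(points, [(cam_a, render(scene, cam_a)), (cam_b, render(scene, cam_b))])
# Simulate a generated view B that "forgets" the first shirt colour.
generated_b = render([(points[0], (30, 200, 30)), scene[1]], cam_b)
generated = colour_disagreement(points, [(cam_a, render(scene, cam_a)), (cam_b, generated_b)])
```

A renderer that reads from one shared 3D scene scores zero by construction, while the generated view that changes one shirt colour is flagged. Practical checks have to handle occlusion, lighting and sub-pixel sampling, which this sketch ignores.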

4. What are the practical challenges of collecting and annotating 3D training data, and why is it worth the effort?

Firstly, getting the data in the first place is going to be a lot harder. There are no large data sets on the internet to support this kind of training. There's no YouTube to scrape, and there aren't billions of images already uploaded. Whatever is publicly available is orders of magnitude smaller and much less diverse than earlier computer vision training data. So, as a first challenge, just getting the same diversity and volume of raw data will be hard.

There's an element of bootstrapping involved. A few companies have invested a lot of time (decades, in fact) and a lot of capital to build pipelines that can effectively collect 3D data from the world around us. Think about AV companies, or products like Google Earth and Google Maps; those are few and far between. To bootstrap, you need a system in the wild that is able to collect data. The new generation of self-driving and robotics companies in particular really need to have a system, or robot, deployed that is able to collect data. But getting that first cycle going is going to be very, very hard, given how hard it is to get the raw data. That's an unsolved problem right now.

Now, let's assume you have a pile of raw data that you’ve managed to acquire. The second problem is that creating ground truth, the annotations, is so much harder than for 2D modalities like images and video.

There are no off-the-shelf models that are very good. The 3D models that do work are less precise than the ones for video and images, and they run slower too. And when it comes to human interaction, with people making the annotations, reviewing them or correcting them, that is also an order of magnitude slower, much more resource-intensive, and a lot easier to get wrong. Just getting a good amount of diverse data and getting it annotated is a much tougher problem than it is for 2D.

So why are we going through all this pain? The real world is three-dimensional. If we want a model to understand the world and make predictions within it (which is what generative models do: they predict the next word, the next action or, more generally, what will happen next), it needs a more nuanced and detailed understanding.

It’s like Plato's allegory of the cave. The 2D representation is the shadows on the cave wall that people observe, but those shadows aren't reality. Reality is 3D. Models can make some pretty good predictions from 2D images, but if you want them to understand reality and you can give them 3D information, they'll have something more accurate and more real to train on. You get better models.

Over time, we've learned that architecture matters, but data quality matters more over the long term.

As for the trajectory of world model development, I think it will go differently than it has for transformer models. What you need to train a transformer model is fairly easy to get hold of. You need a lot of GPUs, which you need for world models as well, but the data is readily available: you can go and scrape the internet for text, images and videos. For 3D data, by contrast, a world model is going to come from one of the companies that have self-driving cars, like Waymo or Tesla.

The other possibility is a robotics company that manages to build a flywheel for data collection, but few companies in the field will have the prerequisite data-gathering and annotation capabilities.

5. Success at CVPR 2026 is being defined by geometrically consistent data rather than just raw volume. How does the focus on finding missing perspectives and surgical data curation replace the traditional 'more data is better' approach for researchers heading to CVPR?

Say you want to train models on problems where there isn't a huge amount of free data available on the internet. That's the case for high-quality text data, but it's also the case for multi-view image data of 3D scenes.

If you’ve got very little data, then you can't trust that a large volume of data will wash out all of the problems you’re experiencing. You need to make sure that the quality is extremely high, because any quality problems in your data will be magnified by the fact that there isn't lots of other data to make up for them. So, if you're working in a low-volume data regime, which is the case here, quality is the only lever you've got. And ensuring high quality means very meticulous data curation: actually looking at all of the data in your data set, making sure that it's consistent, and making sure that it has the properties you want it to have.

In the past, researchers might have spent their time churning through huge data sets. Now the work is actually looking at your 3D scenes, looking at all of these perspectives, and making sure that you're not missing anything. That's going to have to replace the “just throw more data at it” approach, because you don't have more data to throw at it.

Now, it's always possible to use the synthetic data approach, where you have computer-generated worlds that you can use to generate as much data as you like. The challenge there is that the diversity of scenes in those generated worlds is limited to what you program. The real world has all of the diversity you care about but, unless you explicitly program all of that into your synthetic data, you're going to end up missing something.
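One crude way to make "looking at all of these perspectives" systematic is to bin each camera's viewing direction around the subject and list the empty bins. In this sketch the eight azimuth bins, the 15° elevation split and the function names are arbitrary, hypothetical choices; it ignores occlusion, distance and image quality, all of which matter in practice.

```python
import math
from typing import Dict, List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def viewpoint_coverage(cameras: Sequence[Vec3], target: Vec3, n_azimuth_bins: int = 8,
                       elevation_edges_deg: Sequence[float] = (-90.0, 15.0, 90.0)) -> Dict[Tuple[int, int], int]:
    """Count cameras per (azimuth bin, elevation band) as seen from `target`."""
    n_bands = len(elevation_edges_deg) - 1
    counts = {(a, e): 0 for a in range(n_azimuth_bins) for e in range(n_bands)}
    bin_width = 360.0 / n_azimuth_bins
    for cam in cameras:
        dx, dy, dz = cam[0] - target[0], cam[1] - target[1], cam[2] - target[2]
        azimuth = math.degrees(math.atan2(dy, dx)) % 360.0
        elevation = math.degrees(math.atan2(dz, math.hypot(dx, dy)))
        a = int(azimuth // bin_width) % n_azimuth_bins
        for e in range(n_bands):
            if elevation_edges_deg[e] <= elevation <= elevation_edges_deg[e + 1]:
                counts[(a, e)] += 1
                break
    return counts


def missing_perspectives(counts: Dict[Tuple[int, int], int]) -> List[Tuple[int, int]]:
    """(azimuth bin, elevation band) cells that no camera covers."""
    return sorted(cell for cell, n in counts.items() if n == 0)


# Hypothetical capture: nine cameras at 1.5 m height circling only the front half of an object.
cams = [(10 * math.cos(math.radians(d)), 10 * math.sin(math.radians(d)), 1.5) for d in range(0, 180, 20)]
coverage = viewpoint_coverage(cams, target=(0.0, 0.0, 0.0))
gaps = missing_perspectives(coverage)
```

Here `gaps` lists the whole back half at eye level plus every high-elevation bin: the views a reconstruction of this object would have to guess.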

The Encord data engine: building for 3D awareness at CVPR

At Encord, we’ve built our platform to solve the 3D grounding crisis being discussed at CVPR 2026. We believe that to train a world model, you need a data engine that understands the world in 3D.

Native multimodal support

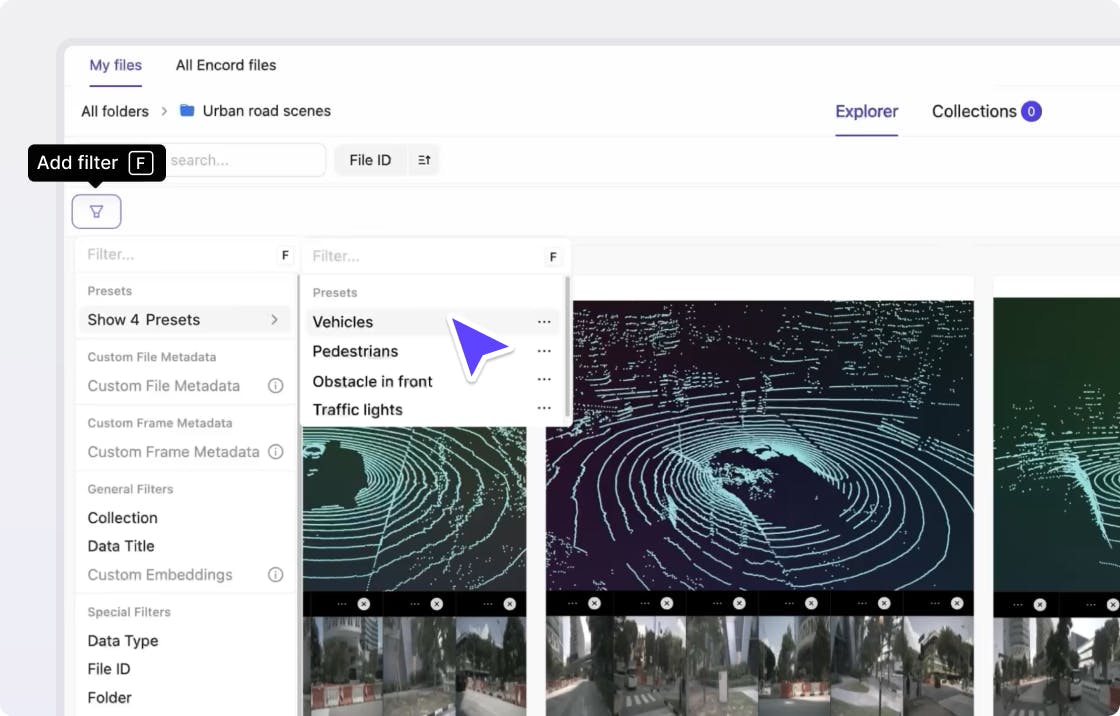

Encord Index allows you to visualize and curate LiDAR + RGB fusion data in a single unified view. You can identify the specific frames where your 3D-to-2D projections drift, allowing for surgical data correction.
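Encord's own implementation is not described here. As a generic, hypothetical illustration of the underlying idea, one way to spot drift is to compare, frame by frame, the 2D box obtained by projecting a 3D label with an independent 2D reference (for example a detector output or a spot-checked manual box) and flag frames where their intersection-over-union (IoU) falls below a threshold. The 0.7 threshold and all names below are assumptions, not Encord APIs.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (u_min, v_min, u_max, v_max) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned 2D boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def flag_drifting_frames(projected: Dict[int, Box], reference: Dict[int, Box],
                         min_iou: float = 0.7) -> List[int]:
    """Frames where a projected 3D label and an independent 2D reference box disagree."""
    return [f for f in sorted(projected) if f in reference and iou(projected[f], reference[f]) < min_iou]


# Hypothetical per-frame boxes for one object; in frame 3 the projection has slipped 10 px.
reference = {f: (10.0 + f, 10.0, 30.0 + f, 30.0) for f in range(5)}
projected = dict(reference)
projected[3] = (23.0, 10.0, 43.0, 30.0)
print(flag_drifting_frames(projected, reference))  # [3]
```

Persistently low IoU across many consecutive frames can point to a calibration or timestamp-synchronization problem rather than a single bad label.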

3D-to-2D label projection

Stop labeling every frame. The Encord platform allows you to annotate a 3D cuboid once and automatically propagate that label across all camera views with sub-pixel precision.

Distributional curation for neural rendering

Use Encord Active to find the "missing perspectives" in your training set. If your Gaussian Splatting model is blurry in a specific corner of a room, Encord can programmatically surface the exact frames needed to fix the reconstruction.

The 3D path to CVPR

The papers that earn oral status at the Conference on Computer Vision and Pattern Recognition 2026 won't be the ones with the most GPUs; they will be the ones with the most geometrically consistent data.

By bridging the perspective gap with a data-centric approach, you move from training detectors to engineering intelligence that understands the 3D world as it truly exists.

Heading to Denver? Schedule a deep dive with our team to see how Encord is powering the next generation of 3D-aware world models.

Frequently asked questions

The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2026 will be held from June 3 to June 7, 2026, at the Denver Convention Center in Denver, Colorado. Tutorials and workshops are scheduled for the first two days (June 3–4), followed by the main conference tracks.

As the industry moves toward Embodied AI and autonomous systems, the limitations of 2D vision have become a critical bottleneck. "3D Grounding" refers to the ability of a model to relate visual features to their actual physical coordinates in a 3D environment. With the rise of Neural Rendering (NeRFs and Gaussian Splatting), researchers are shifting focus from simply "recognizing" objects to reconstructing entire interactive "World Models."

New for the 2026 cycle, CVPR has introduced a pilot initiative called the Compute Reporting Form. This is a mandatory disclosure where authors report the hardware specifications (GPUs/CPUs) and total compute time used for their research. While it does not affect acceptance decisions, it is designed to increase transparency around the environmental impact and resource accessibility of SOTA computer vision.

Yes, the 3rd Workshop on Synthetic Data for Computer Vision (SynData4CV) is a major highlight this year. It focuses specifically on the "Domain Gap": the statistical difference between simulated training environments and real-world deployment. If you are struggling with "sim-to-real" transfer for your CVPR project, this workshop provides the latest benchmarks on using generative AI for data augmentation.