The Full Guide to Outsourcing Data Labeling for Machine Learning

Co-Founder & Co-CEO at Encord

Annotation and labeling of raw data — images and videos — for machine learning (ML) models is the most time-consuming and laborious, albeit essential, phase of any computer vision project.

Quality outputs and the accuracy of an annotation team’s work have a direct impact on the performance of any machine learning model, regardless of whether an AI (artificial intelligence) or deep learning algorithm is applied to the imaging datasets.

Organizations across dozens of sectors — healthcare, manufacturing, sports, military, smart city planners, automation, and renewable energy — use machine learning and computer vision models to solve problems, identify patterns, and interpret trends from image and video-based datasets.

Every computer vision project starts with annotation teams labeling and annotating the raw data: vast quantities of images and videos. Successful annotation outcomes ensure an ML model can ‘learn’ from this training data, solving the problems organizations and ML team leaders set out to solve.

Once the problem and project objectives and goals have been defined, organizations have a not-so-simple choice for the annotation phase: Do we outsource or keep imaging and video dataset annotation in-house?

💡Instead of outsourcing you might consider using Active Learning in your next machine learning project.

In this guide, we seek to answer that question, covering the pros and cons of outsourced video and image data labeling vs. in-house labeling, 7 best practice tips, and what organizations should look for in annotation and machine learning data labeling providers.

Let’s dive in . . .

What is In-House Data Labeling?

In-house data labeling and annotation, as the name suggests, involves recruiting and managing an internal team of dataset annotators and big data specialists. Depending on your sector, this team could be image and video specialists, or professionals in other data annotation fields.

Before you decide, “Yes, this is what we need!”, it’s worth considering the pros and cons of in-house data labeling compared to outsourcing this function.

What Are The Pros and Cons of In-House Annotation?

Pros

- Providing you can source, recruit, train, and retain a team of annotators, annotation managers, and data scientists/quality control professionals, then you’ve got the human resources you need to manage ongoing annotation projects in-house.

- With an in-house team, organizations can benefit from closer monitoring, better quality control, higher levels of data security, and more control over outputs and intellectual property (IP).

- Regulatory compliance, data transfers, and storage are also easier to manage with an in-house data labeling team. Everything stays internal, so there’s no need to worry about data getting lost in transit, although there’s still the risk of data breaches.

Cons

- On the other hand, recruiting an in-house team can prove prohibitively expensive, especially if you want the advantage of having that team close, or on-site, alongside ML, data science, and other cross-functional and inter-connected teams.

- Running an in-house data labeling service is a volume-based operation. Project leaders should ask themselves, how much data will the team need to annotate? How long should this project last? After it’s finished, do we need a team of annotators to help us solve another problem, or should we recruit on short-term contracts?

- Companies making these calculations also need to assess whether extra office space is needed, and whether they will need to build or buy specialist software and tools for annotation and data labeling projects. All of this increases the startup costs of putting together an annotation team.

- Image and video data annotation isn’t something you can dump on data science or engineering departments. They might have the right skills and tools, but this is a project that requires a dedicated team, especially when you factor in quality control, compliance considerations, and ongoing requests for new data to support the active learning process.

Even for experienced project leaders, this isn’t an easy call to make. In many cases, 6 or 7-figure budgets are allocated for machine learning and computer vision projects. Outcomes depend on the quality and accuracy of the labels in image and video training datasets, and these can have a huge impact on a company, its customers, and stakeholders.

Hence the need to consider the other option: Should we consider outsourcing data annotation projects to a dedicated, experienced, proven data labeling service provider?

What is Outsourced Data Labeling?

Instead of recruiting an in-house team, many organizations generate a more effective return on investment (ROI) by partnering with third-party, professional, data annotation service providers.

Taking this approach isn’t without risk, of course. Outsourcing never is, regardless of what services the company outsources, and no matter how successful, award-winning, or large a vendor is. There’s always a danger something will go wrong. Not everything will turn out as you hoped.

However, in many cases, organizations in need of video and image annotation and data labeling services find the upsides outweigh the risks and costs of doing this in-house. Let’s take a closer look at the pros and cons of outsourced annotation and labeling.

What Are The Pros and Cons of Outsourcing Data Annotation?

Pros

- Reduced costs. Outsourcing doesn’t involve any of the financial and legal obligations of hiring and retaining (and providing benefits for) an in-house team of annotators. Those costs, including office space and annotation software, tools, and technology, are absorbed by your data labeling and annotation service provider. Also, many outsourced providers are based in lower-cost regions and countries, generating substantial savings compared to recruiting a whole team in the US or Western Europe. Therefore, outsourcing data annotation projects can lead to significant cost and time savings.

- An on-demand partnership. Once a project is finished, you don’t need to worry about retaining a team when there’s nothing for them to do. An upside of this is, if there is more image and video annotation work in the pipeline, you can maintain a long-term relationship with a provider of your choosing, and return to them when you need them again.

- Upscale and downscale annotation capacity as required. If there’s a seasonal nature to your annotation project demands, then working with an outsourced provider can ensure you’ve got the resources when you need them.

- Quality control and benchmarking. Trusted and reliable outsourcing data annotation service providers know they are assessed on the quality of their work and annotation projects. External providers know they need to deliver high-quality, accurate annotations to secure long-term clients and repeat business. Professional companies should have their own quality control and benchmarking processes. Provided you’ve got in-house data science and ML experts, then you can also assess their work before training datasets are fed into machine learning models.

- Speed and efficiency. Recruiting and managing an in-house team takes time. With an outsourced partner, you can have a proof of concept (POC) project up and running quickly. An initial batch of annotated images and videos is usually delivered fairly quickly too, in comparison to the time it takes for an in-house team to get up to speed.

Cons

- Less control. Build vs. buy has upsides and downsides either way. When outsourcing, you are buying annotation services and, therefore, have less control over the team and the process.

- Domain expertise. When you work with an external provider, they may not have the sector-specific expertise that you need. Medical and healthcare organizations need teams of annotators that have experience with medical imaging and video annotation datasets. Ideally, you need a provider who knows how to work with, annotate, and label different formats, such as DICOM or NIfTI.

- Teething problems and quality control. Working with an outsourced annotation provider involves trusting the provider to deliver, on time and within budget. Because the annotation team and process aren’t in your control, there’s always the risk of teething problems and poor-quality datasets being delivered. If that happens, project and ML leaders need to instruct the provider to re-annotate the images and videos to improve the accuracy and quality, and reduce any dataset problems, such as bias.

- Price considerations. Data annotation and labeling — for images, videos, and other datasets — is a competitive and commoditized market. Providers are often in less economically developed regions — South East Asia, Latin America, India, Africa, and Central & Eastern Europe (CEE) — ensuring that many of them offer competitive rates. However, you must remember that you get what you pay for. Cheaper doesn’t always mean better. When the quality and accuracy of dataset annotation work can have such a significant impact on the outcomes of machine learning and computer vision projects, you can’t risk valuing price over expertise, quality control, and a reliable process.
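
To make the price-versus-quality point concrete, here is a minimal sketch that compares providers by the cost of a label that actually passes your QA, rather than by headline rate. The function name, the simplifying assumption that rejected labels are paid for but unusable, and all figures are illustrative and not real vendor pricing.

```python
def cost_per_accepted_label(price_per_label: float, acceptance_rate: float) -> float:
    """Effective cost of one label that passes QA.

    Assumption (illustrative): every delivered label is billed,
    and labels that fail QA are discarded.
    """
    if not 0 < acceptance_rate <= 1:
        raise ValueError("acceptance_rate must be in (0, 1]")
    return price_per_label / acceptance_rate


# Hypothetical providers: a cheaper one with more rejected labels,
# and a pricier one whose work passes QA more often.
budget_provider = cost_per_accepted_label(price_per_label=0.04, acceptance_rate=0.60)
premium_provider = cost_per_accepted_label(price_per_label=0.06, acceptance_rate=0.95)

print(f"budget:  {budget_provider:.4f} per accepted label")
print(f"premium: {premium_provider:.4f} per accepted label")
```

With these made-up numbers the provider with the higher headline rate ends up cheaper per usable label, which is the sense in which cheaper doesn’t always mean better.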

Now let’s review what you should look for in an annotation provider, and what you need to be careful of before choosing who to work with.

What to Look For in an Outsourced Annotation Provider?

Outsourcing data annotation is a reliable and cost-effective way to ensure training datasets are produced on time and within budget. Once an ML team has training data to work with, they can start testing a computer vision model. The quality, accuracy, and volume of annotated and labeled images and videos play a crucial role in computer vision project outcomes.

Consequently, you need a reliable, trustworthy, skilled, and outcome-focused data labeling service provider. Project leaders need to look for a partner who can deliver:

- High quality and levels of accuracy, especially when benchmarked against algorithmically-generated datasets;

- A provider with the right expertise and experience in your sector (especially when specialist skills are required, such as working with medical imaging datasets);

- An annotation partner that applies cutting-edge annotation best practices and automation tools as part of the process;

- An adaptable and responsive annotation partner. Project deadlines are often tight. Datasets might contain too many mistakes or too much bias, and need re-annotating, so you need to be confident an outsourced provider can handle this work.

- An annotation provider who can handle large dataset volumes without compromising timescales or quality.

What To Be Careful of When Choosing a Data Annotation Partner?

At the same time, ML and computer vision project leaders — those managing the outsourced relationship and budget — need to watch out for potential pitfalls.

Common pitfalls include:

- Annotators within the outsourcing provider teams who aren’t as skilled as others. Annotation is high-volume, mentally-taxing work, and providers often hire quickly to meet client dataset volume demands. When training is limited and the tools used aren’t cutting-edge, it could result in annotators who aren’t able to deliver in terms of accuracy or volume.

- Annotators can disagree with one another, either internally, or when labeled images and videos are sent to a client for review. It’s a red flag if there’s too much pushback and re-annotated datasets come back with limited quality or accuracy improvements.

- Watch out for the quality of the annotated data a provider delivers. Make sure you’ve got data scientists to run quality assurance (QA) and benchmarking processes before feeding data into machine learning models. Otherwise, poor-quality data is going to negatively impact the testing ability and outputs of computer vision models, and ultimately, whether or not an ML project solves the problems it’s tasked with solving.

Now let’s dive into the 7 best practice tips you need to know when working with an outsourced annotation provider.

7 Best Practice Tips For Working With an Annotation Outsourcing Company

Start Small: Commission a Proof of Concept Project (POC)

Data annotation outsourcing should always start with a small-scale proof of concept (POC) project, to test a new provider's abilities, skills, tools, and team. Ideally, POC accuracy should be in the 70-80% range. Feedback loops from ML and data ops teams can improve accuracy and outcomes, and reduce dataset bias, over time.

Benchmarking is equally important, and we cover that and the importance of leveraging internal annotation teams shortly.

Carefully Monitor Progress

Annotation projects often operate on tight timescales, dealing with large volumes of data being processed every day. Monitoring progress is crucial to ensuring annotated datasets are delivered on time, at the right level of accuracy, and at the highest quality possible.

As a project leader, you need to carefully monitor progress against internal and external provider milestones. Otherwise, you risk data being delivered months after it was originally needed to feed into a computer vision model. Once you’ve got an initial batch of training data, it’s easier to assess a provider for accuracy.
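
One simple way to track progress against a milestone is to project a finish date from the throughput observed so far. The sketch below assumes a constant daily labeling rate, which real projects rarely have, and the function name and figures are hypothetical.

```python
import math
from datetime import date, timedelta


def projected_finish(total_items: int, completed_items: int,
                     start: date, today: date) -> date:
    """Project the completion date from the average daily throughput so far."""
    elapsed_days = (today - start).days
    if elapsed_days <= 0 or completed_items <= 0:
        raise ValueError("need at least one elapsed day and one completed item")
    items_per_day = completed_items / elapsed_days
    remaining = total_items - completed_items
    return today + timedelta(days=math.ceil(remaining / items_per_day))


# Hypothetical project: 10,000 images, 2,000 labeled in the first 10 days.
finish = projected_finish(10_000, 2_000, start=date(2024, 1, 1), today=date(2024, 1, 11))
milestone = date(2024, 2, 15)
print(finish, "on track" if finish <= milestone else "behind schedule")
```

Re-running a check like this after every delivery makes slippage visible early, while there is still time to raise it with the provider.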

Monitor and Benchmark Accuracy

When the first set of images or videos is fed into an ML/AI-based or computer vision model, the accuracy might be 70%. A model is learning from the datasets it receives. Improving accuracy is crucial. Computer vision models need larger annotated datasets with a higher level of accuracy to improve the project outcomes, and this starts with improving the quality of training data.

One way to do this is to monitor and benchmark accuracy against open-source datasets and against imaging data your company has already used in machine learning models. Public benchmark datasets, such as COCO and numerous others, are equally useful for this.
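
For bounding-box annotations, a common way to score delivered labels against a trusted reference set is intersection over union (IoU). The following is a minimal sketch, assuming one box per image in `(x_min, y_min, x_max, y_max)` form and an illustrative 0.5 IoU threshold; the function names and data layout are assumptions for this example, not part of any particular tool.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ix_min, iy_min = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix_max, iy_max = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    intersection = max(0, ix_max - ix_min) * max(0, iy_max - iy_min)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


def benchmark_accuracy(delivered, reference, threshold=0.5):
    """Share of reference boxes matched by a delivered box at the IoU threshold.

    Both arguments map image_id -> box (one box per image, a simplification).
    Images missing from `delivered` count as misses.
    """
    hits = sum(
        1 for image_id, ref_box in reference.items()
        if image_id in delivered and iou(delivered[image_id], ref_box) >= threshold
    )
    return hits / len(reference)


reference = {"img_1": (0, 0, 10, 10), "img_2": (0, 0, 10, 10)}
delivered = {"img_1": (0, 0, 10, 10), "img_2": (5, 0, 15, 10)}
print(benchmark_accuracy(delivered, reference))
```

Real datasets usually have many boxes and classes per image, so a production check would also need box matching and per-class breakdowns.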

Keep Mistakes & Errors to a Minimum

Mistakes and errors cost time and money. Outsourced data labeling providers need a responsive process to correct them quickly, re-annotating datasets as needed.

With the right tools, processes, and proactive data ops teams internally, you can construct customized label review workflows to ensure the highest label quality standards possible. Using an annotation tool such as Encord can help you visualize the breakdown of your labels in high granularity to accurately estimate label quality, annotation efficiency, and model performance.

The more time and effort you put into reducing errors, bias, and unnecessary mistakes, the higher the level of annotation quality you can achieve when working closely with a dataset labeling provider.

Keep Control of Costs

Costs need to be monitored closely, especially when re-annotation is required. As a project leader, you need to ensure costs are in line with project estimates, with an acceptable margin for error. Every annotation project budget needs project overrun contingencies.

However, you don’t want this getting out of control, especially when any time and cost overruns are the fault of an external annotation provider. Agree on all of this before signing any contract, and ask to see key performance indicator (KPI) benchmarks and service level agreements (SLAs).

Measure performance against agreed timescales, quality assurance (QA) controls, KPIs, and SLAs to avoid annotation project cost overruns.
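
As a rough illustration of checking spend against an estimate plus contingency, the sketch below extrapolates total cost linearly from spend to date. The linear extrapolation, the 10% default contingency, the function name, and the figures are all assumptions for the example.

```python
def budget_status(estimate: float, spent: float, fraction_complete: float,
                  contingency: float = 0.10) -> dict:
    """Compare the projected total cost with the estimate plus a contingency.

    Assumes cost scales linearly with the share of work completed.
    """
    if not 0 < fraction_complete <= 1:
        raise ValueError("fraction_complete must be in (0, 1]")
    projected_total = spent / fraction_complete
    limit = estimate * (1 + contingency)
    return {
        "projected_total": projected_total,
        "limit": limit,
        "over_budget": projected_total > limit,
    }


# Hypothetical project: 100,000 estimate, 30,000 spent with 25% of labels delivered.
print(budget_status(estimate=100_000, spent=30_000, fraction_complete=0.25))
```

Re-annotation work can be folded into `spent` so that rework shows up in the projection rather than only at invoice time.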

Leverage In-house Annotation Skills to Assess Quality

Internally, the team receiving datasets from an external annotation provider needs to have the skills to assess images and video labels, and metadata for quality and accuracy. Before a project starts, set up the quality assurance workflows and processes to manage the pipeline of data coming in. Only once complete datasets have been assessed (and any errors corrected) can they be used as training data for machine learning models.
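
A lightweight version of such a QA workflow is to audit a random sample of each delivered batch and accept the batch only if the sampled error rate is low enough. This is a sketch under stated assumptions: the sample size, the 5% error threshold, and the function names are illustrative, and the review itself is still done by your in-house team.

```python
import random


def sample_for_review(item_ids, sample_size, seed=0):
    """Pick a reproducible random sample of delivered items for manual review."""
    items = list(item_ids)
    rng = random.Random(seed)
    return rng.sample(items, min(sample_size, len(items)))


def batch_passes(review_results, max_error_rate=0.05):
    """Accept a batch if the share of incorrect labels in the sample is small enough.

    review_results maps item_id -> True (label correct) or False (label wrong).
    """
    if not review_results:
        raise ValueError("no reviewed items")
    errors = sum(1 for is_correct in review_results.values() if not is_correct)
    return errors / len(review_results) <= max_error_rate


# Hypothetical batch of 1,000 items; reviewers find 2 bad labels in a sample of 50.
sample = sample_for_review(range(1000), sample_size=50)
results = {item_id: True for item_id in sample}
for item_id in sample[:2]:
    results[item_id] = False
print(batch_passes(results))
```

Batches that fail the check can go back to the provider for re-annotation before any of the data reaches model training.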

Use Performance Tracking Tools

Performance tracking tools are a vital part of the annotation process. We cover this in more detail next. With the right performance tracking tools and a dashboard, you can create label workflow tools to guarantee quality annotation outputs.

Clearly defined label structures reduce annotator ambiguity and uncertainty. You can more effectively guarantee higher-quality results when annotation teams use the right tools to automate image and video data labeling.

What Tools Should You Use to Improve Annotation Team Projects (In-house or Outsource)?

Performance Dashboards

Data operations team leaders need a real-time overview of annotation project progress and outputs. With the right tool, you can gain the insight and granularity you need to assess how an external annotation team is progressing.

Are they working fast enough? Are the outputs accurate enough? Questions that project managers need to ask continuously can be answered quickly with a performance dashboard, even when the annotators are working several time zones away.

Dashboards can show you a whole load of insights: a performance overview of every annotator on the project, annotation rejection and approval ratings, time spent, the volume of completed images/videos per day/team member, the types of annotations completed, and a lot more.
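
The kind of per-annotator overview described above can be approximated from a plain review log. The sketch below assumes a hypothetical log format, one record per completed item with the annotator’s name, whether the label was approved, and the time spent; it is not the export format of any specific tool.

```python
from collections import defaultdict


def summarize_annotators(records):
    """Aggregate completed items, approval rate, and average time per annotator."""
    totals = defaultdict(lambda: {"completed": 0, "approved": 0, "seconds": 0.0})
    for record in records:
        entry = totals[record["annotator"]]
        entry["completed"] += 1
        entry["approved"] += 1 if record["approved"] else 0
        entry["seconds"] += record["seconds"]
    return {
        name: {
            "completed": entry["completed"],
            "approval_rate": entry["approved"] / entry["completed"],
            "avg_seconds": entry["seconds"] / entry["completed"],
        }
        for name, entry in totals.items()
    }


# Hypothetical review log.
log = [
    {"annotator": "annotator_a", "approved": True, "seconds": 30.0},
    {"annotator": "annotator_a", "approved": False, "seconds": 50.0},
    {"annotator": "annotator_b", "approved": True, "seconds": 20.0},
]
for name, stats in summarize_annotators(log).items():
    print(name, stats)
```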

Example of the performance dashboard in Encord

Consensus Benchmarks

Annotation projects require consensus benchmarks to ensure accuracy. Applying annotations, labels, metadata, bounding boxes, classifications, keypoints, object tracking, and dozens of other annotation types to thousands of images and videos takes time. Mistakes are made. Errors happen.

Your aim is to reduce those errors, mistakes, and misclassifications as much as possible. To ensure the highest level of accuracy in datasets that are fed into computer vision models, benchmark datasets and other quality assurance tools can help you achieve this.
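
For classification-style labels, one simple form of consensus is a majority vote across several annotators, with low-agreement items flagged for expert review. The sketch below uses a two-thirds agreement threshold and a hypothetical function name; both are assumptions, and other consensus measures may suit other annotation types better.

```python
from collections import Counter


def consensus_label(votes, min_agreement=2 / 3):
    """Return (label, agreement) from annotator votes.

    The label is None when the most common vote falls below the agreement
    threshold, which signals that the item needs expert review.
    """
    if not votes:
        raise ValueError("no votes")
    label, count = Counter(votes).most_common(1)[0]
    agreement = count / len(votes)
    return (label if agreement >= min_agreement else None, agreement)


print(consensus_label(["car", "car", "truck"]))   # two of three annotators agree
print(consensus_label(["car", "truck", "bus"]))   # no consensus, flag for review
```

Tracking the share of items that fail consensus over time also gives an early signal that labeling instructions are ambiguous.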

Annotation Training

When working with a new provider, annotation training and onboarding for tools they’re not familiar with is time well spent. It’s worth investing in annotation training as required, especially if you’re asking an annotation team to do something they’ve not done before.

For example, you might have picked a provider with excellent experience, but they’ve never done human pose estimation (HPE) before. Ensure training is provided at this stage to avoid mistakes and cost overruns later on.

Annotation Automation Features

Annotation projects take time. Thankfully, there are now dozens of ways to speed up this process. With powerful and user-friendly tools, such as Encord, annotation teams can benefit from an intuitive editor suite and automated features.

Automation drastically reduces the workloads of manual annotation teams, ensuring you see results more quickly. Instead of drawing thousands of new labels, annotators can spend time reviewing many more automated labels. For annotation providers, Encord’s annotate, review, and automate features can accelerate the time it takes to deliver viable training datasets.

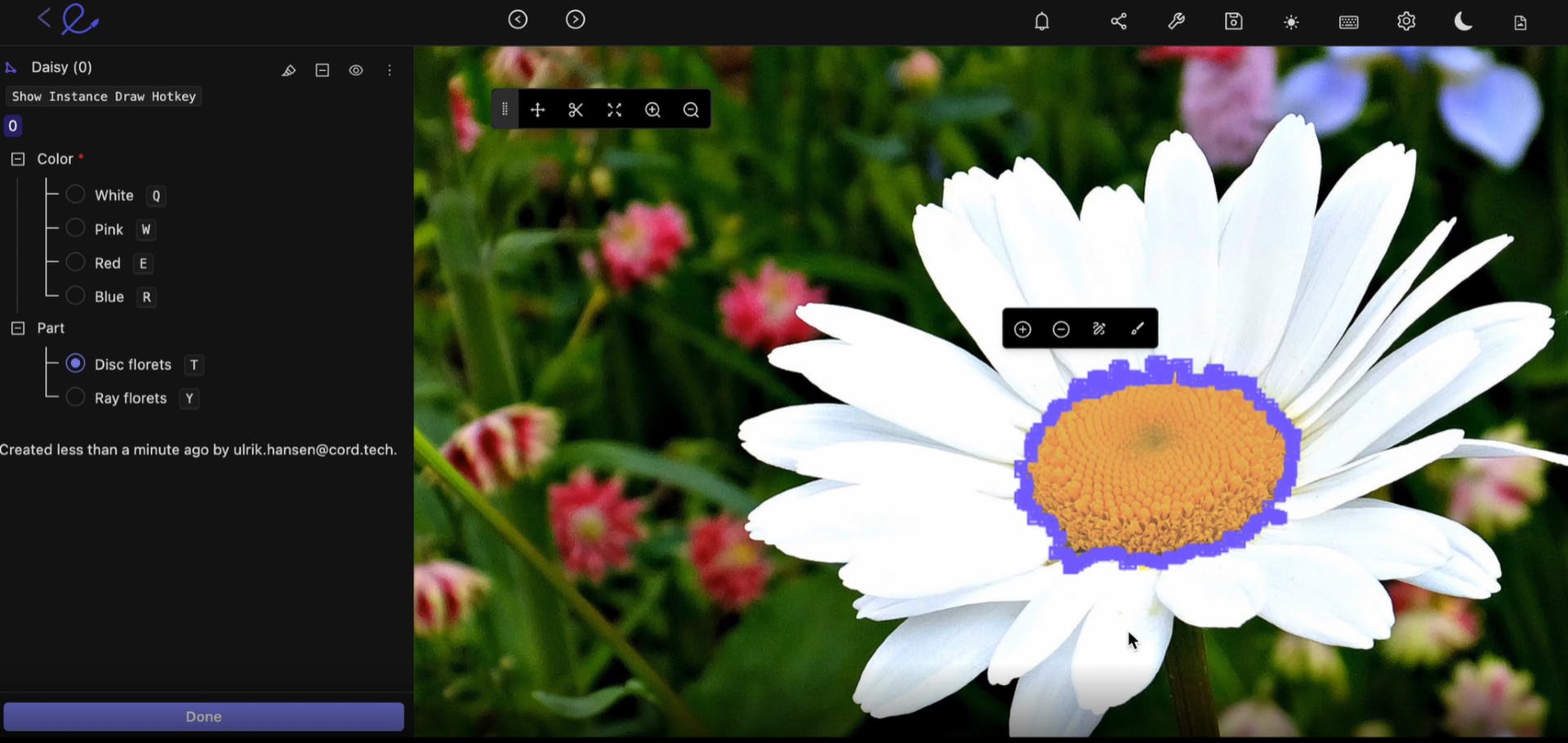

Automatic image segmentation in Encord

Flexible tools, automated labeling, and configurable ontologies are useful assets for external annotation providers to have in their toolkits. Depending on your working relationship and terms, you could provide an annotation team with access to software such as Encord, to integrate annotation pipelines into quality assurance processes and training models.

Summary and Key Takeaways

Outsource or keep image and video dataset annotation in-house? This is a question every data operations team leader struggles with at some point. There are pros and cons to both options.

In most cases, the cost and time efficiency savings outweigh the expense and headaches that come with recruiting and managing an in-house team of visual data annotators. Provided you find the right partner, you can establish a valuable long-term relationship. Finding the right provider is not easy.

It might take some trial and error and failed attempts along the way. The effort you put in at the selection stage will be worth the rewards when you do source a reliable, trusted, expert annotation vendor. Encord can help you with this process.

Our AI-powered tools can also help your data ops teams maintain efficient processes when working with an external provider, to ensure image and video dataset annotations and labels are of the highest quality and accuracy.

Data Annotation Outsourcing Services for Computer Vision - FAQs

How Do You Know You’ve Found a Good Outsourced Annotation Provider?

Finding a reliable, high-quality outsourced annotation provider isn’t easy. It’s a competitive and commoditized market. Providers compete for clients on price, using press coverage, awards, and case studies to prove their expertise.

It might take time to find the right provider. In most cases, especially if this is your organization’s first time working with an outsourced data annotation company, you might need to try and test several POC projects before picking one.

At the end of the day, assessing a provider’s quality, accuracy, and responsiveness, and benchmarking its datasets against the target outcomes, is the only way to truly judge whether you’ve found the right partner.

How to Find an Outsourced Annotation Partner?

When looking for an outsourced annotation and dataset labeling provider, apply the same principles used when outsourcing any mission-critical service.

Firstly, start with your network: ask people you trust — see who others recommend — and refer back to any providers your organization has worked with in the past.

Compare and contrast providers. Read reviews and case studies. Assess which providers have the right experience and sector-specific expertise, and which appear to be reliable and trustworthy. Price needs to come into your consideration, but don’t always go with the cheapest. You might be disappointed and find you’ve wasted time on a provider who can’t deliver.

It’s often an advantage to test several at the same time with a proof of concept (POC) dataset. Benchmark and assess the quality and accuracy of the datasets each provider annotates. In-house data annotation and machine learning teams can use the results of a POC to determine the most reliable provider you should work with for long-term and high-volume imaging dataset annotation projects.

What Are The Long-term Implications of Outsourced vs. In-house Annotation and Data Labeling?

In the long term, there are solid arguments for recruiting and managing an in-house team. You have more control and will have the talent and expertise internally to deliver annotation projects.

However, computer vision project leaders have to weigh that against an external provider being more cost and time-effective. As long as you find a reliable and trustworthy, quality-focused provider with the expertise and experience your company needs, then this is a partnership that can continue from one project to the next.

At Encord, our active learning platform for computer vision is used by a wide range of sectors - including healthcare, manufacturing, utilities, and smart cities - to annotate thousands of images and video datasets and accelerate their computer vision model development.

Frequently asked questions

Encord supports the entire data labeling to model evaluation process by allowing users to register their cloud data, curate, clean, and filter it within the platform. The workflow incorporates datasets, annotation projects, and ontologies to streamline the labeling process, and integrates models to enhance efficiency.

Yes, Encord can integrate with third-party human labelers and existing workflows, allowing teams to leverage external resources for labeling while maintaining control over the data preparation process. This flexibility supports various operational needs in building training datasets.

Encord emphasizes the collaboration between ML engineers and data labelers, recognizing that closer interaction can lead to better model improvements. This integration helps ensure that data labeling is aligned with the needs of the machine learning models being developed.

Encord helps customers prioritize their data by curating the most relevant samples for labeling, ensuring that only the best data is utilized. This targeted approach allows models to train more effectively without overwhelming human labelers with unnecessary data.

Encord offers insights into managing operational costs by analyzing annotation workflows and identifying areas for efficiency improvements. By controlling offshore costs and optimizing data handling, businesses can better manage their overall operational expenses.

Encord provides a comprehensive platform that supports data labeling for various formats including images, videos, text, and audio. This makes it a versatile one-stop solution for organizations looking to streamline their data annotation processes.

Encord provides a collaborative environment for labeling teams, facilitating real-time communication and coordination. This ensures that all team members can work together efficiently, resulting in faster and more accurate data annotation.

Absolutely, Encord is well-suited for teams that utilize external labelers. The platform facilitates collaboration between in-house teams and external annotators, ensuring a smooth workflow and consistent quality in data annotation.

Yes, Encord provides functionalities for label export, allowing users to download labels along with their associated metadata. This feature helps users maintain an organized dataset and facilitates further analysis.

Yes, Encord features automated export capabilities that facilitate seamless data and label transfers to your machine learning team, ensuring efficient collaboration and integration into your existing processes.