What Is Computer Vision In Machine Learning

Co-Founder & Co-CEO at Encord

Introduction

In 1956, John McCarthy, a young associate professor of mathematics, convened 10 mathematicians and scientists for a two-month study about “thinking machines”. The group decided to hold a summer workshop based on the assumption that if mathematicians and scientists could describe every aspect of learning in a way that enabled a machine to simulate it, then they could begin to understand how to make machines use language, form abstractions, and solve problems. Today, many computer scientists consider the resulting Dartmouth Summer Research Project on Artificial Intelligence the event that launched artificial intelligence (AI) as a field of study.

AI is now a wide-ranging branch of computer science that includes machine learning and deep learning, but overall it still focuses on building “thinking” machines: machines capable of demonstrating intelligence by performing tasks and solving problems that previously required human knowledge.

Because these thinking machines can’t yet think on their own, a fundamental aspect of AI is teaching machines to think. Much like a baby learning to make sense of the world around her, a computer must be taught to make sense of the data it’s given. A subdivision of AI called machine learning aims to teach computers how to make inferences from patterns within datasets, and ultimately, develop computer systems that can learn and adapt without explicit programming.

What is machine learning?

Machine learning uses algorithms, data, computing power, and models to train machines to learn from experience. Machine learning models make it possible for computers to improve continuously and learn from their mistakes.

Machine learning engineers build these models from mathematical algorithms. An algorithm is a sequence of instructions that tells the computer how to transform data into useful information. Data scientists typically design algorithms to solve specific problems and then run the algorithms on data so that they can “learn” to recognise patterns.

In a general sense, a machine learning model is a representation of what an algorithm is “learning” from processing the data. After running an algorithm on data, machine learning engineers save the rules, numbers, and other algorithm-specific data structures needed to make predictions – all of which combined make up the model. The model is like a program made up of the data and the instructions for how to use that data to make a prediction (a predictive algorithm).
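To make the idea of a model as "saved rules and numbers" concrete, here is a minimal sketch in plain Python. It assumes the simplest possible algorithm (ordinary least squares for a straight line) and made-up data; the names `fit_line`, `predict`, `hours`, and `scores` are hypothetical and chosen only for illustration. Running the algorithm on the data produces two numbers, and those two numbers plus the prediction rule are the model.

```python
import json


def fit_line(xs, ys):
    """Run the algorithm: ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    # The "model" is just the numbers the algorithm learned from the data.
    return {"slope": slope, "intercept": intercept}


def predict(model, x):
    """The instructions for using the saved numbers to make a prediction."""
    return model["slope"] * x + model["intercept"]


# Hypothetical training data (illustrative only).
hours = [1.0, 2.0, 3.0, 4.0]
scores = [3.0, 5.0, 7.0, 9.0]

model = fit_line(hours, scores)

# Saving and restoring the model only requires storing its learned numbers.
saved = json.dumps(model)
restored = json.loads(saved)
prediction = predict(restored, 5.0)
```

Real models store far more than two numbers, but the split is the same: a training step that produces parameters, and a prediction step that uses them.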

After using algorithms to design predictive models, data scientists must train the model by feeding it data and using human expertise to assess how well it makes predictions. The model combs through mountains of data, and – with the help of human feedback along the way – it learns to weigh diverse inputs. It uses these inputs to learn to identify patterns, categorise information, create predictions, make decisions, and more.

Machine learning is an iterative process, which means the model learns based on its past experiences, just like a human would. The machine learning model “remembers” what it learned from working with a previous dataset (where it performed well and where it didn’t), and it uses this feedback to improve its performance with future datasets. If needed, data scientists can tweak the algorithm that built the model to reduce errors in its outputs.
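The feedback loop described above can be sketched as a short training loop. This is an illustration under stated assumptions, not how any particular system is trained: it assumes a one-parameter model, mean squared error as the measure of "mistakes", and plain gradient descent as the update rule. The function names, data, learning rate, and epoch count are all hypothetical.

```python
def mean_squared_error(weight, xs, ys):
    """How wrong the current model is, averaged over the dataset."""
    return sum((weight * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def train(xs, ys, learning_rate=0.05, epochs=20):
    """Repeatedly measure the error and nudge the weight to reduce it."""
    weight = 0.0  # the model starts out knowing nothing
    history = []
    for _ in range(epochs):
        history.append(mean_squared_error(weight, xs, ys))
        # Gradient of the mean squared error with respect to the weight.
        gradient = sum(2 * (weight * x - y) * x for x, y in zip(xs, ys)) / len(xs)
        # Move the weight a small step in the direction that lowers the error.
        weight -= learning_rate * gradient
    return weight, history


# Hypothetical data in which each output is three times its input.
xs = [1.0, 2.0, 3.0]
ys = [3.0, 6.0, 9.0]

weight, history = train(xs, ys)
```

Each entry in `history` is the error measured before one update, so the list records the model getting less wrong with every pass over the data.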

Unlike a computer system that acts based on a predefined set of rules, after being trained on data, a machine learning model can perform tasks without being explicitly programmed to do so. However, the quality of the data that data scientists use to train the machine directly impacts how well the machine learns (more on that below).

What is meant by computer vision?

Machine learning has many applications: it’s used in speech recognition, traffic predictions, virtual assistance, email filtering, and more.

At Encord, we help organisations using a type of machine learning called computer vision to create high-quality training data. Our platform automates data annotation, evaluation, and management. Because the training data directly impacts a computer vision model’s performance, having high-quality training data is incredibly important for the success of a computer vision model.

Computer vision is, to some extent, what its name implies: a field of AI that aims to help computers “see” the world around them. Computer vision models attempt to mimic the function of the human visual system by teaching the computer how to take in visual information, analyse it, and reach conclusions based on this analysis.

Data scientists have created, and continue to create, different machine learning models for different uses. For computer vision, a commonly used type of model is the artificial neural network.

Artificial neural networks (ANN) are computing systems inspired by the patterns of human brain cells and the ways in which biological neurons signal to one another. ANNs are made up of interconnected nodes arranged into a series of layers. An individual node often connects to several nodes in the layer below it, from which it receives data, and several in the layer above it, to which it sends data.

The input layer receives the initial data fed to the model, and it connects with the hidden, internal layers that follow. When data enters the hidden layers, each node performs a series of computations (multiplying and adding data together in complex ways) to transform it into a useful output and to determine whether it should pass the data on to the next layer. When this data reaches the output layer, the model takes what it has learned and makes a prediction about the data.
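The flow from input layer to hidden layer to output layer can be sketched in a few lines of plain Python. This is a toy forward pass, assuming a sigmoid activation and hand-picked weights; neither comes from the text above, and a real network would learn its weights during training. The names `dense_layer`, `forward`, and the weight values are hypothetical.

```python
import math


def sigmoid(value):
    """Squash a number into the range (0, 1); one common activation choice."""
    return 1.0 / (1.0 + math.exp(-value))


def dense_layer(inputs, weights, biases):
    """Each node multiplies its inputs by weights, adds them up, then activates."""
    outputs = []
    for node_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(node_weights, inputs)) + bias
        outputs.append(sigmoid(total))
    return outputs


# Hypothetical hand-picked parameters (assumption: normally learned, not written by hand).
hidden_weights = [[0.5, -0.5], [1.0, 1.0]]  # two hidden nodes, two inputs each
hidden_biases = [0.0, -1.0]
output_weights = [[1.0, -1.0]]  # one output node fed by both hidden nodes
output_biases = [0.0]


def forward(inputs):
    """Pass data from the input layer through the hidden layer to the output layer."""
    hidden = dense_layer(inputs, hidden_weights, hidden_biases)
    return dense_layer(hidden, output_weights, output_biases)


prediction = forward([1.0, 1.0])
```

The activation function plays the role of deciding how strongly each node passes its signal on to the next layer.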

Neural networks allow computers to process, analyse, and understand images and videos, and they enable computers to extract meaningful information from visual input much as humans do. Through the use of such models, a computer can interpret a visual environment and make decisions based on that input. However, unlike the human visual system, which develops naturally over years, the computer has to be taught to “see” and make sense of a visual scene. Humans must train computer vision models to “see” by feeding them lots of high-quality data.

What are the different applications of computer vision?

Applications of computer vision vary depending on the type of problem the model is trying to solve, but some of the most common tasks are image processing and classification, object detection, and image segmentation.

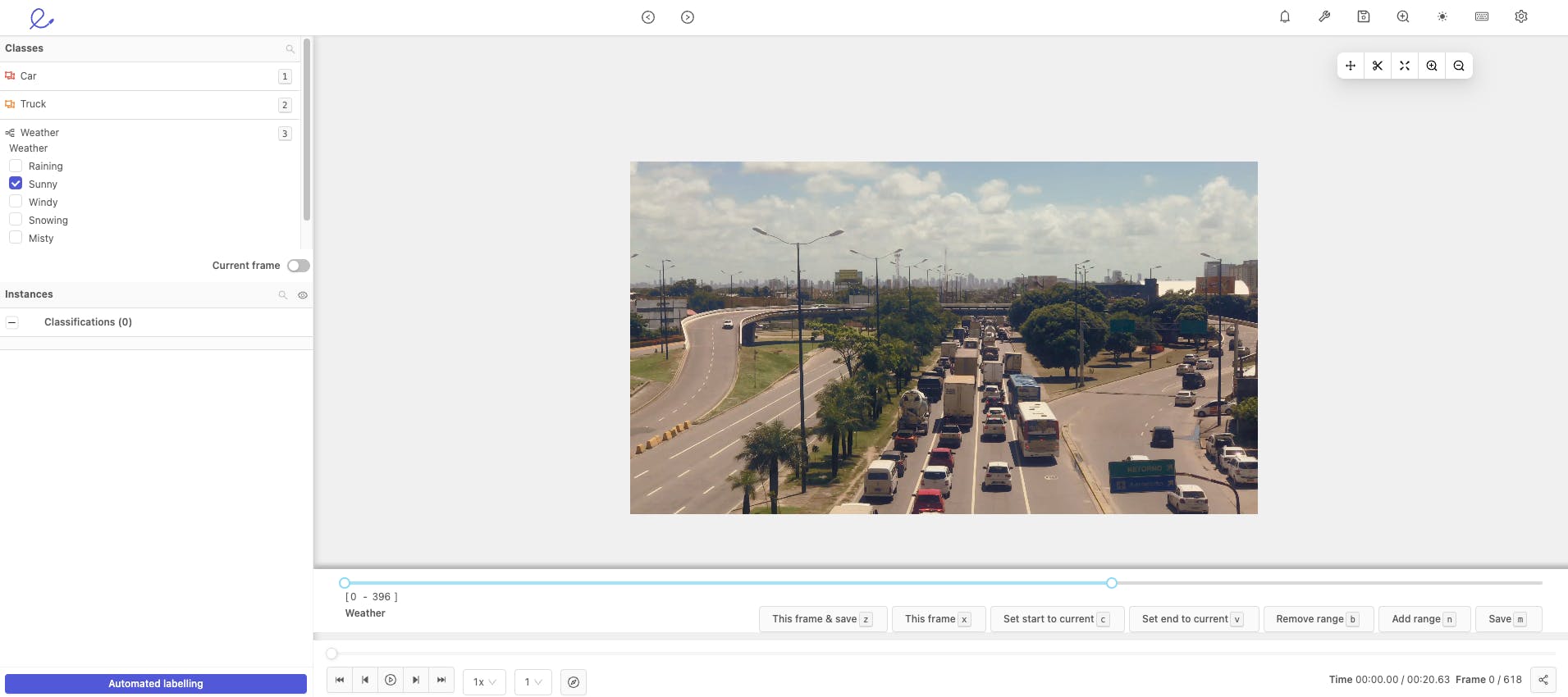

Example weather classification

Image classification is when a computer “sees” an image and can “categorise” it. Is there a house in this picture? Is this a picture of a dog or a cat? With a suitably trained image classification model, a computer can answer these questions.

When performing object detection, computer vision models learn to classify an object and detect its location. An object detection model could, for instance, identify that a car is in a video and track its movement from frame to frame.

Lastly, an image segmentation model distinguishes an object from its background and from other objects by assigning each pixel in the image to the object it belongs to. Compared to object detection, image segmentation provides a more granular understanding of the objects in an image.
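The difference between these three tasks is easiest to see in the shape of their outputs. The sketch below uses a tiny hand-made 5x5 "image" and a simple brightness threshold in place of a trained model, which is a loud simplification: real classification, detection, and segmentation models are learned from data. The function names and the image are hypothetical.

```python
# Toy 5x5 grayscale image: 0 is background, 1 is an object pixel (illustrative only).
image = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 0, 0, 0],
]


def segment(image, threshold=0.5):
    """Segmentation output: one True/False decision per pixel."""
    return [[pixel > threshold for pixel in row] for row in image]


def detect(mask):
    """Detection output: a box (top, left, bottom, right) around the object, or None."""
    coords = [(r, c) for r, row in enumerate(mask) for c, on in enumerate(row) if on]
    if not coords:
        return None
    rows = [r for r, _ in coords]
    cols = [c for _, c in coords]
    return (min(rows), min(cols), max(rows), max(cols))


def classify(mask):
    """Classification output: a single label for the whole image."""
    return "object" if any(any(row) for row in mask) else "empty"


mask = segment(image)
label = classify(mask)
box = detect(mask)
pixel_count = sum(sum(row) for row in mask)
```

Classification yields one label, detection yields a label plus a rectangle, and segmentation yields a decision for every pixel. The box here covers nine pixels, while the mask marks only the six that actually belong to the object.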

Computer vision plays an important role in many industries. Consider medical imaging, where doctors use AI to help them identify tumours. These computers have to learn to “see” the tumours as distinct from other body tissue. Similarly, the computers running self-driving cars must be taught to “see” and avoid pedestrians and to process visual information to produce meaningful insight, such as identifying street signs and interpreting what they mean.

So who teaches a computer vision model to distinguish between a stop sign and a yield sign? Humans do, by creating a well-designed model and feeding it high-quality training data.

Interested in learning more? Schedule a demo to better understand how Encord can help your company unlock the power of AI.

Frequently asked questions

Encord provides a robust platform designed specifically for real-time annotations, enabling annotators to quickly and efficiently label data as it is being processed. This system allows for one-time write-only annotations, making it a straightforward solution for teams focused on immediate data labeling without the need for extensive editing or updates.

Encord's annotation platform is designed to streamline the process of labeling data for computer vision tasks. It supports various data types and provides tools for efficient collaboration among teams, ensuring high-quality annotations that are crucial for training accurate machine learning models.

Encord can help streamline the annotation process for machine vision systems by providing a scalable platform to manage and validate the data collected from various camera systems. This includes tools for tracking data and enabling better orientation detection through image segmentation.

Encord is equipped to handle multimedia data, including video and audio content, making it suitable for projects involving complex audio-visual annotations. The platform facilitates efficient labeling processes, which is crucial for training machine learning models in these domains.

Encord can indeed serve as an intermediary between machine learning algorithms and machine vision tools. This allows for seamless integration, enabling users to build machine learning models that can effectively analyze visual data for quality control and defect detection.

Encord works with both innovative AI startups and more traditional enterprises. We provide tailored support to help organizations integrate AI and computer vision into their existing structures, ensuring that they can adopt these technologies effectively and efficiently.

Yes, Encord is equipped to handle various computer vision tasks, including object counting and flow detection. The platform allows users to annotate and manage datasets effectively, which is essential for training models designed to perform these specific functions.

Yes, Encord is versatile and can effectively support both autonomous vehicle projects as well as a wide range of general computer vision applications. Its flexible annotation tools and workflows are designed to cater to the unique requirements of different projects.

Yes, Encord's technology can be integrated with other applications for video analysis, such as using vision LLMs to analyze videos and retrieve information. This integration allows for enhanced functionalities like video embedding and social media analysis.

Computer vision is a core component of Encord's platform, enabling the analysis and interpretation of visual data from sports events. This technology empowers teams to extract valuable insights and automate various processes within their workflows.