The Complete Guide to Image Annotation for Computer Vision

TL;DR: Image annotation is the human work behind almost every computer vision model, labelling images so a model can learn to recognise specific objects, patterns, or categories in production. This post covers what image annotation is actually for, how it differs from classification, the common annotation types, the challenges teams run into, and the best practices that separate datasets that ship from datasets that stall.

Behind every working computer vision model is a pile of carefully labelled images, and behind those, a team of annotators making thousands of small decisions about what each pixel represents. Image annotation is what turns raw visual data into something a model can actually learn from, whether the task is spotting a specific make and model of car across a dataset or flagging defects on a production line. Done well, it sets the ceiling for how good your model can get; done poorly, no amount of clever architecture or active learning will save you. Here's what to know before you start labelling.

What is Image Annotation?

Image annotation is the process of manually labeling images in a dataset so they can be used to train artificial intelligence and machine learning computer vision models. The quality of those labels is crucial to a model's success.

In machine learning, the data-centric AI approach recognizes the importance of the data a model is trained on, even more so than the model or set of models that are used.

So, if you’re an annotator working on an image or video annotation project, creating the most accurately labeled inputs can mean the difference between success and failure. Annotating images and objects within images correctly will save you a lot of time and effort later on.

Computer vision models and tools aren’t yet smart enough to correct human errors at the project's manual annotation and validation stage. Training datasets are more valuable when the data they contain has been correctly labeled.

As every annotator team manager knows, image annotation is more nuanced and challenging than many realize. It takes time, skill, a reasonable budget, and the right tools to make these projects run smoothly and produce the output that data operations teams, ML teams, and their leaders need.

What is the Goal of Image Annotation?

The goal of image annotation is to produce accurately labelled images that train a computer vision model to recognise specific objects, patterns, or categories. Labelled images form the training dataset that the model learns from early in a project. A model trained on the first batch might reach around 70% accuracy, and ML teams iterate by adding more annotated data to push that number higher.

Annotation can be done in two ways:

- Manually, where a human labels each image one by one. This is slow and scales poorly with dataset size, but remains essential for tasks requiring domain expertise or subjective judgement (e.g. medical imaging).

- With automation, using techniques like active learning and model-assisted pre-labeling. These methods select the most informative data points to label, reducing manual effort without sacrificing accuracy.

In practice, the best annotation workflows combine both: automation handles the volume, while humans handle the edge cases and quality control.
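To make "select the most informative data points" concrete, here is a minimal sketch of uncertainty sampling, one common active learning strategy. It assumes a model that returns class probabilities per image. The function names, image ids, and probabilities are hypothetical, and ranking by entropy is only one of several possible selection criteria.

```python
import math


def entropy(probs):
    """Shannon entropy of a predicted class distribution (higher = less certain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_labeling(predictions, budget):
    """Return the `budget` image ids whose predictions are the most uncertain."""
    ranked = sorted(predictions, key=lambda item: entropy(item[1]), reverse=True)
    return [image_id for image_id, _ in ranked[:budget]]


# Hypothetical model outputs: (image id, class probabilities).
predictions = [
    ("img_001.jpg", [0.98, 0.01, 0.01]),  # model is confident
    ("img_002.jpg", [0.40, 0.35, 0.25]),  # model is unsure
    ("img_003.jpg", [0.70, 0.20, 0.10]),
]

# With a budget of two, the two least certain images go to human annotators.
to_label = select_for_labeling(predictions, budget=2)
```

Images the model is already confident about are left out of the manual queue, which is where the reduction in effort comes from.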

Image Annotation in Machine Learning

Image annotation in machine learning is the process of labeling or tagging an image dataset with annotations or metadata, usually to train a machine learning model to recognize certain objects, features, or patterns in images.

Image annotation is an important task in computer vision and machine learning applications, as it enables machines to learn from the data provided to them. It is used in various applications such as object detection, image segmentation, and image classification, each of which is discussed briefly below.

1. Object detection

Object detection is a computer vision technique that involves detecting and localizing objects within an image or video. The goal of object detection is to identify the presence of objects within an image or video and to determine their spatial location and extent within the image. Annotations play a crucial role in object detection as they provide the labeled data for training the object detection models. Accurate image annotations help to ensure the quality and accuracy of the model, enabling it to identify and localize objects accurately. Object detection has various applications such as autonomous driving, security surveillance, and medical imaging.

2. Image classification

Image classification is the process of categorizing an image into one or more predefined classes or categories. Image annotation is crucial in image classification as it involves labeling images with metadata such as class labels, providing the necessary labeled data for training computer vision models. Accurate image annotations help the model learn the features and patterns that distinguish between different classes and improve the accuracy of the classification results. Image classification has numerous applications such as medical diagnosis, content-based image retrieval, and autonomous driving, where accurate classification is crucial for making correct decisions.

3. Image segmentation

Image segmentation is the process of dividing an image into multiple segments or regions, each of which represents a different object or background in the image. The main goal of image segmentation is to simplify and/or change the representation of an image into something more meaningful and easier to analyze. There are three types of image segmentation techniques: instance, semantic, and panoptic segmentation.

4. Instance segmentation

Instance segmentation is a technique that involves identifying and delineating individual objects within an image, such that each object is represented by a separate segment. In instance segmentation, every instance of an object is uniquely identified, and each pixel in the image is assigned to a specific instance. It is commonly used in applications such as object tracking, where the goal is to track individual objects over time.

5. Semantic segmentation

Semantic segmentation involves labeling each pixel in an image with a specific class or category, such as “person”, “cat”, or “unicorn”. Unlike instance segmentation, semantic segmentation does not distinguish between different instances of the same class. The goal of semantic segmentation is to understand the content of an image at a high level, by separating different objects and their backgrounds based on their semantic meaning.

6. Panoptic segmentation

Panoptic segmentation is a hybrid of instance and semantic segmentation, where the goal is to assign every pixel in an image to a specific instance or semantic category. In panoptic segmentation, each object is identified and labeled with a unique instance ID, while the background and other non-object regions are labeled with semantic categories. The main goal is to provide a comprehensive understanding of the content of an image, by combining the advantages of both instance and semantic segmentation.
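The difference between the three segmentation types is easiest to see in the label maps themselves. The sketch below uses a made-up 2x6 "image" of two cats on grass, with hypothetical class and instance ids, and represents each panoptic pixel as a (class id, instance id) pair. Real tools store masks in their own formats, so treat this purely as an illustration.

```python
# Semantic mask: one class id per pixel (0 = grass, 1 = cat).
semantic = [
    [1, 1, 0, 0, 1, 1],
    [1, 1, 0, 0, 1, 1],
]

# Instance mask: one id per object (0 = no object, 1 = first cat, 2 = second cat).
instance = [
    [1, 1, 0, 0, 2, 2],
    [1, 1, 0, 0, 2, 2],
]


def panoptic(semantic_mask, instance_mask):
    """Combine both masks into a (class_id, instance_id) pair for every pixel."""
    return [
        [(class_id, instance_id) for class_id, instance_id in zip(sem_row, inst_row)]
        for sem_row, inst_row in zip(semantic_mask, instance_mask)
    ]


pan = panoptic(semantic, instance)

classes = {c for row in semantic for c in row}            # semantic view: grass, cat
objects = {i for row in instance for i in row if i != 0}  # instance view: cat 1, cat 2
segments = {pixel for row in pan for pixel in row}        # panoptic view: grass, cat 1, cat 2
```

The semantic mask cannot tell the two cats apart, the instance mask says nothing about the grass, and the panoptic map keeps both pieces of information.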

💡 To learn more about image segmentation, read Guide to Image Segmentation in Computer Vision: Best Practices

What is the Difference Between Classification and Annotation in Computer Vision?

Although classification and annotation are both used to organize and label images to create high-quality image data, the processes and applications involved are somewhat different.

Image classification is usually an automatic task performed by image labeling tools.

Image classification comes in two flavors: “supervised” and “unsupervised”. When this task is unsupervised, algorithms examine large numbers of unknown pixels and attempt to classify them based on natural groupings represented in the images being classified.

Supervised image classification involves an analyst trained in datasets and image classification to support, monitor, and provide input to the program working on the images.

Annotation in computer vision models, on the other hand, always involves human annotators, at least at the annotation and training stage of any image-based computer vision model. Even when automation tools support a human annotator or analyst, creating bounding boxes or polygons and labeling objects within images requires human input, insight, and expertise.

What Should an Image Annotation Tool Provide?

Before we get into the features annotation tools need, annotators and project leaders need to remember that the outcomes of computer vision models are only as good as the human inputs. Depending on the level of skill required, this means making the right investment in human resources before investing in image annotation tools.

When it comes to picking image editors and annotation tools, you need one that can:

- Create flexible labels across every image annotation type

- Create frame-level and object classifications

- Provide a wide range of powerful automation features

While there are some fantastic open-source image annotation tools out there (like CVAT), they don’t have this breadth of features, which can cause problems for your image labeling workflows further down the line. Now, let’s take a closer look at what this means in practice.

Flexible Labels Across Every Annotation Type:

A good annotation interface provides teams the freedom to label any image type without hitting tool limitations. At minimum, annotators need access to the four most common annotation types, bounding boxes, polygons, polylines, and keypoints, plus the ability to add detailed labels and metadata on top.

Getting this right at the setup stage pays off downstream: richer, more accurate labels mean faster, more reliable results once the data hits your computer vision model.

Coverage of the Three Core CV Tasks: Classification, Detection, Segmentation:

A strong annotation tool should support all three foundational computer vision tasks:

- Classification applies nested or higher-order classes to individual images or entire series. It's widely used in self-driving cars, traffic surveillance, and visual content moderation.

- Object detection recognises and localises objects within an image using vector labels. Once an object is labelled a few times in training, automated tools can replicate the labels across large volumes of images. Common applications include medical imaging (e.g. gastroenterology), retail, and drone surveillance.

- Segmentation assigns a class to each pixel (or group of pixels) using segmentation masks. It's especially valuable in medical fields like stroke detection and microscopy pathology, as well as retail use cases such as virtual fitting rooms.

Model-Assisted Pre-Annotation and Automation:

Automation features turn annotation from a manual bottleneck into a review workflow. The biggest unlock is model-assisted pre-annotation: training a model on your existing labelled data, then using its predictions to pre-label new images so annotators only need to correct mistakes rather than start from scratch.

This approach delivers three compounding benefits:

- Speed: annotators review and refine instead of labelling every object from zero

- Cost: fewer human hours per image at the same (or better) accuracy

- Consistency: the model applies the same logic across the dataset, reducing inter-annotator variability

Look for tools that also let you import model predictions programmatically, so you can plug your own models into the loop and accelerate project delivery end-to-end.
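As a rough illustration of how imported predictions might feed a review workflow, the sketch below routes model predictions into queues by confidence. The `Prediction` type, the queue names, and both thresholds are assumptions made for this example; they are not taken from any particular tool's API, and sensible thresholds depend on your model and use case.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    label: str
    box: tuple  # (x_min, y_min, x_max, y_max) in pixels
    confidence: float


def to_prelabels(predictions, accept_threshold=0.9, discard_threshold=0.3):
    """Split model predictions into review queues based on confidence."""
    queues = {"quick_review": [], "needs_correction": []}
    for pred in predictions:
        if pred.confidence < discard_threshold:
            continue  # too unreliable to be worth showing to an annotator
        if pred.confidence >= accept_threshold:
            queues["quick_review"].append(pred)
        else:
            queues["needs_correction"].append(pred)
    return queues


# Hypothetical predictions for one image.
predictions = [
    Prediction("car", (10, 20, 110, 90), 0.95),
    Prediction("car", (200, 40, 260, 85), 0.60),
    Prediction("bus", (5, 5, 15, 12), 0.10),
]
queues = to_prelabels(predictions)
```

Annotators still see every pre-label that survives the discard threshold, so the human review step stays in the loop.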

What are the Most Common Types of Image Annotation?

The four most commonly used types of image annotation are bounding boxes, polygons, polylines, and keypoints. We cover each of them in more detail here:

1. Bounding Box

Drawing a bounding box around an object in an image, such as an apple or tennis ball, is one of several ways to annotate and label objects. With bounding boxes, you can draw rectangular boxes around any object, and then apply a label to that object. The purpose of a bounding box is to define the spatial extent of the object and to provide a visual reference for machine learning models that are trained to recognize and detect objects in images. Bounding boxes are commonly used in applications such as object detection, where the goal is to identify the presence and location of specific objects within an image.
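A bounding box is usually stored as four numbers. The sketch below assumes corner coordinates `(x_min, y_min, x_max, y_max)` in pixels, which is only one of several conventions in use, and computes intersection over union (IoU), a widely used measure of how closely two boxes overlap. IoU is not something this post prescribes. It is included as one way to compare an annotator's box with a reference box.

```python
def box_area(box):
    """Area of an (x_min, y_min, x_max, y_max) box."""
    return max(0, box[2] - box[0]) * max(0, box[3] - box[1])


def iou(box_a, box_b):
    """Intersection over union of two boxes: 1.0 = identical, 0.0 = no overlap."""
    overlap = (
        max(box_a[0], box_b[0]),
        max(box_a[1], box_b[1]),
        min(box_a[2], box_b[2]),
        min(box_a[3], box_b[3]),
    )
    intersection = box_area(overlap)  # zero when the boxes do not overlap
    union = box_area(box_a) + box_area(box_b) - intersection
    return intersection / union if union > 0 else 0.0


# Two hypothetical annotations of the same object by different annotators.
annotator_1 = (0, 0, 10, 10)
annotator_2 = (5, 0, 15, 10)
overlap_score = iou(annotator_1, annotator_2)
```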

2. Polygon

A polygon is another annotation type that can be drawn freehand. On images, these annotation lines can be used to outline static objects, such as a tumor in medical image files.
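In data terms, a polygon annotation is an ordered list of vertices. The sketch below assumes `(x, y)` pixel coordinates and a simple (non-self-intersecting) outline. It computes the enclosed area with the shoelace formula and derives the tightest bounding box around the polygon. The example coordinates are made up.

```python
def polygon_area(points):
    """Area of a simple polygon from its ordered vertices (shoelace formula)."""
    total = 0.0
    count = len(points)
    for k in range(count):
        x1, y1 = points[k]
        x2, y2 = points[(k + 1) % count]  # wrap around to close the outline
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def enclosing_box(points):
    """Tightest (x_min, y_min, x_max, y_max) box containing every vertex."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return (min(xs), min(ys), max(xs), max(ys))


# Hypothetical triangular outline.
outline = [(0, 0), (4, 0), (0, 3)]
area = polygon_area(outline)
box = enclosing_box(outline)
```

Comparing the polygon's area with the area of its enclosing box gives a feel for how much background a bounding box would include for the same object.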

3. Polyline

A polyline is a way of annotating and labeling something static that continues throughout a series of images, such as a road or railway line. Often, a polyline is applied in the form of two static and parallel lines. Once this training data is uploaded to a computer vision model, the AI-based labeling will continue where the lines and pixels correspond from one image to another.

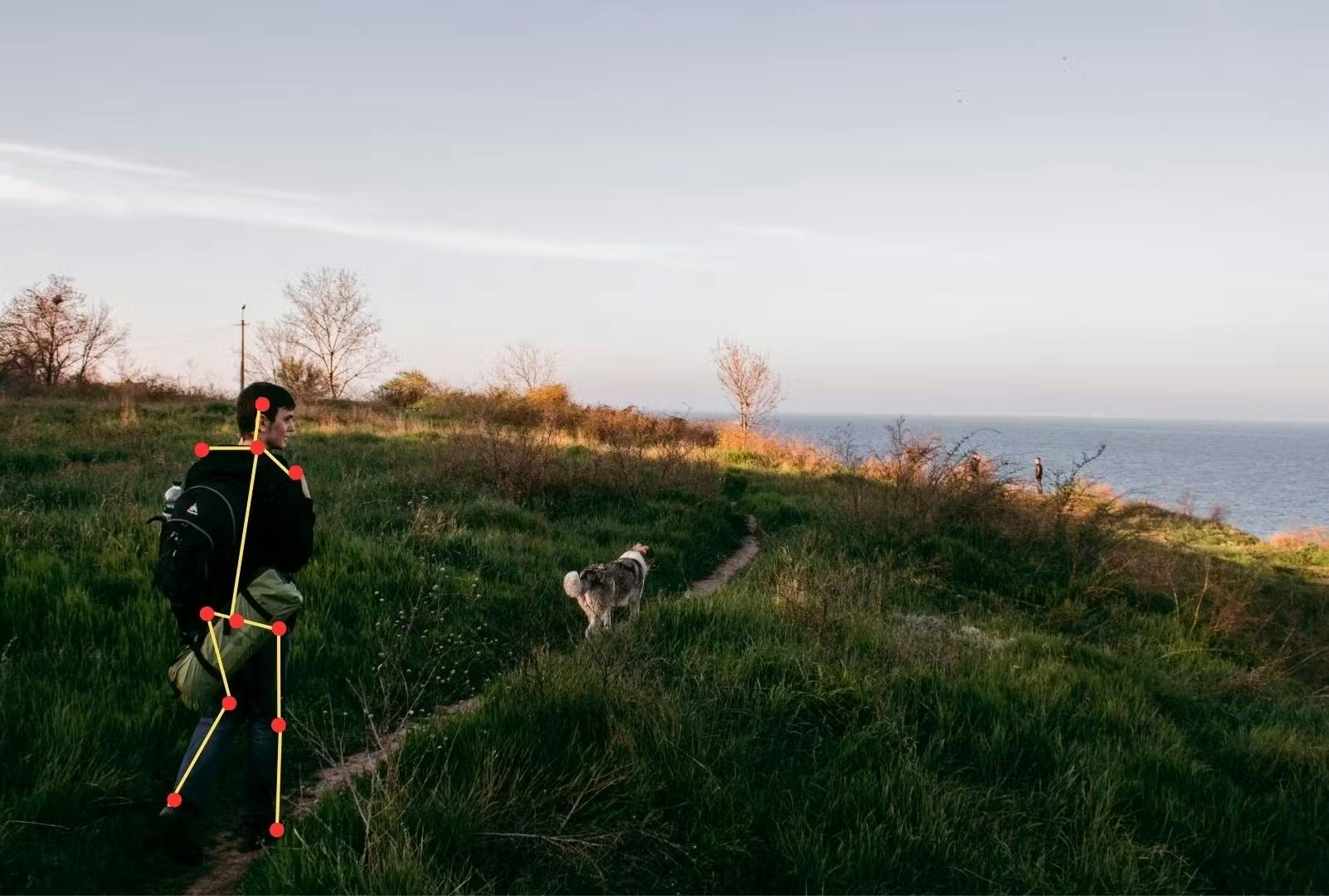

4. Keypoints

Keypoint annotation involves identifying and labeling specific points on an object within an image. These points, known as keypoints, are typically important features or landmarks, such as the corners of a building or the joints of a human body. Keypoint annotation is commonly used in pose estimation, action recognition, and object tracking, where the labeled keypoints are used to train machine learning models to recognize and track objects in images or videos. The accuracy of keypoint annotation is critical for these applications' success, as labeling errors can lead to incorrect or unreliable results.
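Because keypoint errors propagate directly into the model, simple automated checks can catch some of them before review. The sketch below assumes each keypoint is stored as `(x, y, visibility)` under a name, with a visibility flag in the spirit of the COCO keypoint convention (1 = labeled but occluded, 2 = labeled and visible). The skeleton definition and the helper names are hypothetical.

```python
# Hypothetical skeleton: the keypoints every annotation is expected to contain.
REQUIRED_KEYPOINTS = ["left_shoulder", "right_shoulder", "left_elbow", "right_elbow"]


def missing_keypoints(annotation, required=REQUIRED_KEYPOINTS):
    """Names of required keypoints the annotator has not placed."""
    return [name for name in required if name not in annotation]


def keypoints_in_image(annotation, width, height):
    """True if every labeled keypoint lies inside the image bounds."""
    return all(0 <= x < width and 0 <= y < height for x, y, _ in annotation.values())


# Hypothetical annotation: name -> (x, y, visibility).
annotation = {
    "left_shoulder": (120, 80, 2),
    "right_shoulder": (180, 82, 2),
    "left_elbow": (110, 140, 1),
}
missing = missing_keypoints(annotation)
inside = keypoints_in_image(annotation, width=640, height=480)
```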

What are the common challenges in the Image Annotation Process?

While image annotation is crucial for many applications, such as object recognition, machine learning, and computer vision, it can be challenging and time-consuming.

Here are some of the main challenges in the image annotation process:

1. Maintaining Consistency Across Ambiguous and Complex Images

ML models need consistent, high-quality labels to make accurate predictions, but ambiguity and complexity in images make consistency hard.

- Ambiguous images can be interpreted in multiple valid ways. An image of a bird sitting on a dog could be labelled "dog", "bird", or both, and different annotators will make different calls.

- Complex images contain dozens or hundreds of objects that all need labelling. A crowded street scene might include people, cars, traffic signs, and buildings, each requiring its own annotation.

The fix is an ontology: a formal structure that defines the labels, classes, and relationships annotators are allowed to use. By forcing everyone on the team to reference the same vocabulary and rules, ontologies cut subjectivity at the source and keep labelling consistent across the dataset, no matter who's annotating or how messy the image is.
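One way to picture an ontology is as a small schema that every label is checked against. The sketch below is a simplified illustration: the class names, shapes, attributes, and the `validate` helper are all hypothetical, and real annotation tools define ontologies in their own formats.

```python
# Hypothetical ontology: allowed classes, the shape each must use, and allowed attributes.
ONTOLOGY = {
    "vehicle": {"shape": "bounding_box", "attributes": {"type": ["car", "bus", "truck"]}},
    "person": {"shape": "polygon", "attributes": {}},
    "lane_marking": {"shape": "polyline", "attributes": {}},
}


def validate(label, ontology=ONTOLOGY):
    """Return a list of problems; an empty list means the label conforms."""
    spec = ontology.get(label["class"])
    if spec is None:
        return [f"unknown class: {label['class']}"]
    problems = []
    if label["shape"] != spec["shape"]:
        problems.append(f"expected shape {spec['shape']}, got {label['shape']}")
    for name, value in label.get("attributes", {}).items():
        allowed = spec["attributes"].get(name)
        if allowed is None:
            problems.append(f"unknown attribute: {name}")
        elif value not in allowed:
            problems.append(f"invalid value for {name}: {value}")
    return problems


ok = validate({"class": "vehicle", "shape": "bounding_box", "attributes": {"type": "bus"}})
bad = validate({"class": "vehicle", "shape": "polygon", "attributes": {"type": "bike"}})
unknown = validate({"class": "dog", "shape": "bounding_box"})
```

Rejecting labels that fall outside the schema at annotation time is what keeps the vocabulary identical across annotators.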

2. Reducing Inter-annotator variability

Image annotation is subjective: one annotator might call an object a "chair", another might call it a "stool". Left unchecked, this variability degrades dataset quality and shows up downstream as inconsistent model performance.

The most effective fix is rigorous annotation guidelines paired with annotator training. When everyone on the team works from the same labelling criteria, edge-case decisions become repeatable instead of random.

Tesla offers a well-known example: at AI Day 2021, the team revealed they follow an 80-page annotation guide for their self-driving car program. That level of documentation may sound excessive, but it's what keeps thousands of annotators producing labels consistent enough to train a model that has to make safety-critical decisions on the road.
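To see whether guidelines and training are actually reducing variability, it helps to measure agreement. The sketch below computes Cohen's kappa, a standard agreement statistic for two annotators that corrects for chance agreement. It is not a metric named in this post, and the label lists are made up for illustration.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labelling the same items (1.0 = perfect agreement)."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty set of items")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    # Agreement expected by chance, given how often each annotator uses each label.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label for everything
    return (observed - expected) / (1 - expected)


# Hypothetical labels from two annotators for the same four images.
annotator_a = ["chair", "chair", "stool", "chair"]
annotator_b = ["chair", "stool", "stool", "chair"]
kappa = cohens_kappa(annotator_a, annotator_b)
```

Tracking a score like this before and after a guideline update gives a simple signal of whether the change made edge-case decisions more repeatable.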

3. Balancing Annotation Cost Against Accuracy

High-accuracy annotation requires trained annotators, specialised tools, and quality control workflows, all of which add up fast on large datasets. The question isn't "how do we get perfect labels?" but "how much accuracy does this specific use case actually need?"

A surgical imaging model and a coarse image classifier sit at opposite ends of this trade-off. Pixel-perfect labels matter for the former; broad class accuracy is fine for the latter. Spending the same per-image budget on both wastes resources on one and under-invests in the other.

In practice, teams strike the balance by combining a few tactics:

- Prioritise critical data: Invest accuracy budget in the images that most influence model performance

- Blend automated and manual annotation: Let models handle volume, humans handle edge cases

- Outsource selectively: Use specialised providers for high-skill tasks (e.g. medical imaging)

- Iterate on the process: review label quality regularly and refine guidelines as the dataset grows

Choosing the Right Annotation Tool

The right tool depends on what you're annotating, how big your dataset is, and how much quality control you need. Most tools fall short on at least one of these dimensions, which is why "suitable" varies so much team-to-team.

A few criteria worth weighing before you commit:

- Task coverage: Does the tool support the annotation types your project needs (bounding boxes, polygons, segmentation masks, keypoints)?

- Ease of use: Annotators ramp faster on tools with clean UX; complex interfaces slow throughput and increase errors

- Cost structure: Free open-source tools work for small projects but often lack the automation and QC features that pay for themselves at scale

- Compatibility: The tool should integrate with your existing data formats and ML pipeline

- Scalability: Can it handle millions of images without breaking?

- Quality control: Look for inter-annotator agreement metrics, review workflows, and the ability to correct annotations in bulk

If you're evaluating options, we've put together a curated list of the best image annotation tools for computer vision.

What are the best Practices for Image Annotation for Computer Vision?

1. Ensure raw data (images) are ready to annotate

At the start of any image-based computer vision project, you need to ensure the raw data (images) are ready to annotate. Data cleansing is an important part of any project. Low-quality and duplicate images are usually removed before annotation work can start.
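Duplicate removal is one part of data cleansing that is easy to automate. The sketch below hashes raw file contents and keeps the first file seen for each distinct hash. It only catches byte-identical copies; near-duplicates such as resized or re-encoded images would need a different technique. The file names and byte strings are placeholders.

```python
import hashlib


def drop_exact_duplicates(images):
    """Given a dict of name -> raw file bytes, keep the first file for each distinct content."""
    seen = set()
    kept = []
    for name, data in images.items():
        digest = hashlib.sha256(data).hexdigest()
        if digest in seen:
            continue  # identical bytes already kept under another name
        seen.add(digest)
        kept.append(name)
    return kept


# Placeholder contents standing in for real image files.
images = {
    "cam1_0001.png": b"\x89PNG-frame-a",
    "cam1_0002.png": b"\x89PNG-frame-b",
    "copy_of_0001.png": b"\x89PNG-frame-a",
}
unique_names = drop_exact_duplicates(images)
```

In a real pipeline the bytes would come from reading each file from disk rather than from an in-memory dictionary.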

2. Understand and apply the right label types

Annotators need to understand and apply the right types of labels, depending on what an algorithmic model is being trained to achieve. If an AI-assisted model is being trained to classify images, class labels need to be applied. However, if the model is being trained to apply image segmentation or detect objects, then the coordinates for bounding boxes, polylines, or other semantic annotation tools are crucial.

3. Create a class for every object being labeled

AI/ML or deep learning algorithms usually need data that comes with a fixed number of classes. Hence the importance of using custom label structures and inputting the correct labels and metadata, to avoid objects being classified incorrectly after the manual annotation work is complete.
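A fixed class list typically becomes a mapping from class names to integer ids before training. The sketch below uses a hypothetical class list and shows one way to encode labels while failing loudly on anything outside that list, rather than silently mapping it to a wrong class.

```python
# Hypothetical fixed class list agreed before annotation starts.
CLASSES = ["background", "car", "pedestrian", "traffic_sign"]
CLASS_TO_INDEX = {name: index for index, name in enumerate(CLASSES)}


def encode_labels(labels):
    """Convert class names to integer ids, rejecting names outside the class list."""
    unknown = sorted(set(labels) - set(CLASS_TO_INDEX))
    if unknown:
        raise ValueError(f"labels outside the class list: {unknown}")
    return [CLASS_TO_INDEX[label] for label in labels]


encoded = encode_labels(["car", "pedestrian", "car"])
```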

4. Annotate with a powerful user-friendly data labeling tool

Once the manual labeling is complete, annotators need a powerful, user-friendly tool to implement accurate annotations that will be used to train the AI-powered computer vision model. With the right tool, this process becomes much simpler and more cost- and time-effective.

Annotators can get more done in less time, make fewer mistakes, and have to manually annotate far fewer images before feeding this data into computer vision models.

Key Takeaways: Image Annotation for Computer Vision

Image annotation sets the ceiling on what a computer vision model can achieve: no architecture or active learning loop will rescue a poorly labelled dataset. We've covered what image annotation actually is and how it differs from classification, the four most common annotation types (bounding boxes, polygons, polylines, and keypoints), and how they map to object detection, image segmentation, and image classification tasks. We've also walked through the main challenges teams hit (inconsistent data, inter-annotator variability, balancing cost with accuracy, and choosing the right tool), alongside the best practices that keep annotation projects on track: cleaning raw data before labelling, applying the right label types for the task, and creating a class for every object.

If you're looking for an image annotation tool that handles every label type, scales with automation features like model-assisted pre-labelling, and gives you the ontology controls to keep annotations consistent across your team, get in touch to request a trial of Encord.

Frequently asked questions

Encord provides solutions to tackle the complexities associated with annotating and curating low-quality images, like those often found in property listings. The platform enables users to create high-quality training labels, making it easier to define what constitutes an appealing room, thus enhancing the overall data quality.

Encord offers a range of annotation services specifically tailored for satellite imagery, including curation, prioritization, and detailed annotation tasks. These services are designed to enhance the overall efficiency and effectiveness of data labeling for geospatial projects.

Encord includes features that streamline the annotation process, helping users work more efficiently. These features are designed to minimize disruptions and enhance productivity, allowing users to focus on their tasks without the frustrations common in other annotation tools.

Encord employs advanced techniques to identify out-of-distribution images that can have the most impact on model performance. This targeted approach ensures that the annotation process focuses on data that will enhance the overall effectiveness of machine learning models.

Encord supports geotiff formats that contain multiple spectral bands, allowing users to annotate images that have been purchased or sourced from archives. The platform is designed to handle high-resolution imagery effectively, making it suitable for detailed analysis.

Encord supports various annotation types for satellite data, including bounding boxes for objects like ships and annotations for specific features such as clouds in images. This flexibility enables precise data preparation for machine learning tasks.

Encord includes features that facilitate the curation of images, ensuring that your annotation team can efficiently manage and organize tasks. This helps in reducing the manual effort involved in selecting and preparing images for annotation.

Yes, Encord allows users to select and annotate specific subsets of images based on heuristics or particular issues, such as camera calibration. This targeted approach helps in addressing unique challenges within the training process.

Users can add custom metadata to images in Encord, such as the date the image was captured or the location of the vending machine. This metadata assists in better filtering and organizing datasets for training.

Encord prefers using uncompressed image formats such as TIFF and PNG for high-quality annotation. These formats maintain the integrity of the data, which is crucial for precise pixel-level analysis.